ALSO

The story is told through the camera, which is used to manipulate the elements of the scene, focusing viewers on what they need to see and know and on how they should feel about what is going on. The composition of a shot affects the mood and sets which elements in the shot are visible and most important. Get out there and have a go.

OCCLUSION

The effect of blocking the light source.

CAMERA SETTING OPTIONS in MAYA

There are two ways to create a new camera in Maya. One is from the panel’s menu with Panels, Perspective, which places the camera away from the origin like a default Perspective camera. The other is via the Create menu, which places the camera at the origin.

Camera’s new point of interest.

CAMERAS

- Camera (single node)

- Camera and Aim (double node, camera and aim node): useful for following an object with the camera pivoting to follow its aim point

- Camera, Aim and Up (triple node, camera, aim and up node): orients the camera’s up direction, making it possible to animate the side-to-side rotation of the camera

- Stereo

- Multi Stereo Rig

- Stereoscopic or stereo camera to render 3D movies

- Orthographic, lack perspective and do not have a vanishing point

- Adjusts the background colour

- Image Planes for the background for that particular camera or view panel, can import video as an image sequence

DISPLAY OPTIONS

- Resolution Gate: renderable area

- Film Gate: display only

- Overscan: does not change the rendered image, only the display, can resize the view without changing the film gate that will render.

CLIPPING PLANES

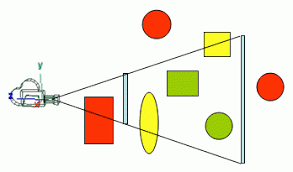

Near and far clipping planes are imaginary planes located at two particular distances from the camera along the camera’s sight line. They determine how much of a scene is seen by the camera in the viewport. Only objects between a camera’s two clipping planes are rendered in that camera’s view. Any parts of objects in the scene closer to the camera than the near clipping plane or farther from the camera than the far clipping plane do not appear in the viewport. If objects disappear when moving the camera, it may be because of the clipping planes.

If clipping planes are set far apart and there are a number of objects clustered together, each object will have a depth value that is very similar to others. If these depth numbers are rounded-off, the values can become identical, resulting in artefacts.
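The rounding problem described above can be sketched numerically. This is a minimal illustration only: it assumes a conventional perspective depth mapping to the range 0..1 and a 24-bit integer depth buffer, neither of which is taken from Maya's documentation, and the function names are hypothetical.

```python
def depth_value(z, near, far):
    """Map a camera-space distance z to a normalised 0..1 depth value.

    Assumes the common perspective mapping in which depth is linear in 1/z,
    so most of the available precision is spent close to the near plane.
    """
    return (far / (far - near)) * (1.0 - near / z)


def stored_depth(z, near, far, bits=24):
    """Round the normalised depth to an integer, as a depth buffer would."""
    levels = (1 << bits) - 1
    return round(depth_value(z, near, far) * levels)


# Two surfaces half a unit apart, roughly 1000 units from the camera.
z_a, z_b = 1000.0, 1000.5

# Clipping planes set very far apart.
wide = (stored_depth(z_a, 0.001, 100000.0), stored_depth(z_b, 0.001, 100000.0))

# Clipping planes set tightly around the objects.
tight = (stored_depth(z_a, 1.0, 2000.0), stored_depth(z_b, 1.0, 2000.0))

print("wide planes :", wide, "identical" if wide[0] == wide[1] else "distinct")
print("tight planes:", tight, "identical" if tight[0] == tight[1] else "distinct")
```

With the planes far apart the two surfaces collapse onto the same stored value, which is the kind of condition that produces artefacts. Tightening the planes around the objects keeps the values apart.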

Auto Render Clip Plane will automatically set the near and far clipping planes most optimally depending on where objects are in the scene. This may compromise Z-depth information for another purpose. When Auto Render Clip Plane is Off the camera’s values will be used.

FRUSTUM

Is the volume that contains everything that is potentially (there may be occlusions) visible on the screen. This volume is defined according to the camera’s settings, and when using a perspective projection takes the shape of a truncated pyramid.

The type of clipping occurring at the far clipping plane is at the object level, not at the triangle level. Geometry will be cut at the near clipping plane.

Film Back 101 WHAT IS FILM BACK AND WHY DO I NEED TO KNOW?

The film back corresponds to the size of the film negative, measured in millimeters.

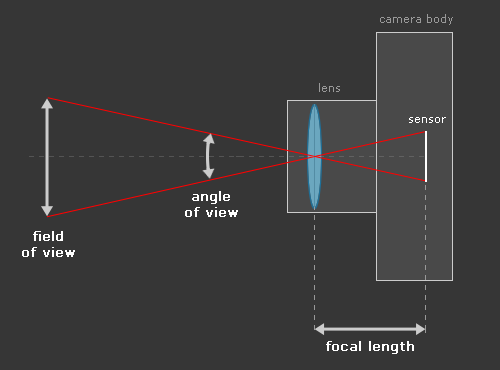

“Film back” is common terminology for the dimensions of a film frame’s or electronic sensor’s imaging area. Focal length is the optical magnification power of a lens. The field of view (FOV) (aka angle of view or viewing frustum angle) will be different on cameras with the same film back sizes using lenses of different focal lengths. The FOV will also be different if the cameras use lenses with the same focal lengths, but have different film back sizes. FOV is determined by the relationship between film back and focal length.

In a real camera, the size of the film back determines how wide a given focal length of lens looks. Like a real camera, Maya uses the relationships between focal length, sizes of objects, and the scale of the film back to determine how the field of view and depth of field should look.
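The relationship between film back, focal length and field of view can be sketched with the standard pinhole-camera formula, FOV = 2 · atan(film back / (2 · focal length)). The function name and the sample values below are illustrative assumptions, not values read from Maya.

```python
import math


def angle_of_view(film_back_mm, focal_length_mm):
    """Return the angle of view in degrees along one film back dimension."""
    return math.degrees(2.0 * math.atan(film_back_mm / (2.0 * focal_length_mm)))


# Same film back (36 mm wide), lenses of different focal lengths.
for focal in (18.0, 35.0, 50.0, 100.0):
    print(f"36 mm back, {focal:5.1f} mm lens: {angle_of_view(36.0, focal):6.2f} degrees")

# Same focal length (35 mm), different film back sizes.
for back in (36.0, 24.0, 12.0):
    print(f"{back:4.1f} mm back, 35 mm lens: {angle_of_view(back, 35.0):6.2f} degrees")
```

Both loops change the field of view, which is the point made above: neither the film back nor the focal length determines the FOV on its own.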

ASPECT RATIO

Each image is made up of pixels and pixels have an aspect ratio

- square pixel aspect ratio x/y = 1/1 =1.0

- non-square pixel aspect ratio x/y = 1/1.1 = 0.9

- device aspect ratio, device aspect = image aspect x pixel aspect, 1.48 x 0.9 = 1.33

- film aspect ratio, describes the region defined by the film gate, the camera aperture attribute represented as a ratio, film aspect = x/y = 0.816/0.612 = 1.33
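The ratios in the list above can be checked with a few lines of arithmetic. This is a sketch under stated assumptions: the 720 x 486 image size is an assumed example chosen because it gives the 1.48 image aspect quoted above, and the function names are hypothetical.

```python
import math


def pixel_aspect(pixel_width, pixel_height):
    """Width of one pixel divided by its height."""
    return pixel_width / pixel_height


def device_aspect(image_width_px, image_height_px, pixel_aspect_ratio):
    """Device aspect = image aspect x pixel aspect."""
    return (image_width_px / image_height_px) * pixel_aspect_ratio


def film_aspect(horizontal_aperture, vertical_aperture):
    """Film gate width divided by film gate height (any consistent unit)."""
    return horizontal_aperture / vertical_aperture


print("square pixel      :", pixel_aspect(1.0, 1.0))
print("non-square pixel  :", round(pixel_aspect(1.0, 1.1), 2))

device = device_aspect(720, 486, 0.9)   # assumed 720 x 486 image, 0.9 pixel aspect
film = film_aspect(0.816, 0.612)        # camera aperture values from the list above
print("device aspect     :", round(device, 2))
print("film aspect       :", round(film, 2))

# The device and film aspect ratios agree here, to within rounding.
print("gates match       :", math.isclose(device, film, abs_tol=0.01))
```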

ANGLE OF VIEW

FOCAL LENGTH

As the focal length changes, so does the angle of view. As the focal length is extended the angle of view gets narrower; as it is shortened the angle of view gets wider.

Specifies the length of the lens. The lower the focal length (short lens), the wider the view, with a very low number distorting the image. With higher focal lengths the subject seems closer to the camera. Focal-length zooming can create optical distortions and is different from trucking the camera, which physically changes the position of the camera. A zoom lens makes the subject larger or smaller within the frame simply by shifting the lens elements inside to change focal lengths. When matching CG to a photograph or video, match the camera’s focal length.

Understanding Focal Length – usually represented in millimeters (mm), is the basic description of a photographic lens. It is not a measurement of the actual length of a lens, but a calculation of an optical distance from the point where light rays converge to form a sharp image of an object to the digital sensor or 35mm film at the focal plane in the camera. The focal length of a lens is determined when the lens is focused at infinity.

The focal length tells us the angle of view—how much of the scene will be captured—and the magnification—how large individual elements will be. The longer the focal length, the narrower the angle of view and the higher the magnification. The shorter the focal length, the wider the angle of view and the lower the magnification.

Things to consider:

- angle of view, how much of the scene will be captured

- magnification, how large individual elements in the image will be

- narrow angle of view has higher magnification

- wide angle of view has lower magnification

- wider angle of view stretches the edges of the frame

- compression, appearance of objects being closer to each other than they actually are

Understand Focal Length in 5 Minutes: In short, the focal length of the lens is the measure of how “zoomed in” your lens is. Much like looking through binoculars, you may be at 40 mm and be able to see an entire mountain, or zoom in to 400mm and only see one tree on the mountain. The lens is being repositioned in the camera, which changes the distance between the lens and the film gate. When the focal length increases, objects appear larger, the camera zooms in and the viewable area decreases. When the focal length decreases, the lens moves back toward the film gate, the viewable area increases and objects appear smaller.

The focal length measurement tells the photographer what the angle of view will be. The angle of view means how wide of an area is visible in the picture. It also conveys the magnification of far-away objects in the photo.

The focal length of the camera has a big impact on the mood of the scene. Adjusting the focal length affects the zoom, meaning it can flatten or exaggerate the perspective of the scene. This can increase the drama by exaggerating the perspective, or create a different type of mood by flattening out the view, which reduces the perspective distortion and results in large elements that feel distant.

APERTURE

The camera aperture relates to the focal length in that different film backs have different normal lenses. A normal lens is neither telephoto nor wide-angle; it closely approximates normal vision. As the size of the camera aperture increases, a longer focal length is required to achieve normal perspective. This is why a 35mm camera uses a 50mm lens as its normal lens, while the same 50mm lens on a smaller format would appear to be telephoto in nature. A normal focal length for a camera is a focal length that equals the diagonal measurement of the camera aperture in millimetres, which means finding the hypotenuse, the longest side of a right-angled triangle.
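The diagonal rule can be worked through as a short calculation. The sketch below assumes the aperture is given either in millimetres or in inches (converted at 25.4 mm per inch); the function name and sample sizes are illustrative only.

```python
import math

MM_PER_INCH = 25.4


def normal_focal_length_mm(aperture_width_mm, aperture_height_mm):
    """Diagonal of the camera aperture, i.e. the hypotenuse of width and height."""
    return math.hypot(aperture_width_mm, aperture_height_mm)


# A 36 x 24 mm frame (assumed example of a 35mm still frame).
full_frame = normal_focal_length_mm(36.0, 24.0)

# A 0.816 x 0.612 inch aperture, converted to millimetres first.
small_gate = normal_focal_length_mm(0.816 * MM_PER_INCH, 0.612 * MM_PER_INCH)

print(f"36 x 24 mm aperture      : {full_frame:.2f} mm")
print(f"0.816 x 0.612 in aperture: {small_gate:.2f} mm")
```

The larger aperture gives the longer normal focal length, in line with the paragraph above. The conventional 50mm normal lens is somewhat longer than the strict diagonal of a 36 x 24 mm frame.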

DEPTH of FIELD

Is a photographic effect, where objects, within a certain range of distance, remain sharply focused.

F Stop value represents the distance in front of and behind the focus distance that will remain in focus. A low value represents a short distance that will be in focus. A high value results in very little blur due to the deeper range in focus. The lower the F Stop value, the smaller the in-focus region will be.

A camera’s exposure settings determine depth of field (the region of sharp focus), and whether or not subject matter is crisp or blurred by motion. Depth of field is the distance between the nearest and farthest objects in a scene that appear acceptably sharp in an image; the decrease in sharpness is gradual on each side of the focused distance. When everything is in focus the image has a large depth of field, or deep focus; when only a narrow range is in focus it has a small depth of field, or shallow focus. Depth of field can help separate the background from the foreground elements in the overall composition, add to the storytelling and increase the sense of depth.

To create an image that has wider depth of field (more area in front of and behind the subject that is in focus) use high fStop values, such as f16, f22, f32. To achieve narrow depth of field (less area in front and behind the subject that is in focus) use low fStop values such as f2.8, f4 or f5.6. For more information on fStops, see fStop (aperture) and shutter speed/angle. Depending on the fStop and Focus Region Scale values, parts of the object may or may not be in focus.
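The effect of high and low fStop values can be illustrated with the standard thin-lens depth of field approximation used in photography. This is a sketch, not a description of how Maya computes its blur: the 0.03 mm circle of confusion, the 50 mm lens and the 3 m focus distance are all assumed example values, and the function names are hypothetical.

```python
import math


def hyperfocal_mm(focal_mm, f_stop, coc_mm=0.03):
    """Hyperfocal distance for a given focal length, f-stop and circle of confusion."""
    return focal_mm ** 2 / (f_stop * coc_mm) + focal_mm


def depth_of_field_mm(focal_mm, f_stop, focus_mm, coc_mm=0.03):
    """Return (near limit, far limit) of acceptable sharpness in millimetres."""
    h = hyperfocal_mm(focal_mm, f_stop, coc_mm)
    near = focus_mm * (h - focal_mm) / (h + focus_mm - 2.0 * focal_mm)
    if focus_mm >= h:
        far = math.inf  # everything beyond the near limit is acceptably sharp
    else:
        far = focus_mm * (h - focal_mm) / (h - focus_mm)
    return near, far


# 50 mm lens focused at 3 m, across a range of f-stops.
for f_stop in (2.8, 5.6, 16.0, 22.0):
    near, far = depth_of_field_mm(50.0, f_stop, 3000.0)
    print(f"f/{f_stop:<4}: sharp from {near:7.0f} mm to {far:7.0f} mm")
```

The in-focus range widens as the f-stop number rises, matching the guidance above to use high values for wide depth of field and low values for narrow depth of field.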

Adjusting Depth of Field can create a more realistic mood. Measure the distance between the camera and the subject. In the camera’s Attribute Editor, under the Depth of Field section, enter the distance. F Stop is used to determine whether the iris will be opened or closed, determining how much of an Out Focus effect will be applied. A lower value increases the Out Focus effect. Focus Region Scale manually configures the focal region. A large value corresponds to a greater focal region.

IMAGE PLANES

Are 2D texture mapped planes that are connected to a camera, perpendicular to the lens axis and can be used for such things as creating environments, tracing concept sketches or matching live action. Can be attached directly to the camera or cameras building the nodes and connections.

The To Size fit option on the image plane will alter the original aspect ratio of the image to make it fit the aspect ratio of the film gate.

MATCHING LIVE ACTION

Refers to the process of positioning and animating objects in a scene relative to a background live action sequence of images. The key to matching live action is to understand the meaning of several different aspect ratios. The resolution gate and film gate aspect ratios need to match precisely.

MOTION BLUR

Is an optical phenomenon that occurs when an object moves fast in front of a camera, with the object looking blurred as it crosses the frame. The shutter angle determines the blur path length. The Number of Exposures is how many samples are calculated. Attributes include Blur By Frame and Blur Length in the Render Settings, and the camera’s Shutter Angle under the camera’s Special Effects.

Motion blur is the streak-like effect that occurs when shooting a still image or video, because your subjects are moving rapidly through the frame, or the camera exposure is particularly long (i.e. time-lapse photography). This effect can be found in human eyes as well. If your eye moves past an object (or vice versa), the image will have a motion blur, unless you’re tracking the object at the same speed, which is called “smooth pursuit”. When an object changes position while the shutter is open, the movement shows up as a blur.

Backlit: Shining a light on those who work behind the camera

BACKLIT is a collaborative effort between WIFVNE and High Output Academy with a mission to shine a light on those working behind the camera.

The BACKLIT videos aim to demystify the industry as a whole and the Lighting and Grip & Electric departments specifically. The goal is to empower and inspire those who are interested in being involved in the industry and to showcase experience and insight from established professionals. This is accomplished in two ways: by providing a low-stress/high engagement on-set “learning lab” experience, and by producing videos for distribution through High Output and WIFVNE channels.

HDRI IMAGES

An HDRI is a panoramic photo which covers all angles from a single point and contains a large amount of data (usually 32 bits per pixel per channel), which can be used for the illumination of a CG scene. A true HDRI will be in a format that can hold that much data (HDR/EXR/TIFF). It will look just like any other image, until you adjust the exposure and discover that it secretly stores much more vivid colors than you could see before.

HDR Shop is an interactive graphical user interface image processing and manipulation system designed to create, view and manipulate High-Dynamic Range images.

PTGui is panoramic image stitching software for Windows, macOS and Linux. Originally started as a Graphical User Interface for Panorama Tools (hence the name), PTGui has evolved into a full featured, industry leading photo stitching application.

3D LENS FOR DSLR CAMERA and SMARTPHONES

The Kúla Deeper stereo lens is attached to a camera lens thread for high quality stereoscopic 3D photography.

- Use existing lens features like VR, autofocus and metering.

- View images in 3D on the camera display using the included stereo viewer

- Generate any 3D format using the accompanying image processing software Kúlacode

- Compatible with Nikon, Canon, Sony, Sigma and the rest of the gang.

Kúla Bebe 3D lens is attached to any smartphone with a simple clip. It comes with a paper stereoviewer for smartphones, the CinemaBox for viewing the 3D content right away. To make sure you have the fun you deserve, Kúla Bebe also comes with old school red/cyan anaglyph glasses. Kúla Bebe is in production and the limited first batch will be delivered autumn 2017.

DON’T LET LEDs RUIN YOUR SHOOT

We often talk about how important it is to get your exposures correct, and rightly so; correct exposure is fundamental to the creation of good images. There’s no question. It’s Page 1 Day 1 of Cinematography. We make sure to always have a light meter with us to ensure what we’re intending to commit to film is… as intended. Today we have powerful new tools, like false color and waveforms, to check our values as well. We can easily make sure, even by just looking at the monitor, that our brightness and contrast is exactly where we want it to be.

Disney’s Robotic Stuntman is Insane

It’s a bird, it’s a plane, it’s Disney’s robot?

A WIDER LENS: AUSTRALIAN CAMERA WORKFORCE DEVELOPMENT AND DIVERSITY

DP REVIEW

DPReview has been leading the way in digital camera reviews and information since 1999, and this page is your launch pad for all our camera-related content. You’ll find everything you need to choose – and get the most from – your digital camera on these pages. Use the links below to check out our famous in-depth camera reviews and group tests, search for a camera with the features you want, compare two or more camera models or simply browse camera specifications, user reviews, sample galleries and pictures.

News & Articles

6K RAW In-Camera

Making of Mad Max: Fury Road from ACS Victoria with John Seale ACS ASC and David Burr ACS

NDI®|HX Camera for Android Users

On this episode of NDI TV we speak to Adam Taylor aka EposVox, about the all new NDI®|HX Camera for Android app.

Cinematography is still hard

Vary your video composition: 10 types of camera shots

Until you’ve experienced being on the other side of the camera, moviegoers might not realize that they’ve been engaged throughout the storyline because of the range of camera shots in the film. It’s not just writing and acting that pushes the narrative forward (don’t get me wrong — they’re very important too!), but the actual composition of each frame can mean something and keep viewers interested.

Essential Guide: HDR For Cinematography

Although we’ve been using camera log curves, in the guise of gamma, for as long as we’ve been broadcasting television, the real impact they provide has started to become apparent as we move to HDR. Not only do they form a type of video compression, the camera log curves also add to the aesthetic quality of the image and to get the best out of HDR broadcast engineers, technologists, and their managers, must all understand the impact of this technology.

The use of Motion Capture (MoCap) is no anomaly in the world of VFX. For years, the technique has seen actors suiting up in professional spandex and painting dots on their faces, all to capture their expressions and movements so they can live on through their animated counterparts. But now, thanks to machine learning, this process may be set to change.

Microsoft’s undersea data center now has a webcam with fish swimming past 27.6 petabytes of data

If you’ve always wanted to watch fish swim past a data center with 27.6 petabytes of storage, stop surfing around as today is your lucky day. Microsoft has taken the oppor-tuna-ty to install a webcam next to its undersea data center, offering live views of just how well the metal container is rusting and the hundreds of fish suddenly interested in cloud data and artificial intelligence. The software maker originally sunk a data center off the Scottish coast in June to determine whether the company can save energy by cooling it in the sea itself, or if it should leave it to salmon else.

SAM CAHILL, #ADOBECREATIVESIDE INFLUENCER

I use many different effects for my videos, but the hyperzoom is one of my favorites. I’ve made a tutorial on how to achieve the effect.

Cinematographer Toby Oliver on shooting ‘Get Out’

I’d done Wolf Creek 2 with Greg McLean here in Australia, and then I moved to the US. I’ve got two kids, teenagers now, so it was a big call and there was risk involved, but I was lucky. Soon after I got there, within two or three months, Greg rang up and said, I’m doing a Blumhouse movie – are you interested? I did a good job on The Darkness – Greg was happy and they were happy – and then they asked me to do some reshoots, which Blumhouse almost always do. They’ll change the ending or fix something that’s not quite right.

Toby Oliver – Shooting Get Out

Making his feature debut behind the camera on 1994’s black and white prison drama, Everynight… Everynight, Toby Oliver has worked in a wide variety of genres and modes over the subsequent couple of decades, having shot the likes of Looking for Alibrandi, Tom White, the teen series Lockie Leonard, Last Train to Freo, and Beneath Hill 60. But of late he’s been in demand as a cinematographer of horror, culminating in his work on the most successful and culturally resonant chiller in recent memory, Jordan Peele’s Get Out.

The Power of Shooting with a Shallow Depth of Field

Here’s how shooting with a shallow depth of field can immerse your audience like nothing else. Choosing how you will shoot your project might be just as hard as writing the script. With all the different lenses and cameras available today, choosing the right piece of equipment can be difficult. The creator and director of 2017’s The Handmaid’s Tale is a shining example of knowing exactly how to communicate ideas and tell stories — all through the lens.

The Difference Between the Zoom and Dolly Shot

Many aspiring directors and filmmakers conflate the zoom and dolly shot. You’ll even see video supercuts online that claim to be a collection of zooms but also contain dolly shots or the combination dolly zoom shot. All of these camera shots can be effective: while some directors use these shots to create comic moments, others aim for surprise, intensity, visual turmoil or nostalgic homage to classic films. The zoom and the dolly shot contain subtle differences and are created with different techniques. How do you tell the difference between them? Which one is more appropriate for your next short film or feature? The below guide will help you learn more about these camera shots and filmmaking terms.

ZACUTO What we do, innovate inspire create. USA-made, production-grade, filmmaking camera accessories and rigs are designed by filmmakers for filmmakers. We create products designed to make your life easier and your long shooting days more comfortable and streamlined.

Our line of Next Generation Recoil rigs are all about perfect balance for all day shooting. With a Zacuto Recoil rig you can take your hands away and have the rig stay balanced on your shoulder. Don’t try this with a competitor rig!

Aerial Film Australia

Airstoc – Professional Drone Aerial Footage Marketplace.

Avengers: Age of Ultron Scenes Shot with BlackMagic PCC. Cinematographer Ben Davis used Blackmagic Design Pocket Cinema Camera for much of the footage for super hero blockbuster.

Canon’s new $30,000 video camera can see where you can’t

Cinematographer John Seale Rolls Down ‘Fury Road’

Top Cinematographers Reveal Their Favourite Movie Moments

A beginner’s Guide to Cinematographers

Drone Production Company – Fancy Drone Camerawork

Daniel Smukalla: Director of Photography Seoul, South Korea.

DJI Inspire 1: Everything you need for aerial filmmaking, integrated into an elegant, ready-to-fly system.

Edgertronic Affordable High-Speed Camera Will Officially Be Released

Kick Starter: Color 1280×1024 High Speed Video Camera – Up to 17,791 Frames/Sec. – Powerful – Easy to Use – Price Starting Under $5K

GoPro Shrinks the Camera Again: Hero4 Session Review. It’s not GoPro’s best-quality camera, but it’s simple to use and the size of an ice cube; $400 sticker shock

Best video camera for under $6000

Pocket Cinema Camera 6K Captures Motion and Vision of the Tiniest World

TOP 10 VIDEO CAMERAS OF 2022

This is revolutionary: The world’s first liquid lens lightfield camera

The coming revolution in holographic video technology has been given a boost with the unique approach of Spanish start-up Wooptix. It has presented the first single lens light field camera capable of capturing light field video in real time and it does so using a liquid lens. The camera is called SEBI and incorporates a hardware controlled liquid lens capable of capturing the same image at multiple depth-of-field settings. SEBI is also able to generate a depth map in real-time whilst recording. By using the depth map users can refocus at will within the scene. Blur and focus areas can be modified by controlling the depth of field. Alternatively, an ‘all in focus’ image can be displayed. The company says its tech could have multiple applications including in film and TV.

Blackmagic – Pocket Cinema Camera 4K

Next generation 4K camera with dual native ISO up to 25,600, full 4/3 HDR sensor, 13 stops of dynamic range, ProRes or RAW recording to internal SD/UHS-II and CFast cards, as well as a USB‑C expansion port for recording to external drives and more.

Roland’s New iOS App Turns A Bunch Of iPhones Into A Multi-Camera TV Studio

One thing that sets your home movies apart from what you see on TV and in the movies is that professionals film a scene from multiple angles to add drama and complexity to the final edit. It’s an extra step that can add a lot of impact, and with Roland’s new 4XCamera Maker app you can take advantage of all your friends’ iPhones instead of renting or buying multiple cameras for a shoot.

China’s new ‘super camera’ can instantly pinpoint specific targets among tens of thousands of people

Scientists have unveiled a 500 megapixel cloud camera system in China that they say is capable of capturing the facial details of each individual in a crowd of tens of thousands of people, raising fears facial recognition monitoring could soon reach a new level.

Projection Mapping – through the lenses: audi a3 sportback behind the scene

Bret Price – Aerial Photography Specialist

The Surprisingly Fascinating World of Frame Rates

Sedae

The Changing Shape of Cinema: The History of Aspect Ratio

SpaceX strapped a GoPro inside a Falcon 9 rocket and captured this spectacular footage of Earth.

INFRARED CINEMATOGRAPHY

‘BRINDABELLAS | edge of light’ features the sky and landscapes of the Canberra region of Australia – in particular the Brindabella Ranges – captured in monochromatic (near) infrared. This feature-length film (140+ minutes in total) focuses on the interplay of mountain light, air and water as these elements are transformed across the seasons – from clouds to mist, rain and snow – then frost and ice – and onto creeks and rivers. It explores both the wider montane vistas of the Brindabellas and the more intimate details of the natural flows that are created by these mountains and, in turn, shape the very landscapes they arise from.

40 Incredible Examples Of Infrared Photography Because everyday objects reflect infrared in proportions that differ sharply from that of visible light, the tonal relationships are wildly unexpected. Such near-infrared techniques used in photography give subjects an exotic, antique look. Green vegetation becomes white, whereas human skin becomes pale and ghostly. The resulting images look alien.

Exploring Infrared Cinematography opens up a whole new spectrum of light not visible to the unaided eye. This has the potential to give otherwise ordinary scenes a surreal and dream-like appearance. In this article, we explore several of the unique applications and technical hurdles.

How to Interpret Common False Color Images Though there are many possible combinations of wavelength bands, the Earth Observatory typically selects one of four combinations based on the event or feature we want to illustrate. For instance, floods are best viewed in shortwave infrared, near infrared, and green light because muddy water blends with brown land in a natural color image. Shortwave infrared light highlights the difference between clouds, ice, and snow, all of which are white in visible light.

CAPTURING REALITY

Photogrammetry has come a long way in the past 5 years, and Capturing Reality is enabling artists around the world not only to save time and money but also to get out from behind the screen and travel to locations around the world to capture everything from entire architectural elements to forests and landmarks across the planet. Once reserved for a few studios with the technology to do accurate scans, Capturing Reality puts the tools of accuracy and speed in your hands to capture and replicate real-life assets with a price tag that makes sense.

Timothy Hanson is a VFX supervisor with nearly 20 years’ experience on projects like Avengers: Age of Ultron, Fast and the Furious 8 and The Walking Dead, to name a few. He is currently on Marvel’s Cloak and Dagger at Zoic Studios. Tim used RC for photogrammetry.

Importing of images from DSLR and drone photography, setting up the initial solve, how to use control points to get the drone images and the DSLR to solve until there is only one master component remaining, meshing process and cleanup of geo, generating UV’s.

MOTION CAPTURE

BREKEL Tools for Markerless Motion Capture

FACESHIFT STUDIO is a facial motion capture software solution which revolutionizes facial animation, making it possible at every desk. The software analyzes the facial movements of an actor and describes them as a mixture of basic expressions, plus head orientation, and eye gaze. This description is then used to animate virtual characters for use in any situation where facial animation is required, such as movie and game production.

VICON Global leader in Motion Capture Cameras, software and Mo-cap systems for the VFX, life science … Vicon technology used to create groundbreaking AAA game …

Motion Capture Tutorial (Xbox Kinect)

Method Studios Dance Up a Storm for Japan’s DoCLASSE

Fashion retailer DoCLASSE in Tokyo wanted to launch their new thermal jacket – ‘Magical Thermo’ – that is as warm as down but keeps a slim stylish silhouette. For the brand’s first commercial, the company chose Method Studios’ design team in New York to create a fun lively spot that would strike a chord with its target market, either at DoCLASSE’s shops across Japan or online. Motion capture data from various dance moves served as the base animation for the characters, and then Method’s artists cleaned up the data and simulated the cloth and fur. With the post-production compositing and animation in mind, Jon directed the model through the live action elements on a green screen stage in Tokyo.

Adventure of a Lifetime – behind the scenes on the set of Coldplay’s new video

Guardian photojournalist Sarah Lee was granted exclusive access to the set of Coldplay’s groundbreaking, Mat Whitecross-directed music video at the Imaginarium, a digital motion capture studio set up by Andy Serkis and Jonathan Cavendish in London

The world’s largest and most advanced volumetric video capture and creation facility: Intel Studios

Located in the heart of the entertainment industry in Los Angeles, Intel Studios features the world’s largest volumetric video stage and a comprehensive post-production and control facility powered by the latest technology. Intel has spent over a year building out the 25,000 square-foot studio with 10,000 square feet dedicated just for video capture in real time. To house all the data generated from shooting, there are 10 petabytes of local storage. With this process you can make 360-degree real-time video and choose exactly the perfect angle and picture frame you want to shoot.

CAMERAS

LinkedIn Discussion: 2.66:1 VS 2.35:1

I am working on a movie now, and the DOP chooses to shoot with Alexa XT PLUS and Alexa Mini with anamorphic lenses, the setting is 2.66:1. However, our distributor would like to release the movie in 2.35:1. Our DOP says we can just crop the left and right sides of the picture to fit in. I am a bit confused. Why not just drop the anamorphic lenses, and shoot in 2.40:1? Is the image way better using anamorphic lenses? Hope to hear your inputs.

Elements of Cinematography: Camera

EIGHTEEN-PERCENT GREY

Is when equal amounts of white and black paint are mixed together.

LIGHT METERS

Reflected and incident

H and D CURVES

Toe and shoulder

Highlights, middle tones and shadows.

Contrast is the tonal difference between the highlights and shadow areas. It is dependent on the amount of exposure of the shadows as well as development.

Density is the difference between the amount of light striking the film and the amount of light that actually passes through the film or how much transmitted light passes through the film. The differences in the density determine its contrast.

Each zone receives either twice or half the light of the zone before or after it.

Film is 24 fps and video is 25 or 30 fps. Film cameras can be overcranked to higher frame rates so that the images appear slower when played back at normal frame rates, or undercranked so that the footage appears faster when played back. At 24 fps the shutter exposure is 1/48th of a second; at half the speed, 12 fps, the exposure is increased to 1/24th of a second; doubled, at 48 fps, the exposure is reduced to 1/96th of a second.
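The exposure figures above follow from the shutter angle and the frame rate: exposure time = (shutter angle / 360) / frames per second. The sketch below assumes the 180º rotating shutter typical of movie cameras and a 24 fps playback rate; the function names are hypothetical.

```python
from fractions import Fraction


def exposure_time(fps, shutter_angle=180):
    """Exposure per frame in seconds for a rotating shutter of the given angle."""
    return Fraction(shutter_angle, 360) / fps


def playback_speed(shooting_fps, playback_fps=24):
    """Apparent speed of the action when the footage is played back."""
    return Fraction(playback_fps, shooting_fps)


for fps in (12, 24, 48):
    print(f"{fps:2d} fps: exposure {exposure_time(fps)} s, "
          f"plays back at {playback_speed(fps)}x speed")
```

Overcranking to 48 fps halves both the exposure time and the apparent speed on playback, while undercranking to 12 fps doubles both.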

Film or light sensor: where the light-sensitive material of the camera is located.

Lens: the optical part of the camera, which focuses rays of light onto a recording medium such as film. The film runs through a film gate, which consists of the pressure plate and the aperture plate containing the film aperture; in front of the aperture is the shutter. A camera lens contains multiple lens elements for focusing the light onto the plane at the back of the camera, and an iris controlling the size of the opening, or aperture setting, which controls how much light gets into the camera. The film aperture defines the shape of the image on the film.

Shutter: controls how long light enters the camera through the speed at which it opens and closes; it sets the duration of the exposure, not the intensity of the light. Increasing the shutter speed decreases the exposure, and decreasing the shutter speed increases the exposure. Shutter speeds, for example 1/8th of a second or 1/12,000th of a second, also control how the motion of objects is recorded: at slow shutter speeds, objects that move quickly relative to the shutter speed blur. Shutters are of the leaf or focal plane type, and movie cameras use a rotating circular shutter with a 180º cut-out.

Aperture or diaphragm: controls the amount of light entering the lens through a variable opening. Changing the size of this opening changes the amount of light that passes through, so it controls the intensity or brightness of the light. The f-stop, or relative aperture, is the number that equals the focal length of a lens divided by the diameter of the effective aperture. From f/8 to f/11 the light is halved, and from f/11 to f/8 the light is doubled; f/2.8 is faster than f/3.5. Each such halving or doubling of exposure represents a “stop”. The aperture also controls the depth of field.
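The f-stop relationship can be checked numerically. This is a minimal sketch with illustrative function names; it assumes only that the light admitted scales with the area of the opening, which is proportional to 1/N² for f-number N.

```python
import math


def f_number(focal_length_mm: float, aperture_diameter_mm: float) -> float:
    """Relative aperture: focal length divided by effective aperture diameter."""
    return focal_length_mm / aperture_diameter_mm


def relative_light(n_from: float, n_to: float) -> float:
    """Light admitted at f-number n_to relative to n_from (area scales with 1/N^2)."""
    return (n_from / n_to) ** 2


def stops_between(n_from: float, n_to: float) -> float:
    """Exposure change in stops; positive means less light reaches the film."""
    return 2 * math.log2(n_to / n_from)


print(f_number(50, 25))                 # a 50 mm lens with a 25 mm opening
print(round(relative_light(8, 11), 2))  # fraction of light left going from f/8 to f/11
print(round(stops_between(8, 11), 2))   # the same change expressed in stops
```

The result for f/8 to f/11 is close to one half rather than exactly one half because the marked f-numbers are rounded; an exact one-stop step from 8 is 8 × √2 ≈ 11.3.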

Critical Plane Focus is where the actual focus of the lens falls.

Depth of field is the area or region that has the sharpest focus; the near plane and the far plane bracket the depth of field. It is not the same as the Critical Plane Focus, which is where the actual focus of the lens falls. The depth of field lies before and after the critical plane of focus, and it compresses or expands the region of sharpness as dictated by the aperture opening. Wide apertures (smaller f-stop numbers) have a narrow depth of field because the far and near planes of focus are closer to each other. With small apertures (larger f-stop numbers) the depth of field increases, with the near and far planes moving further apart, resulting in more areas of the scene in sharper focus.
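The effect of the aperture on the near and far planes can be sketched with the standard thin-lens depth of field approximation. The circle of confusion value (0.03 mm, a figure often quoted for 35 mm full-frame) and the example lens and distances are assumptions, not values from these notes.

```python
import math


def depth_of_field(focal_mm: float, f_number: float, subject_mm: float, coc_mm: float = 0.03):
    """Return (near, far) limits of acceptable sharpness in mm.

    Thin-lens approximation; coc_mm is the assumed circle of confusion.
    """
    k = f_number * coc_mm * (subject_mm - focal_mm)
    near = subject_mm * focal_mm**2 / (focal_mm**2 + k)
    denom = focal_mm**2 - k
    # Beyond the hyperfocal distance the far limit extends to infinity.
    far = math.inf if denom <= 0 else subject_mm * focal_mm**2 / denom
    return near, far


# Hypothetical setup: 50 mm lens focused at 3 m, wide open versus stopped down.
for n in (2, 11):
    near, far = depth_of_field(50, n, 3000)
    print(f"f/{n}: sharp from {near / 1000:.2f} m to {far / 1000:.2f} m")
```

With these assumed values the near and far planes sit much closer together at f/2 than at f/11, which matches the behaviour described above.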

Focus control mechanism: controls the distance from the lens to the film by moving the lens assembly either away from the film plane or towards it.

The focal length is the distance between the centre of the primary lens and the film. A shorter (wider) focal length, about 24mm or less, gives a more pronounced perspective and a larger sense of depth, with more of the scene visible in the image. A longer focal length, about 50mm or greater, produces images that exhibit a flatter look, with less sense of depth and less of the scene visible. Prime lenses have a fixed focal length; variable or zoom lenses have a focal length that can change.

Zooming in can look like walking towards the subject with a prime lens, but it is a two-dimensional move that simply magnifies the image. A dolly move is a three-dimensional move that displaces objects in the frame. Zooming and dollying in produce different relationships between foreground and background elements; they have different perspectives. Where depth is concerned, parallax dictates that moving a camera forward (i.e. a tracking shot) changes closer objects more prominently than those further away from view; zooming in, however, effectively works as a crop, affecting the entire image in equal measure (note the size of the vinyl record in the images below). Check the relationship between the foreground and background: if they do not move independently then the shot is probably zoomed.
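The difference between the two moves can be illustrated with a simple pinhole projection, where image size is focal length times object size divided by distance. The scene below (two equally sized objects at 2 m and 10 m, a 25 mm lens) is a made-up example and the function names are illustrative.

```python
def projected_size(object_size_m: float, distance_m: float, focal_length_mm: float) -> float:
    """Image size in mm on the film plane under a simple pinhole model."""
    return focal_length_mm * object_size_m / distance_m


def foreground_background_ratio(focal_length_mm: float, fg_distance_m: float,
                                bg_distance_m: float, dolly_in_m: float = 0.0) -> float:
    """Ratio of image sizes of two equally sized objects after an optional dolly in."""
    fg = projected_size(1.0, fg_distance_m - dolly_in_m, focal_length_mm)
    bg = projected_size(1.0, bg_distance_m - dolly_in_m, focal_length_mm)
    return fg / bg


start = foreground_background_ratio(25, 2.0, 10.0)
zoomed = foreground_background_ratio(50, 2.0, 10.0)                   # zoom from 25 mm to 50 mm
dollied = foreground_background_ratio(25, 2.0, 10.0, dolly_in_m=1.0)  # move 1 m closer

print(start, zoomed, dollied)
```

Zooming leaves the foreground-to-background ratio unchanged because everything is magnified equally, while dollying in makes the foreground grow faster than the background.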

When an image is in focus the lens is bending the light so that the beams of light converge exactly at the film plane otherwise it will be blurry or out of focus. The light from closer objects enters the lens at different angles than light from distant objects meaning it is possible to have some objects in focus and some out of focus. Having a sharp photograph is dependent on something called “depth of field”. To put it simply, depth of field is the amount of the photographic scene that is in focus, or sharp. It can be greater, with much of the scene in focus, or shallow, where very little is in focus. Depth of field changes with the angle of the camera’s lens, the distance from the lens to the objects in the image, and the camera’s settings. It is especially dependent on the aperture. (Remember aperture is the size of the opening of a camera’s lens, and it controls the amount of light entering into the lens at one time.)

Nodal Point or optical centre is at the centre of the lens. When a camera moves or translates, the resulting image will exhibit parallax; when the camera pans or rotates around its nodal point, there is no parallax. If the pivot point is the tripod, it is not the optical centre of the lens. 3D cameras are mathematically perfect cameras, and they need to reflect the small amount of parallax that this offset produces. Measure the focal distances and distances to the subject from the nodal point of the lens.

Film Backs and CCDs: the film aperture is directly in front of the film, and the size and shape of this opening dictate the size and shape, or format, of the image. The film back and focal length together help define the field of view, which represents what section of the scene will be visible with a particular lens and film aperture configuration. The Angle of View measures how much of the 360º circle is visible and is expressed in degrees. A longer lens has its lens centre further away from the film back, giving a narrower angle of view.
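The relationship between film back, focal length and angle of view can be written as angle = 2 × atan(film back width / (2 × focal length)). The sketch below assumes a rectilinear lens focused at infinity and a 36 mm wide film back as an example; the function name is illustrative.

```python
import math


def angle_of_view_deg(film_back_mm: float, focal_length_mm: float) -> float:
    """Angle of view in degrees across one dimension of the film back."""
    return math.degrees(2 * math.atan(film_back_mm / (2 * focal_length_mm)))


# Horizontal angle of view on an assumed 36 mm wide film back.
for focal in (18, 24, 50, 100):
    print(f"{focal} mm lens: {angle_of_view_deg(36, focal):.1f} degrees")
```

As the focal length increases the angle narrows, so less of the scene falls inside the frame.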

Digital cameras record incoming light as an electrical signal on a CCD, whose shape defines the shape of the image. Smaller digital sensors affect how focal lengths are measured and change the relationship between the lens and the sensor, so different lenses are required to create the same image on the digital film back.
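To see why a smaller sensor needs a different lens for the same image, hold the angle of view constant: the focal length then scales with the sensor width. This is a minimal sketch; the sensor widths are hypothetical round numbers, not the dimensions of any particular camera.

```python
import math


def matching_focal_length(focal_length_mm: float, source_width_mm: float,
                          target_width_mm: float) -> float:
    """Focal length giving the same horizontal angle of view on a different sensor width."""
    return focal_length_mm * target_width_mm / source_width_mm


def angle_of_view_deg(sensor_width_mm: float, focal_length_mm: float) -> float:
    """Horizontal angle of view in degrees for a rectilinear lens focused at infinity."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


# Hypothetical example: a 50 mm lens on a 36 mm wide back versus an 18 mm wide sensor.
equivalent = matching_focal_length(50, 36, 18)
print(equivalent, angle_of_view_deg(36, 50), angle_of_view_deg(18, equivalent))
```

In this example the sensor is half as wide, so a lens of half the focal length frames the same section of the scene.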

Lens Distortion causes images to become stretched or compressed near the edges of the frame: compressed inwards is barrel distortion, stretched outwards is pincushion distortion. Barrel distortion is a side effect of the round glass elements within a lens, which bend light more near the edges of the lens than near the centre of the lens. The smaller the lens diameter gets, the more drastic the effect of barrel distortion becomes. It occurs more with wide-angle and zoom lenses.
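A common way to model this in software is a single-coefficient radial distortion term, where each point is scaled by 1 + k1·r² and r is its distance from the image centre. This is a simplified sketch rather than a full lens model; the coefficient values are arbitrary, and the sign convention (negative k1 for barrel, positive for pincushion) is the one assumed here.

```python
def distort(x: float, y: float, k1: float) -> tuple[float, float]:
    """Apply one-term radial distortion to a point in normalised image coordinates.

    (0, 0) is the image centre. With this convention k1 < 0 pulls points
    inwards (barrel) and k1 > 0 pushes them outwards (pincushion).
    """
    r_squared = x * x + y * y
    scale = 1 + k1 * r_squared
    return x * scale, y * scale


corner = (1.0, 1.0)
print("barrel:    ", distort(*corner, k1=-0.1))
print("pincushion:", distort(*corner, k1=0.1))
print("centre:    ", distort(0.0, 0.0, k1=-0.1))
```

Points near the centre barely move, while points near the edges of the frame are displaced the most, which is why the effect is most visible at the edges.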

Making of Mad Max: Fury Road from ACS Victoria with John Seale ACS ASC and David Burr ACS

THE HOBBIT, Production Diary 4

VR PRODUCTION

Staples VR is a complete VR production studio providing both end to end solutions and consultation services for a variety of clients and industry leaders. We operate with the newest and highest quality equipment for image capture and 360 degree VR capture such as the Jaunt, Nokia OZO, Red, Arri, Blackmagic, Z-Cam, Sony, Panasonic and custom built solutions such as our world-first fireproof VR capture system, and our underwater and aerial solutions. We specialise in dynamic camera movement, from aerial drone, cable cam and crane to dolly and underwater, enabling us to add that something special to your production. We pride ourselves on our ambisonic audio capture and offer full spatial post production mixing and sound design.

JAUNT ONE VR CAMERA

Jaunt ONE is a professional-grade camera system specifically designed for capturing high-quality stereoscopic 360º cinematic virtual reality experiences. Built from the ground up with visionary VR creators in mind, Jaunt ONE has proven itself in the hands of the world’s top filmmakers, studios, and networks.

Jaunt ONE offers industry-leading stereoscopic capture quality and a suite of tools for camera control and data management. It features 24 camera modules with frame-sync, global shutter, 10-stops of dynamic range, and custom 130º FOV lenses with a fixed f/2.9 aperture. Additional features include support for 120 frames per second capture and a live viewfinder. Jaunt ONE and its complementary workflow present content creators with an end-to-end solution for filming high quality cinematic virtual reality content.

Gear — Jaunt ONE VR Camera David Alexander Willis

First Look: Jaunt’s ‘ONE’ VR Light-Field Camera with Newly Revealed Specs

Introducing Jaunt ONE – Cinematic VR Camera – Jaunt One Demo Sydney by Staples VR

The Jaunt One is a professional grade, stereoscopic cinematic VR camera built from the ground up and designed with visionary VR creators in mind. Staples VR will put the camera through its paces and explain the good, the bad and the amazing when it comes to the system. They offer client support with visualisation, post production, timelines and costs, testing, consulting and access to equipment, and do well in collaboration.

Staples VR lead the way when it comes to live action 360 video and your content creation. What can you do with the technology? They have worked with clients to make high end quality VR including live action capture, gamified interactivity, photogrammetry, lidar scanning, installations, training, equipment resource, and live streaming VR that can be mixed in with prerecorded material and swapped between different camera systems. They have built a huge range of skills and techniques to get the most out of your capture systems and post workflow for the entertainment, medical, architectural, OH & S, forensic training, military training, building installations, airlines, factories, education and telecommunication industries, to name a few, and are at the forefront of this rapidly expanding industry.

EXPERIENCE A REAL HOUSE FIRE THROUGH 360º VIDEO & VIRTUAL REALITY

New Zealand Fire Service today launched a world-first initiative – a 360 degree and virtual reality (VR) experience – Escape My House available online now. For the first time ever, the public can experience a real house fire first-hand and, along the way, learn why they need an escape plan.

LYTRO CINEMA

Imagine working on footage in visual effects and having the ability to change the depth of field or focal point of a scene on the fly. Change the frame rate and have a physically accurate shutter angle that would have been appropriate for the rate. Reposition the camera in the scene. Drop 3D objects in your scene and have them properly occluded by the live action content. Pull mattes by setting a near depth and a far depth and extracting an object. Effectively, think of having many of the benefits of deep compositing for live action scenes.

Proof of Concept (PoC) is a realization of a certain method or idea in order to demonstrate its feasibility or a demonstration in principle with the aim of verifying that some concept or theory has practical potential. A proof of concept is usually small and may or may not be complete.

Photogrammetry is the science of making measurements from photographs. The input to photogrammetry is photographs, and the output is typically a map, a drawing, a measurement, or a 3D model of some real-world object or scene. Many of the maps we use today are created with photogrammetry and photographs taken from aircraft.

There are different camera systems, with camera modules getting smaller; some examples along the way are:

- optic cluster based systems where VR started, the GoPro rigs, mini bundle.

- cluster based, panoptic camera systems – multicamera face tracking system suitable for large wired camera networks

- mirror rigs with parabolic mirrors, larger cameras improving quality, expensive and difficult to use

- light field systems such as Lytro recording refractions and reflections of the entire area of space. Light field camera, also known as plenoptic camera, captures information about the light field emanating from a scene; that is, the intensity of light in a scene, and also the direction that the light rays are traveling in space. This contrasts with a conventional camera, which records only light intensity. Lytro is building the world’s most powerful Light Field imaging platform enabling artists, scientists and innovators to pursue their goals with an unprecedented level of freedom and control. This revolutionary technology will unlock new opportunities for photography, cinematography, mixed reality, scientific and industrial applications.

- volumetric systems capturing an area; volumetric video needs large storage and at the moment has no suitable way of being played back.

Volumetric capture is the swift kick in the butt VR so desperately needs

Why Volumetric VR Is The Real Future Of Virtual Reality

The Jaunt ONE camera has 24 independent camera systems, with a resolution of 8K and a minimum of 4K in a stereoscopic field of view. It uses every second camera module to give a slightly offset, parallax view for the left and right eye, giving a sense of depth; the result is only playable through headsets. It can shoot at different frame rates, up to 120 for slow motion, which can only output 4K. You can preview the camera system and change the ISO values; it runs on 12 volts, and the workflow includes changing batteries and cameras. The parallax information becomes your depth map across the full 360 arc, for incorporating vfx elements.
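How parallax turns into depth can be sketched with the textbook relation for a rectified stereo pair: depth = focal length × baseline / disparity. This is not the Jaunt ONE's actual pipeline; the focal length in pixels, the baseline and the disparities below are hypothetical values for illustration.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Distance to a point in metres from its left/right image offset in pixels.

    Assumes two parallel, rectified cameras separated by baseline_m.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero disparity means the point is at infinity")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical rig: 1000 px focal length, 64 mm between the two modules.
for disparity in (64, 32, 8):
    print(f"{disparity} px offset: {depth_from_disparity(1000, 0.064, disparity):.1f} m away")
```

Nearby objects shift a lot between the two views and distant objects shift very little, so the per-pixel shift can be converted into a depth map.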

You can get artefacts in the stitch line when something is closer to or further away from the camera, so look for the ability to stitch together with only one stitch line or as few as possible. The optical flow form of stitching analyses every pixel of the frame and compares it to the frames before and after to track the movement, to know what is moving and what is staying static. It is not a perfect system. One issue is the algorithms not being able to recognise pixels from one camera to the next when things get too close to the lenses, within the minimum parallax distance, where two lenses cannot see the same material. The other is motion blur, from things going too quickly through the frame. When the camera cannot see the image in its entirety it is harder to get the stitch points. Shooting at a higher frame rate will give clearer pictures even if playing back at 30.

The camera needs to be level; the image is stereoscopic around the middle and tapers off at the top and bottom. Assume the audience is on the same eyeline as the camera; if the audience choose to look at the skyline then you cannot control the angle they are looking at that object from, and it will not play back correctly. Depth is created through the left and right eye, and our brain focuses somewhere in the middle.

Some issues when considering which camera to use: the safe distance from the camera (2.5 meters); images that are not moving too fast; keeping camera movement to a minimum; and avoiding haloing, artifacting, morphing, repeating textures, semi-transparent materials and the moiré effect (large-scale interference patterns that can be produced when an opaque ruled pattern with transparent gaps is overlaid on another similar pattern. For the moiré interference pattern to appear, the two patterns must not be completely identical: they must be displaced, rotated, etc., or have different but similar pitch). Use 8 modules out of the camera; it does not like a single orbit.

The system pulls up every camera module so you can see the placement and exposure and correct accordingly. Each camera has up to 10 and 1/2 stops, and the system can go up to 18 stops of dynamic range in a gradient across the system. In situations such as the camera being placed between a window and the action, you can expose correctly for skin tone and then for the window and the outside exposure, and the cameras in between will adjust incrementally, so the system works together accurately while doing multiple exposures.

RESOURCES

Miller: the latest news and promotions from Miller Camera Support Equipment.

BOOKS

Cinematography, Film Craft Series by Mike Goodridge and Tim Grierson

Framed Ink: Drawing and Composition for Visual Storytellers by Marcos Mateu-Mestre

AERIAL PHOTOGRAPHY

Near Map delivers high-resolution aerial imagery direct to your device, within days of capture.

Professional Aerial Photography, or PAP, can perform all of your photographic needs, while we specialize in aerial photography using our state of the art drones.

Skyshots Low Level Aerial Photography Advantages

GREEN SCREEN

EP 1: MY PATH TO HOLLYWOOD – CINEMATOGRAPHY ONE ON ONE WITH STEVEN FIERBERG, ASC

In episode 1 of this 5 part series, award-winning cinematographer Steven Fierberg, ASC shares some personal history and insight into his journey from Detroit to Hollywood. Fierberg is best known for his work on the TV series “Entourage” and “The Affair.” His film credits include “Secretary,” “Love and Other Drugs,” and more.

One on One with Steven Fierberg, ASC – Episodes 1&2

“I always find I’m always excited! Everything’s different. Every day is different. Every set is different. The light is always different. I think one of the reasons I also wanted to go into this field was I felt like I would never know it – I would always be learning. There’s always something.” – Steven Fierberg, ASC

If you have only one takeaway from Light & Shadow, let it be a reminder that cinematography, like all art forms, is something that comes from within. The technical can be taught, and anyone can pick up a camera and learn how to operate it. To capture an emotion and move an audience through visual storytelling is the real challenge. The big question is this: can it be learned or are you born with it?

Capturing Digital Images (The Bayer Filter) – Computerphile

The ACS Victoria is proud to present this online Q&A event with esteemed cinematographer Bonnie Elliot ACS, who has recently finished production on feature documentary ‘Freeman’ and mini series ‘Stateless.’

POOR MAN’S PROCESS Filmmaking Technique – Excellent Demonstration

Vimeo Staff Picks: Omote/Real-time Face Tracking and Projection Mapping

Lighting with one light.

Information about filming shots, cameras, lighting and composition.

The Changing Shape of Cinema: The History of Aspect Ratio

Robert Rodriguez – Ten Minute Film School

Cinematography with Richard Michalak Trailer

Cinematography Learn from a Master

Steady Feathers: LG G2 – The most extreme camera ever “Chicken”

Vertical Video Syndrome – A PSA