Metaverse: Open for business?

Virtual Production Primer | A look at the key terms and concepts involved in virtual production

XR

What Is XR, and How Is It Radically Transforming Industries?

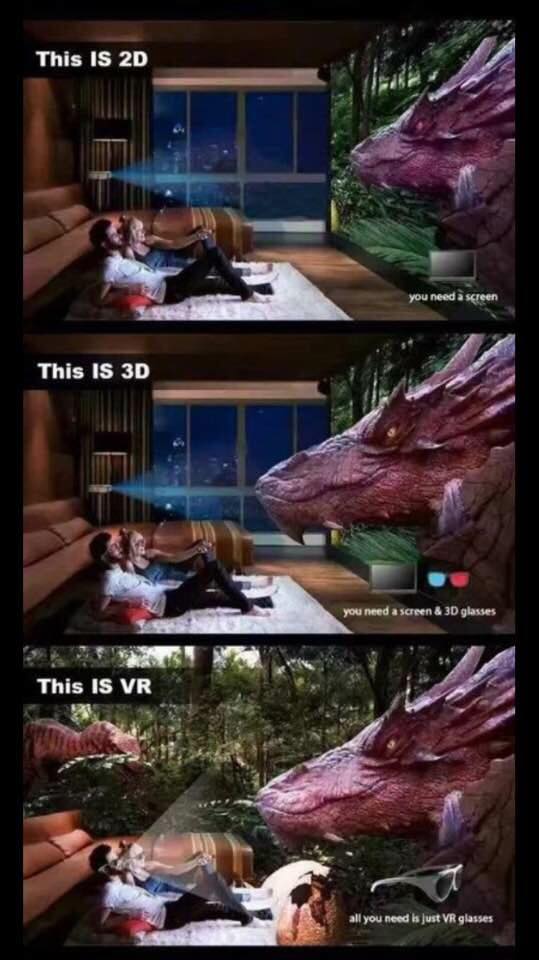

VR

VR VIRTUAL REALITY CINEMA MELBOURNE

-Sessions are generally 25-30 mins and you will see 4 shorts.

-Screens Local & International VR Film Makers Works.

-Runs collaboratively with Maggie Wren’s Art Space.

-Group & Private Bookings welcome.

-Special Events where we come to your function are available.

-Talk to us about hire and VR productions & solutions.

Info@virtualrealitycinema.com.au

Oculus Rift Vs. HTC Vive Vs. PlayStation VR

A Russian robotics firm is offering $US200,000 ($A280,000) to anyone who’s willing to forfeit the rights to their face and voice for use in its latest robot development.

Apple said to have ruled out a metaverse for its mixed reality headset

Who Will Be the First to Develop a True Hologram?

The Soloist VR: death-defying climbs shot with Insta360 Titan

Top trends to come out of FMX 2022

What Is Randonautica? The Bizarre App Is The Latest Craze On TikTok

As we’ve touched on before, de-aging is becoming more widely used within the VFX industry. With more than five films using the technique last year alone, one that stood out amongst the many was Weta Digital’s Gemini Man.

Their goal was to “finally cross the uncanny valley and bring to screen a totally emotive digital human”, Guy Williams, VFX Supervisor for Gemini Man, tells us. While we have seen digital reincarnations in films before, Junior, Will Smith’s digital counterpart, is one of a kind, as up until now no studio has attempted to create a wholly digital human cast in a leading role.

PIXVANA

Meet Pixvana Purpose built to streamline how you share and present your content in VR. Pixvana is a Seattle software startup building a video creation and delivery platform for the emerging mediums of virtual, augmented, and mixed reality (XR).

BEHERE 360 Panoramas & 360 Degree

krpano Panorama Viewer

WHAT IS VIRTUAL PRODUCTION? Join us as we dive into what we learned from the first podcast episode of the new series from Unreal Engine. Ben Grossman calls today’s filmmaking the “real-time frontier,” this new age of film engineering where we’re no longer limited by reality or timelines. Sounds pretty trippy, right?

Solomon Rogers — Founder/CEO, REWIND

No one knows the virtual world like Solomon Rogers. From an academic background, Sol decided to found his own company, REWIND, to specialize in creating immersive and meaningful VR experiences for an impressive array of companies, including HBO, Lyft, Sony, and Microsoft.

Making Virtual Reality and Augmented Reality a Part of Remote Design Workflows

Maybe you’ve tried virtual reality technology that makes video game experiences more entertaining and impressive, or have seen how many retailers are using augmented reality to enhance online shopping experiences like furniture selection. But did you know that virtual reality (VR) and augmented reality (AR) are evolving the workflows of engineers and designers with immersive experiences that help them do their jobs faster and more effectively?

Storyboarding in Virtual Reality

Storyboards are commonly used in film, web and game design as a quick, effective way to communicate spatial position, sequence, motion and interaction when you’re trying to pre-visualize a scene. They’ve also been used to frame a scene, but what do you do when there’s no frame? Consider drawing a panorama whose ends meet behind the viewer or point of focus.

‘Absolutely extraordinary’: The VR moment that astonished an Aussie movie legend

Beresford turns his head, looking down at the keys on the piano, and around at the other bar patrons ‘behind’ him, as the tuxedoed tenor warbles about the joys of booze and proffers a glass of champagne.

The main difference between game designers and game programmers is that game designers work on the creative side while game programmers develop code and address the technical aspects of video games. Both designers and programmers are in high demand.

How VR Training in the Workplace Is Transforming Learning on the Job

In this digital world, creation often starts on computers. But fashioning, shaping, and molding raw materials into a finished piece of hardware or a consumer product requires a dedicated worker’s skill. The same goes for erecting a building—some things simply can’t be done online.

Making Virtual Production a reality for independent films

The future of independent film production in a post-covid-19 world is taking “virtual” shape at Pace Pictures in Hollywood. The boutique facility recently used groundbreaking virtual production technology to produce Match, a feature-length romantic comedy from director Sean McGinly (Silver Lake, The Great Buck Howard). Actors were shot on a green screen stage and placed into virtual three-dimensional environments while incorporating complex camera movement and lighting. Most remarkably, says the company, “principal photography was accomplished in just five days and at a fraction of the cost of most conventional film productions.”

Virtual Production: Compositing will Survive

Once again Industrial Light and Magic rocks the world of visual effects with the production of The Mandalorian using the new virtual production technology. It has sent shock waves through the VFX community and worries many of my fellow compositors about the future of our jobs. After all, shots that would have normally been done as a green screen are now captured in camera without compositing. Over 50 percent of The Mandalorian Season 1 was filmed using this new methodology and this scares the pudding out of my fellow pixel practitioners. As of this writing there are 10 virtual sets in production with roughly another 100 under way all around the world. Is this the end of our careers? Are we soon to be as obsolete as CRTs? Will industry standard visual effects tools like Nuke, Resolve, Silhouette or Mocha Pro go the way of the Dodo? The short answer is “no!” The long answer is “hell no!”

Thin Ice VR experience to premiere in Adelaide, post COVID-19 lockdown

Three years in the making, Thin Ice VR is a groundbreaking virtual reality production using world-first technology to retrace Sir Ernest Shackleton’s famous 1914 Trans-Antarctic expedition, highlighting the devastating impact of climate change over the past 100 years – and it will be airing later in 2021!

JUSTIN DENTON — ARTIST, WRITER AND DIRECTOR, BLUR STUDIO

It’s been five years since VR director Justin Denton last joined Chris on the CG Garage Podcast, and in that time he has truly pushed the boundaries of the medium. He’s produced jaw-dropping tie-in experiences for Ghost in the Shell, Mr. Robot and Legion. And he’s indulged his passion for Dickens’ A Christmas Carol via Chained: A Victorian Nightmare, which uses techniques from games and immersive theater to deliver a unique, personalized experience. Justin is an authority on how the VR industry has shifted and settled, and its uses as a tool and a medium. Meanwhile, in his new role as Staff Director at Blur Studio, he’s taking VR techniques into filmmaking with his exhilarating PUBG trailer. He also details his contribution to DD and Time Magazine’s project, The March, which takes participants into a VR recreation of Dr. Martin Luther King Jr.’s historic rally.

JENNIFER SEEVINCK

An electronic artist, researcher and lecturer in Interactive and Visual Design.

Panasonic at CES 2020: the world’s first HDR capable UHD VR eyeglasses

Panasonic Corporation partnered with Kopin Corporation to develop the world’s first High Dynamic Range (HDR) capable ultra-high definition (UHD) virtual reality (VR) eyeglasses, which boast a comfortable fit that makes users feel as if they were wearing eyeglasses. While conventional VR glasses with high-quality images and high sound quality provide users with highly immersive simulated experiences, these glasses tend to be big in size and require users to strap them to their head with a headband, which could cause wearer discomfort.

Google has shut down work on 3D painting app Tilt Brush, the latest in a string of VR disappointments

The tech giant acquired the firm behind Tilt Brush, Skillman & Hackett, for an undisclosed sum in 2015, praising its “innovative approach to 3D painting.” At one point, it hired Tilt Brush Artists in Residence.

How TIME Re-created the 1963 March on Washington in Virtual Reality

Tucked away in an office on a quiet Los Angeles street, past hallways chockablock with miniature props and movie posters, is a cavernous motion-capture studio. And in that studio is the National Mall in Washington, D.C., in 1963, on the day Martin Luther King Jr. delivered his “I Have a Dream” speech.

Exploring the Tomb of Nefertari: A 3D Walkthrough

Using 3ds Max, the walls of the once-forgotten tomb are explored in a 3D walk-through model, showcasing hieroglyphs, highlighting artifacts and examining history. Andrey discusses how he started his career in 3D modeling and what inspired him to create this marvelous and life-like piece.

Tech Insider – Fast Change 5G sets the stage for new AV opportunities, interaction

Alex Wallace, Verizon Media’s GM of news, entertainment, and studios, summed up the impact 5G is going to have on the M&E industry, especially when it comes to increasingly personalized DOOH/AV (digital out of home/audio video) marketing applications, when he said, “No one knows where 5G is going to take us, but we want to be at the forefront of it.”

This blog was first published as a part of a newsletter from The Shindler Perspective, Inc. in January 2017 following CES. Mr. Case’s appearance on 60 Minutes on March 17, 2019 discussing his Rest of the Rest/Rise of the Rest initiative prompts its republishing as a blog.

Briefly, AOL and other early companies in the build out of the internet were the first wave; Google, Facebook and others represented the second wave. The third wave is all about extending the tentacles of the internet into all manner of existing businesses, the “rest,” from manufacturing and service providers to the creation and distribution of content across the world, effectively completing the connectio

Microsoft shows off keynote speaker hologram speaking a new language

During this week’s Microsoft Inspire conference in Las Vegas, the company demonstrated a new HoloLens technology developed in collaboration with Azure AI services that produces a full-size hologram of an individual speaking another language. A few days into Microsoft’s Inspire conference, the company presented a new HoloLens technology during a keynote that produced a perfectly replicated hologram version of the anglophone keynote speaker delivering the presentation in Japanese. The speaker and subject of the hologram was Microsoft Corporate VP Julia White, an executive working with the Azure AI team. Before the presentation, White was scanned at the company’s Mixed Reality Studio so that a hologram of her — outfit and all — could be replicated in front of the Inspire audience.

Dejero supports world’s first human hologram over 5G

Musion 3D, Dejero and Hawthorn have joined forces to deliver a spectacular 5G live experience for Vodafone Romania. Dejero provided EnGo mobile transmitters and receivers to deliver video links from a studio in Bucharest, from which the hologram of a 10-year-old guitarist was beamed live to a stage over a Huawei router and Vodafone 5G link.

Google Researchers Develop Method for Capturing ‘Relightable’ Volumetric Video

As the demand for realistic volumetric video for AR experiences begins to grow (along with the available facilities and services for capturing it), researchers at Google have figured out how to improve upon the format.

Face Off The simulations are still clearly fake, but better versions are on the way. Deepfakes are manipulated and misleading videos that use deep learning technology to produce sophisticated doctored videos. Normally this involved splicing someone’s face onto an existing video, often making it seem like a celebrity or public figure did something that never happened.

Now the tools exist to do the same thing with someone’s entire body, like a digital puppeteer. That’s thanks to a new technique out of Heidelberg University that was recently published to Github and presented at September’s European Conference on Computer Vision — a step forward for deepfakes that has escaped mainstream scrutiny, but that could lead to the next generation of altered footage.

VIEW SHANGHAI with 24.9 BILLION PIXELS of QUANTUM TECHNOLOGY

This interactive view of Shanghai made with 24.9 billion pixels of quantum technology allows you to zoom in and out for amazing levels of detail and overview.

Microsoft’s partnership with Facebook Gaming could mean future VR support for Xbox

Earlier this week Microsoft revealed it was shutting down the Mixer streaming platform and partnering with Facebook Gaming instead. Could this potentially mean big things for Xbox VR support finally via a relationship with Facebook-owned Oculus?

In order to understand the significance of what this could mean for Xbox and for VR as a whole, it’s important to first look back and understand the past four years of broken promises and misleading marketing.

Westworld and The Walking Dead: VR experiences take you inside the TV series

Virtual Reality experiences based on movies and TV series are becoming common, and titles such as those created by Los Angeles company Survios take the experience to a whole new level.

Putting VR, Volumetric Video in Refresh Mode

The roadmaps are pretty clear, and the mass merchants are ready to push aggressively, even though folks are just slowly beginning to appreciate 4K … almost everywhere. But what’s around the corner, just over the hill? What will filmmaking look like in 5–10 years? At every film festival, there’s a corner of the lot roped off for the immersive future, VR (virtual reality). The mass entertainment market prophets keep telling us every year it’s going to be huge … in two years. Friends like Ted – a gaming industry expert – keep hollering, “It isn’t hot, sexy; won’t be and in fact it was stillborn.” Others, like Jon and Mark – who play endless hours of VR games (they’re testing the stuff), tell us it’s just getting better and better. Virtual pros don’t have much time to chat at film festivals; but at the IBC Future Zone, the cream of the crop will be able to focus on detailing what they have been doing to push the frontiers of creativity and technology to give us a dose of real reality.

Light Field Lab is making the stuff of sci-fi films a reality. As background, Light field images represent the mathematical model that defines the vectors of ray propagation. A light field capture system samples these rays of light to record a four dimensional representation of a given environment.
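One common way to picture that four-dimensional representation is the two-plane parameterization, in which each ray is indexed by the points where it crosses two parallel planes. The Python sketch below is illustrative only: the function name, the plane positions `z_uv` and `z_st`, and the choice of this particular parameterization are assumptions made for the example, not a description of Light Field Lab's system.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def ray_to_light_field_coords(
    origin: Vec3,
    direction: Vec3,
    z_uv: float = 0.0,  # hypothetical position of the first plane
    z_st: float = 1.0,  # hypothetical position of the second plane
) -> Tuple[float, float, float, float]:
    """Return (u, v, s, t): where the ray crosses planes z = z_uv and z = z_st."""
    ox, oy, oz = origin
    dx, dy, dz = direction
    if dz == 0:
        # A ray parallel to the planes never crosses them.
        raise ValueError("ray is parallel to the parameterization planes")
    # Distance along the ray (in units of `direction`) to each plane.
    a = (z_uv - oz) / dz
    b = (z_st - oz) / dz
    u, v = ox + a * dx, oy + a * dy
    s, t = ox + b * dx, oy + b * dy
    return (u, v, s, t)


# A ray starting at the origin and heading along (1, 2, 1).
coords = ray_to_light_field_coords((0.0, 0.0, 0.0), (1.0, 2.0, 1.0))
print(coords)
```

A captured light field can then be thought of as a table of radiance values keyed by these four numbers, which is why the representation is called four dimensional.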

THE VIRTUAL REALITY CINEMA 178 JOHNSTON STREET COLLINGWOOD, MELBOURNE 3066 AUSTRALIA

The Virtual Reality Cinema is a creative project aimed at showcasing the new wave of virtual reality works, pushing the boundaries of the medium and assisting experienced content creators to create VR works. The VR cinema space gives creators a place to screen their work and network with other VR creators. Virtual Reality is upon us; it’s a new frontier, a new medium. For the newcomers, VR is an immersive experience in which your head movements are tracked in a three-dimensional world, seen in a 360° perspective, thanks to mobile VR headsets. This cinema brings together Australia’s New Wave of VR Creators who are pushing these new boundaries.

Some food for thought:

- VR/AR opportunity

- capturing sound for VR and AR

- editing and compositing strategies for spherical video (360º VR video) algorithms

- designing spatial sound: adapting contemporary sound design practices for VR

Virtual sets traditionally allowed the inclusion of the talent into a virtual scene.

Create Concept Art for VR

One of the greatest projects I have had the opportunity to work on was TerraChi – A VR meditation experience. I worked collaboratively with a team as the lead concept artist. This project was mentored under the guidance of the UTS Animal Logic Academy. A lot of inspiration came from ancient Chinese temples, structures, and landscapes. My first task was to create initial sketches and concepts of what TerraChi could look like and be. And with help from colleagues, we set out to define the art style of the game.

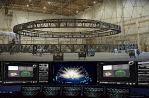

The world’s largest and most advanced volumetric video capture and creation facility: Intel Studios

Located in the heart of the entertainment industry in Los Angeles, Intel Studios features the world’s largest volumetric video stage and a comprehensive post-production and control facility powered by the latest technology. Intel has spent over a year building out the 25,000 square-foot studio with 10,000 square feet dedicated just for video capture in real time. To house all the data generated from shooting, there are 10 petabytes of local storage. With this process you can make 360-degree real-time video and choose exactly the perfect angle and picture frame you want to shoot.

Can you cut it?: an exploration of the effects of editing in cinematic virtual reality.

SIGGRAPH 2017: Virtual Reality Is Alive and Flourishing

The SIGGRAPH conference is a yearly event that focuses on the latest in computer graphics and other interactive arts. It is a multidiscipline show, mixing computer imaging with other physical creations. It is also a crossroads for the film, video game, commercial, research and education industries. It has a forward-looking vision while celebrating the recent and not-so-recent past.

ARKit and ARCore are leaving VR in the rearview mirror

AR/VR has become a two speed market, with mobile AR set to have over twice the number of users at launch in 2017 than the entire AR/VR headset market by 2021. Apple ARKit, Google ARCore, and Facebook’s Camera Effects Platform could have 900 million users by the end of 2018, with their launch changing the trajectory of the whole market. Digi-Capital has fundamentally revised the AR/VR market thesis, analysis and forecasts in its Augmented/Virtual Reality Report, Augmented Reality Report and Virtual Reality Report.

“Resident Evil 7: Biohazard” in VR – Reforming the Workflow

When you think of horror games, it’s almost impossible not to think of Resident Evil’s legacy. Capcom’s seventh Resident Evil title delivers the fear that its name promises but this time, in Virtual Reality.

We talked to the Resident Evil 7: Biohazard (RE7) Development Team, Mr. Kawata (Producer), Mr. Fukui (Technical Director) and Mr. Tsuda (Art Director) about overcoming the various challenges in creating RE7, the transition to physically-based rendering, the development of their company game engine and bringing the franchise to VR.

Front-line insights on VR gaming from world leading games studios and publishers

As virtual reality continues to make waves across industry, it’s gaming which remains the most exciting and promising area for achieving mass consumer adoption. Big publishers are investing and new titles are pushing the boundaries and giving consumers good reason to buy both hardware and games.

Ahead of VRX Europe 2018, we’ve gathered senior-execs from some of the world’s most forward-thinking game studios, to get insights on how they’re approaching game development and building towards mass consumer adoption of VR.

VR, AR and More at SIGGRAPH 2017

The beginning of August saw the 44 year old SIGGRAPH conference (Special Interest Group on Computer GRAPHics and Interactive Techniques) return to Los Angeles for the 11th time, and this year the topic was almost exclusively VR. As this is ProVideo Coalition and not VirtualReality Coalition, I’ll be taking a more filmmaker-centric approach to this recap. That being said, the word of the week was “immersion”, and at this point I’m confident we’re on our way to The Matrix within the next 5 years.

CG SOCIETY

- VR IS A FANTASTIC BEAST Framestore’s Andy Rowan-Robinson on building interaction and expanding narratives in virtual reality. The Evolution of VR Storytelling

- YOU GOT THIS: AN ANIMATOR-TURNED-VR CREATOR’S 5 TAKEAWAYS ON GIVING VIRTUAL REALITY A GO

MEET UP Sydney Augmented and Virtual Reality. We are Augmented Reality enthusiasts of all descriptions – technical, arts, sports, industrial, commercial, investors, entrepreneurs and simply any end user. There is a lot happening in this tech niche and in particular locally which beckons more co-ordinated face to face interactions and formal/informal meetup gatherings. Jump on board with us!

ALIVE: live-action lightfields for immersive VR experiences is an eighteen-month industrial partnership between Foundry and Figment Productions together with University of Surrey and funded by InnovateUK. The collaboration aims to develop the technologies required to capture live-action content as a fully immersive Virtual Reality experience, where the viewer has the freedom to move inside the content.

From dystopia to utopia: the new hyperreality Online shopping, led by Amazon, is hugely popular. But imagine being able to strap on a VR headset and ‘walk’ through those digital stores, getting all the best bits of visiting the shops without suffering the crowds of people or lugging around heavy bags. An obvious application for the medical industries is hyperreal training scenarios. Instead of operating on plastic models or cadavers, budding surgeons – or senior doctors updating their skills – could use VR or AR to retrace the steps of a recorded real-life surgery.

ON MIXED REALITY AND THE BRAVE NEW VIRTUAL WORLD

Our brains, which are themselves highly complex organic computers, collectively comprise this vast, great network. “Humanity.” Your body is essentially an organic bio-shell – a highly advanced haptic suit, designed specifically to allow it to fully experience the physical world in which it exists, through the five senses – sight, smell, touch, sound, taste – for the purpose of sending information to your brain from the time it develops in the womb, gathering, categorizing and storing data in a complex database deep within the brain where memory is stored.

What Google’s Blocks Could Mean for the Future of Virtual Reality Google introduced a new VR-based toolset called Blocks that will specifically allow developers to create and design 3D objects — for use with VR experiences — in their natural environment.

Spielberg warns VR will rule the future at Comic-Con. Presenting his new film Ready Player One, Spielberg spoke about the challenges of adapting Ernest Cline’s book about a dystopian future where humans take refuge in VR after the world is destroyed by global warming.

Ana Serrano is the Chief Digital Officer at the Canadian Film Centre and Founder of CFC Media Lab, the world-renowned institute for interactive storytelling. We spoke with Ana, who spoke at our 360 Vision event, about her experiences with VR and the trends she’s noticed over the last year.

Human anatomy and limited technology: the barriers to truly immersive VR

How can we create genuinely convincing virtual reality? Virtual reality (VR) is on the verge of mainstream adoption, while augmented reality (AR) experiences have already begun to enter the public consciousness. What began as a niche is finally accelerating in its journey towards popular use.

Mimicking the human eye

The experience is also impacted by the current field of view (FOV) offered by first- and second-generation headsets. Our binocular vision makes the human FOV around 200° horizontally, but most headsets give a measly 110° – just over half of what we see in reality.
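The "just over half" figure can be checked with a line of arithmetic. The short Python sketch below uses the two angles quoted above; the function name `fov_coverage` is hypothetical, and the calculation compares horizontal angles only, so it says nothing about vertical coverage or perceived image area.

```python
def fov_coverage(headset_fov_deg: float, human_fov_deg: float = 200.0) -> float:
    """Fraction of the human horizontal field of view that a headset covers."""
    if human_fov_deg <= 0:
        raise ValueError("human_fov_deg must be positive")
    return headset_fov_deg / human_fov_deg


# 110 degrees of headset FOV against roughly 200 degrees of human FOV.
ratio = fov_coverage(110.0)
print(f"Headset covers about {ratio:.0%} of the horizontal field of view")
```

On these numbers a headset would need to add roughly 90° of horizontal coverage to match what we see in reality.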

On the road to something special

We have a way to go before we reach true immersion in VR and AR content, with major developments in both software and hardware still to be made. The push towards non-linear, adaptive experiences is an exciting one, but it will all be undone if a slight movement of the head means the illusion is broken – or indeed if we all end up feeling sick halfway through.

Digi-Capital AR/VR Dealmakers invested over $800 million in Q2 2017, after a quieter first quarter.

The now familiar pattern for AR/VR investment saw the highest volume of investments from pre-seed through Series A, with chunky later stage deals accounting for most of the dollar value in the quarter (Improbable over $500 million, Unity $400 million – reported as half investment and half secondary share sale by employees).

VR’s Healthcare Revolution: Transforming Medical Training

Recently, we partnered with Children’s Hospital Los Angeles (CHLA) to build a VR simulation that places medical students and staff in rare yet high-risk pediatric trauma situations where split-second decisions determine whether a patient lives or dies.

A New Way for Therapists to Get Inside Heads: Virtual Reality

SAN FRANCISCO — Dawn Jewell recently treated a patient haunted by a car crash. The patient had developed acute anxiety over the cross streets where the crash occurred, unable to drive a route that carried so many painful memories.

Two-Thirds Of Architectural Visualisation Professionals Will Use VR In 2018

After the success of the medium in 2017, next year will be a big one for virtual reality (VR). According to a new report released by Chaos Group, it’s not just videogames that will see a significant drive. Architectural firms are also jumping on board, with a significant push for VR visualisation.

WA producer heads to Antarctica for ground-breaking VR experience

Briege Whitehead of White Spark Pictures is packing her bags, soon off to lead a team to Antarctica, producing and co-directing a world-first one-off factual virtual reality (VR) project THE ANTARCTIC EXPERIENCE, filmed in the isolated continent.

Bipolar Studio Builds Award-Winning VR Experiences with EVO

People driven by the desire to explore unknown spaces can help bring incredible new ideas and innovation to light. That desire led Gevorg Karensky to found Bipolar Studio in 2015, and in a short time, the studio has accomplished a lot. For starters, they invented and patented the first VR stabilization tech. They also built the first nine-camera RED Dragon VR rig, partnered with Google to create a film for the Google Daydream headset launch, and won the Mobile Grand Prix at Cannes for their work on the New York Times VR campaign, where they gave away one million Google Cardboard headsets in an effort to enable journalists and filmmakers to bring their vision to a broader audience.

MediaMonks bends reality with CARA VR

The Possibilities of Google Earth in Virtual Reality

18 VR IDEAS THAT COMMERCIALLY WORK FOR EDUCATION

The three crucial VR headset developments you don’t want to miss

How will we know when VR has truly ‘made it’? By most measures, this will be when the technology has cracked the mass consumer market: when it can be found in homes and public spaces across the world. As we’ve explored before however, we’re a long way off from that point. To get there, we’ll need to see a marked jump in the level of immersion a user can experience in VR.

Magic Leap finally shows off its massively hyped mixed reality headset

A blend of AR and VR that theoretically provides the best of both worlds — for the better part of a decade, and with over a billion dollars in funding, the pressure is on to show some results sooner rather than later. To that end, the company finally revealed its first actual product today, the Magic Leap One, and it looks insane.

VR TERROR: How to scare your players behind the headset

With graphics quality at an all-time high and with the official arrival of VR, the horror genre has never been more terrifying. But any good horror creator knows that the key to making a frightening experience is in the execution. We talked to a few horror developers to get some insight on creating the ultimate, terrifying VR game.

What they don’t tell you about 360° VR production

Will VR fundamentally change the way filmmakers approach their craft?

“VR journalism should focus on storyliving, not storytelling” and other insights from Google’s new VR study

Working with 360° Content

How HBO created a Westworld VR experience that lets you step into the show

LAST CALL SPINS MORE VR TALES FROM THE GALAXY’S EDGE

Sony reveals first images of redesigned PlayStation VR2

AR/VR startups raised $3 billion last year led by a few industry juggernauts

Tech companies working with augmented reality and virtual reality technologies raised more than $3 billion in venture funding in 2017. This data comes from analytics firm Digi-Capital and suggests that while the buzz surrounding the AR/VR space has tapered off, the sheer amount of cash getting pumped into the industry is continuing to surge.

We’re at the virtual reality tipping point… even if the headsets are still bulky and awkward

In the early 1990s, many people gawked at ugly and bulky mobile phones, unable to see just how much they’d change the face of communication. In this piece, Kaga Bryan argues we’re at the same point now with virtual reality, and he’s having a bit of déjà vu.

CONSUMER TECHNOLOGY ASSOCIATION

Majority of VR users say it will revitalise media, education, work, social interaction, travel and retail.

Seven out of 10 early adopters of virtual and/or augmented reality hardware have bought into the buzz and firmly feel that the technology is set to disrupt media, education, work, social interaction, travel and retail.

Using Virtual Reality to Treat PTSD

A documentary about a clinic in Los Angeles that uses virtual reality simulations to treat war veterans with PTSD. Hosted by a former master sniper for the Canadian military, this documentary short explores the efficacy of these systems to treat a still-mysterious mental condition.

THE MOLECULE ON INVISIBLE

VR’s first scripted series sheds new light on medium’s unknowns by April Robinson

The Molecule, says CEO Chris Healer, is very good at solving unique challenges. So when 30 Ninjas came calling with a scripted VR series to be directed by its principal, Doug Liman (The Bourne Identity, Edge of Tomorrow) and shot by Jaunt VR, Chris and his team were all in. In his words, “It made so much sense.”

Based on a screenplay by Julina Tatlock and Oscar-winning screenwriter Melisa Wallack, the mystery series centers on an heiress’ quest to guard her recently deceased grandfather’s estate and the family’s supernatural secrets. The story, told over five, roughly six-minute episodes, marks the first attempt at using virtual reality as an episodic-friendly, storytelling format while challenging many of VR’s current known truths.

SCREEN NSW videos from our virtual reality event: 360 Vision – Virtual Reality Lab

Check out our six new videos with screen producers and VR pioneers sharing their thoughts on where VR is heading, what the future could look like and what could make the next great VR project, now available for your inspiration. The videos come from our 2016 virtual reality event, 360 Vision, which was presented by Screen NSW, ABC, Screen Australia, Event Cinemas and AFTRS. The videos capture the conversation from the day and include talks by Collisions creator and 360 Vision Mentor Lynette Wallworth, Rose Troche: internationally renowned director, producer and screenwriter, Ana Serrano, Gabo Arora and Barry Pousman to name a few.

Predicting a Film In VR: Behind the Scenes

The Making of a Virtual Film for Architecture and Real Estate

Deep Video Portraits – SIGGRAPH 2018. A breakdown of how deep video portraits work, from SIGGRAPH 2018.

Virtual reality films are fun to watch, but there’s a ton of work that goes into creating them. We sat down with Here Be Dragons to understand the effort behind their films. Watch the behind the scenes video here.

ABC Creates Immersive Virtual Reality Experience

BLENDERSUSHI / AN 360 VR Gallery (LIVENODING227)

ADOBE

Building intuitive AR experiences that matter with the Smithsonian, the Hydrous, and Adobe Aero

Augmented reality. Now more of a reality.

Premiere Pro offers support for viewing VR video in the Monitor panels.

It also detects if the clip or sequence has VR properties and automatically configures the VR viewer accordingly. You can publish VR video directly to the web from Premiere Pro to sites such as YouTube or Facebook.

Apple is working on an AR headset with a new OS, report says

Bloomberg reported today that Apple is working on an augmented reality headset that it hopes to bring to market in 2020. The headset would feature its own display rather than relying on an iPhone, and it would run a new spin-off from iOS in the vein of watchOS or tvOS, currently called rOS internally, for “reality operating system.”

AUTODESK

Daryl continues to add visual complexity to the environment in his VR creation. He shares a workflow using Arnold to get image-based lighting onto texture maps so they can be layered on top of the reality-captured environment. Follow along on the Journey to VR blog as Daryl builds his first VR experience.

- 3D to VR: The Essentials. We’re kicking off our journey from 3ds Max to VR, and we want you to come along. Join us for a daily video tutorial as we walk you through the fluid workflow of 3ds Max’s powerful 3D animation tools combined with a new interactive toolset. 3ds Max 2018.1 features 3ds Max Interactive, a real-time engine that extends the power of Max with tools to create interactive and virtual reality (VR) experiences.

- Reforming the Workflow Resident Evil 7: Biohazard in Virtual Reality. We talked to the Resident Evil 7: Biohazard (RE7) Development Team, Mr. Kawata (Producer), Mr. Fukui (Technical Director) and Mr. Tsuda (Art Director) about overcoming the various challenges in creating RE7, the transition to physically-based rendering, the development of their company game engine and bringing the franchise to VR.

- Virtual Production to VR with CBS Digital. Craig Weiss and Jim Berndt of CBS Digital talk about creating the Stranger Things VR experience, and the convergence of virtual production and VR.

Keynote: Augmented Reality to Virtual Reality – from patient care to surgical simulation

VR Dreaming: hopes and predictions for where virtual reality is headed in 2017

You got this: An animator-turned-VR creator’s 5 takeaways on giving virtual reality a go

AUTODESK UNIVERSITY

VR, AR, and the cloud were definitively top-of-mind for design visualization artists and architects at this year’s event. Want to watch a missed class?

MEL Chemistry VR

The Now and Future of Virtual Reality for Design Visualization

VFX Workflows for Architectural Visualization

Procedural PBR Material Creation Using Substance Designer for Visualization

Positioning and Marketing a Skyscraper – 432 Park Avenue

Render Like a Photographer

BLACKMAGIC

Blackmagic eGPU Optimises Performance for Resolve, Games and VR

Blackmagic Design’s new eGPU is a high performance graphics processor optimised for professional video and graphics, such as those used in DaVinci Resolve software, 3D gaming and VR packages. The eGPU was designed in collaboration with Apple to improve graphics performance and accelerate computational tasks. It has a built-in AMD Radeon Pro 580 graphics card, two Thunderbolt 3 ports, HDMI 2.0 and 4K output, 85W of charging power and four USB 3.1 connections.

THE FOUNDRY

- A round-up of the latest in VR, AR and MR

- Augmenting reality in the AEC industries

- A hyperreal history: the evolution of hyperreality

- Architect 2.0: How technology is driving the industry

- A deep dive into Virtual Production workflows

- Bridging the uncanny valley: what it really takes to make a deepfake

- Be transported into new virtual worlds with AR portals

- The Big Interview: Ben Grossmann, co-founder and CEO of Magnopus

- Can you afford to miss the virtual train?

- Deep inside DNA VR – London’s first VR arcade

- Exploring infinite walking in virtual reality

- Explaining deep learning: what it is, and what it’s not

- Exploring VR’s accessibility problem

- FAME: film-quality augmented and mixed reality experiences

- Foundry Trends’ VR Jargon Buster

- Five of the top trends to come out of SIGGRAPH 2020

- Five of the biggest trends from FMX 2019

- From Russia with agility: The studio pioneering a new way of working

- Galvanized Souls get the 360° treatment

- Human anatomy and limited technology: the barriers to truly immersive VR. Virtual reality is on the verge of mainstream adoption, but we’re a long way off experiences realistic enough to be indistinguishable from real life. So how can we create genuinely convincing, immersive VR?

- How design visualisation and VR will transform the AEC industries

- How mixed reality is sending us across the solar system

- How the AR Cloud will transform immersive technology

- Hyperreality: the new trend you need to be ready for

- Is enterprise the key to unlocking VR’s potential?

- Kanova: flexible VR sculpting

- Life, death and VFX: how Gulliver Studios helped create Squid Game

- Making the impossible possible with virtual production at Imaginarium Studios

- Mixed reality: the future of AR devices?

- Mixed reality: the gateway to the mirrorworld

- Modo VR: fully immersive design content creation

- More real than real: creating a feeling of ‘presence’ in VR

- Meet the company transforming real-time content creation

- Near Real-Time: Virtual Production with Nuke on-set

- Meet the creators: content creation in the virtual age

- Our new initiative to help you embrace VR

- Peeling back the layers of the virtual production onion

- Project EIST: bringing the beauty of choice beyond media and entertainment

- Q&A with Important Looking Pirates: the studio pivoting to virtual production

- Reaching true VR immersion: one blink at a time

- The three crucial VR headset developments you don’t want to miss

- This is why eye-tracking in VR is about more than foveated rendering

- The unexpected way VR could end up in your home

- Virtual worlds and adaptive light-fields: an interview with Disney Research

- Virtual reality as a social experience

- VR: It’s time for a reality check

- VR’s Eastern Promise: the growth of virtual reality in the Far East

- VR? AR? MR? Sorry, I’m confused.

- Virtual reality: what drives multiple hype waves?

- Walking in the footsteps of war survivors with virtual reality

- What they don’t tell you about 360° VR production

- What the dawn of the 5G era means for immersive technologies

- What’s new in virtual production?

- Why the founders of London’s first VR arcade have bet on VR going places

- Working in harmony: Augmented Reality and Machine Learning

- Adaptively sampled distance fields (ADF)

- Degrees of freedom (DOF)

- Field of view (FOV)

- Field of view adaptive streaming (FOVAS)

- Focal Surface Displays

- Foveated rendering

- Full immersion virtual reality (FIVR)

- Haptics

- Social VR

- Volumetric capture

Virtual reality plug-in toolset for NUKE

CARA VR™—the much-anticipated new plug-in toolset for the NUKE® family of compositing, editorial and finishing products—helps you to create incredible live-action virtual reality content.

With a specialised toolset that includes headset review, CARA VR dramatically speeds up the challenging and often tedious process of stitching and compositing 360° video footage, so you have more time to focus on creating high-quality immersive experiences.

ADF technology is a way of representing a shape in 2D or 3D that can significantly improve and speed up the ability to manipulate that shape. It gives you much more efficiency and power: you can zoom in and out to levels of detail or scale not previously possible, because the operations carried out on ADFs are computationally much more efficient. You’re sculpting using the algebra built over the ADF, rather than pushing millions of polygons around. The power to zoom in and create intricate details, or zoom out and work at huge scales, means artists can go way beyond knocking together basic designs for experimentation or fun. They could feasibly complete very intricate or very large-scale work to a professional standard, fast.

REAL TIME

REAL TIME GRAPHICS

Could you introduce yourself? I’m Matt Swoboda, the founder and director of Notch, a visual creation tool that works entirely in real-time. Notch technology powers visuals for acts from U2 to Beyoncé and Ed Sheeran, Eurovision, The Brits and numerous music festivals worldwide. Notch is a solution for artists and producers working in live events, interactive installations, mixed reality production, virtual reality, and motion graphics. Real-time isn’t just about pumping out frames faster, it changes the creative process completely. If a creative can see the full and final result of a render in real-time it changes the way they think when creating content. The iteration cycle moves to zero.

FACEBOOK CAN MAKE VR AVATARS LOOK—AND MOVE—EXACTLY LIKE YOU

For years now, people have been interacting in virtual reality via avatars, computer-generated characters that represent us. Because VR headsets and hand controllers are trackable, our real-life head and hand movements carry into those virtual conversations, the unconscious mannerisms adding crucial texture. Yet even as our virtual interactions have become more naturalistic, technical constraints have forced them to remain visually simple. Social VR apps like Rec Room and Altspace abstract us into caricatures, with expressions that rarely (if ever) map to what we’re really doing with our faces. Facebook’s Spaces is able to generate a reasonable cartoon approximation of you from your social media photos but depends on buttons and thumb-sticks to trigger certain expressions. Even a more technically demanding platform like High Fidelity, which allows you to import a scanned 3D model of yourself, is a long way from being able to make an avatar feel like you.

What went into creating Vincent, a hyper-real digital human

Until very recently, directly mapping an actor’s performance onto photorealistic digital humans using real-time rendering was considered impossible. We’d seen the likes of Avatar and Alita in the movies. Still, these involved time-consuming offline rendering and post-processing work.

But thanks to the advancements in graphics hardware and software, as well as the relentless hard work from innovative teams in the field, we’re seeing real-time rendered digital humans.

Meet Vincent, a digital human born from Korea-based creative studio, Giantstep. You can meet the creators at Pause Fest 2020.

Creating digital humans with Nuke and Katana

As we’ve touched on before, de-aging is becoming more widely used within the VFX industry. With more than five films using the technique last year alone, one that stood out amongst the many was Weta Digital’s Gemini Man.

Their goal was to “finally cross the uncanny valley and bring to screen a totally emotive digital human”, Guy Williams, VFX Supervisor for Gemini Man, tells us. While we have seen digital reincarnations in films before, Junior—Will Smith’s digital counterpart—is one of a kind, as up until now no studio has attempted to create a wholly digital human cast in a leading role.

The Rise of Real-Time Digital Humans: Pulse Panel.

This week fxguide’s Mike Seymour hosted an Epic Games panel discussion on the rise of real-time digital humans. In addition to his work at fxguide, Mike Seymour is a longtime researcher and writer on digital humans. Mike was joined by Jerome Chen of Sony Pictures Imageworks, Amy Hennig of Skydance Media, Isaac Bratzel of Brud (makers of virtual influencer Lil Miquela), and Vladimir Mastilović of 3Lateral, which is now part of Epic Games.

Real-Time Live Preview: SIGGRAPH 2021

Real-Time Live! is an attendee-favorite, one-night-only event that spotlights the most innovative real-time projects of the past year. Virtual attendees can watch some of the world’s most original, jury-reviewed, interactive projects of 2021. This year’s live event showcases an incredible sample of the latest in real-time, and if you watch live, you can also vote for this year’s Audience Choice winner.

REAL TIME MIKE

SIGGRAPH 2017: Real-Time Facial Capture Moves Forward With ‘Meet Mike’

SIGGRAPH 2019 to Tackle Facial Animation, AI, Ethics in Games, Showcase Future of Real-time Production in L.A.

Meet Mike at SIGGRAPH 2017

Behind the scenes of #MeetMike fxphd

Speaker: Mike Seymour, fxGuide

Mike Seymour talks about the presentation he gave at SIGGRAPH 2017 in Los Angeles earlier this year. MEETMIKE showcases the latest research in digital human technology, with leading industry figures interviewed live and in real-time by a photo-realistic avatar in a ‘virtual set in Sydney’, presented at the VR Village at SIGGRAPH 2017 in Los Angeles.

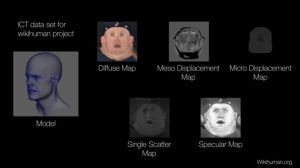

Producing a digital human in CG has been the ‘Manhattan’ project of computer graphics: it is both extremely difficult and has wide-ranging implications, both commercially and ethically. Digital Actors, Agents and Avatars are all very hot topics, but what few in the industry anticipated is how rapidly this would move from the issue of rendering a high-quality human to being able to do so in real time. Come and see MEETMIKE at ACM SIGGRAPH ANZGraph, where Mike Seymour will explain the project, first shown at SIGGRAPH 2017 in LA, that aimed not only to produce a realistic human but to do so at 90 frames a second in stereo in VR. Where real time at 30 fps allows about 33 milliseconds per frame, this international team has to render each frame in just 9 milliseconds. To produce such fast results, the team deployed deep learning AI for a markerless facial tracker and solver, and used a custom build of Epic Games’ UE4.
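A frame rate translates into a per-frame time budget of 1000 milliseconds divided by the frames per second. The short Python sketch below works that arithmetic through for a few rates; the function name `frame_budget_ms` is illustrative and not part of the MEETMIKE project.

```python
def frame_budget_ms(fps: float) -> float:
    """Return the time available to render one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# Compare a conventional real-time rate with typical VR rates.
for fps in (30, 60, 90):
    print(f"{fps} fps -> {frame_budget_ms(fps):.1f} ms per frame")
```

At 90 fps the whole budget is about 11.1 ms per frame, so a 9 ms render target would leave roughly 2 ms for everything else that has to happen in the frame. That split is an assumption made here for illustration, not a figure given by the team.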

In this worldwide first, on each day of the SIGGRAPH show digital Mike met digital versions of industry legends and leading researchers from around the world, including Dr Paul Debevec, Tim Sweeney, Oscar winners Ben Grossmann (Magnopus) and Wayne Stables (Weta), Christophe Hery (Pixar) and Glenn Derry (Fox).

Together they met and conducted interviews in “Sydney” via virtual reality which was watched either in VR or on a giant screen.

This project is a key part of a new research project into virtual humans as Actors, Agents and Avatars. The ANZGraph session will provide valuable data and insights for taking digital humans research to the next level, and share lessons learnt from the collaboration of R&D teams from around the world. MEET MIKE researchers span four continents, three universities and six companies including Epic Games, 3Lateral, Cubic Motion, Loom.ai and the Wikihuman global research project. The project aimed to explore best of class scanning, rigging and real-time rendering. Please note that this project aims to give away nearly all the data for non-commercial use and is a non-profit research effort.

Digital humans are a new form of computer-human interface: the computer has a face that reacts emotionally, exhibiting matching and using affective computing, or artificial emotional intelligence.

People like faces, emotion that is face to face, facial contact, live, immediacy, interactivity. We think of people, our identity as faces. The face is giving us the emotional context of what is going on, we care about it a lot.

Give the computer faces and emotions and we start having a thing we can interact with. To do this we need some AI and deep learning. Think about things that are interactive. The aim is to create a realistic person.

Mike also spoke about matching and mirroring. Remember that people tend to like people who are like themselves. You will tend to like people who are like you, I will tend to like people who are like me. The most important key to gaining instant rapport with another individual, therefore, is to make ourselves like them. One way that we can do this is to match and mirror their words (7%), tonality (38%) and physiology (55%).

Affective computing is the study and development of systems and devices that can recognize, interpret, process, and simulate human affects. It is an interdisciplinary field spanning computer science, psychology, and cognitive science.[1] While the origins of the field may be traced as far back as to early philosophical inquiries into emotion,[2] the more modern branch of computer science originated with Rosalind Picard’s 1995 paper[3] on affective computing.[4][5] A motivation for the research is the ability to simulate empathy. The machine should interpret the emotional state of humans and adapt its behavior to them, giving an appropriate response to those emotions.

Uncanny valley is a hypothesized relationship between the degree of an object’s resemblance to a human being and the emotional response to such an object. The concept of the uncanny valley suggests that humanoid objects which appear almost, but not exactly, like real human beings elicit uncanny, or strangely familiar, feelings of eeriness and revulsion in observers. Valley denotes a dip in the human observer’s affinity for the replica, a relation that otherwise increases with the replica’s human likeness.

Uncanny valley – if you do not get it right, you can tell it is fake and in a film that matters.

Deep learning (also known as deep structured learning or hierarchical learning) is part of a broader family of machine learning methods based on learning data representations, as opposed to task-specific algorithms. Learning can be supervised, partially supervised or unsupervised. Some representations are loosely based on interpretation of information processing and communication patterns in a biological nervous system, such as neural coding that attempts to define a relationship between various stimuli and associated neuronal responses in the brain. Research attempts to create efficient systems to learn these representations from large-scale, unlabeled data sets. Deep learning architectures such as deep neural networks, deep belief networks and recurrent neural networks have been applied to fields including computer vision, speech recognition, natural language processing, audio recognition, social network filtering, machine translation and bioinformatics, where they produced results comparable to and in some cases superior to human experts.

Synthetic data is “any production data applicable to a given situation that are not obtained by direct measurement” according to the McGraw-Hill Dictionary of Scientific and Technical Terms; where Craig S. Mullins, an expert in data management, defines production data as “information that is persistently stored and used by professionals to conduct business processes.” The creation of synthetic data is an involved process of data anonymization; that is to say that synthetic data is a subset of anonymized data. Synthetic data is used in a variety of fields as a filter for information that would otherwise compromise the confidentiality of particular aspects of the data. Many times the particular aspects come about in the form of human information (i.e. name, home address, IP address, telephone number, social security number, credit card number, etc.).

The Wikihuman project is a collaboration by the members of the Digital Human League to advance the study of digital humans. For more information on the Wikihuman project, please visit their blog at wikihuman.org.

Chaos Group – We would like to officially announce the wikihuman.org site. This is the start of an ongoing project by the Digital Human League (DHL). Our mission for this project is to study, understand, and most importantly share our knowledge of digital humans.

alSurface Shader, Wikihuman project, by Mike Seymour. The “al” in alSurface refers to Anders Langlands, a VFX sequence supervisor currently at Weta Digital, who wrote a series of shaders for Arnold.

Surface roughness, often shortened to roughness, is a component of surface texture. It is quantified by the deviations in the direction of the normal vector of a real surface from its ideal form. If these deviations are large, the surface is rough; if they are small, the surface is smooth. In surface metrology, roughness is typically considered to be the high-frequency, short-wavelength component of a measured surface. However, in practice it is often necessary to know both the amplitude and frequency to ensure that a surface is fit for a purpose.
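One common way to put a number on those deviations is the arithmetic mean roughness, usually written Ra: the average absolute distance of a measured height profile from its mean line. The Python sketch below is a minimal illustration. The function name `mean_roughness` and the sample profiles are made up for this example and are not taken from the Wikihuman project or any shader.

```python
from typing import Sequence


def mean_roughness(heights: Sequence[float]) -> float:
    """Arithmetic mean roughness (Ra) of a sampled height profile.

    Ra is the mean absolute deviation of the heights from their mean line.
    """
    if not heights:
        raise ValueError("profile must contain at least one sample")
    mean_line = sum(heights) / len(heights)
    return sum(abs(h - mean_line) for h in heights) / len(heights)


# Two hypothetical profiles sampled along a line (arbitrary units).
smooth_profile = [0.0, 0.1, -0.1, 0.05, -0.05]
rough_profile = [0.0, 2.0, -2.0, 1.0, -1.0]

print("smooth Ra:", mean_roughness(smooth_profile))
print("rough Ra:", mean_roughness(rough_profile))
```

Ra captures amplitude only. Two profiles with very different spacing between peaks can share the same Ra, which is why the passage above notes that frequency often needs to be known as well.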

Woody between 1995 and 2017.

BabyX v3.0 Interactive Simulation Official

IBM Watson presents Soul Machines, LENDIT Conference 2017 (Professional Camera)

Some interesting information:

Emotions, immediacy, we care about faces, smiling and the effects on a digital person.

Creating an optical flow for the merge between the two expressions with no texture loss; the blend shapes on his face need something to drive them. Fluid simulation using training data: learnt from an example, we can make the data. Using synthetic and training data for more powerful interactions creates a higher affinity; it is more trustworthy. Get the points between the smile and the frown and the computer can reconstruct the points between them.
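The idea in these notes, that given the point positions for two expressions the computer can reconstruct the positions in between, can be illustrated with plain linear interpolation between two blend shapes. This is a simplified stand-in, not the learned, optical-flow-based approach described in the talk. The function name `blend` and the vertex coordinates are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]


def blend(start: List[Point], end: List[Point], weight: float) -> List[Point]:
    """Linearly interpolate every vertex between two expressions.

    weight=0.0 returns the start expression, weight=1.0 the end expression.
    """
    if len(start) != len(end):
        raise ValueError("both expressions need the same number of vertices")
    return [
        tuple(a + weight * (b - a) for a, b in zip(p, q))
        for p, q in zip(start, end)
    ]


# Two hypothetical mouth-corner vertices in a frown and in a smile.
frown = [(0.0, -1.0, 0.0), (1.0, -0.5, 0.0)]
smile = [(0.0, 1.0, 0.0), (1.0, 0.5, 0.0)]

# Halfway between the two expressions.
halfway = blend(frown, smile, 0.5)
print(halfway)
```

Driving `weight` from a facial tracker rather than a fixed value is what turns a pair of static shapes into a moving expression. How that weight is derived is outside the scope of this sketch.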

Look at the pores of the skin, the Diffuse, Specular and Normal Map and line up the textures for a smile and not a smile. Higher resolution specular maps for the face to be real. Change the pings off it to read the surface texture.

VR, no 30fps, 90fps stereo vertically because of our noses. UV lighting. 75% of the geometry went on the hair.

A sneak peek at MetaHuman Creator: high-fidelity digital humans made easy

IMMERSIVE ENVIRONMENTS

The head is designed to place the nodal point of the lens directly over the pivot point of the tripod to increase the effectiveness of the stitching software.

Use manual settings for exposure, white balance and focus, because conditions change while capturing the sequence of images needed to make up a 360-degree panorama. Take readings from both shadows and highlights before selecting an average exposure setting or one that preserves important highlight or shadow detail. This will help to ensure consistency of colour, focus and exposure throughout the whole picture sequence. Overlap the edges by between 10% and 40%. The result can be output from the stitching program as a flat JPEG or TIFF file or as an interactive panoramic movie.

If the lighting is difficult or there are moving items in the scene, shoot twice as many frames, usually by going around twice, with bracketing if appropriate. Panos are often taken with fixed values for exposure.
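The overlap guidance above determines how many frames one rotation needs. Each new frame adds only the non-overlapping part of the lens's horizontal field of view, so the count is 360 degrees divided by that new coverage, rounded up. The Python sketch below assumes a single-row panorama and a hypothetical 65-degree horizontal field of view. The function name `frames_for_panorama` is illustrative.

```python
import math


def frames_for_panorama(hfov_deg: float, overlap: float) -> int:
    """Frames needed for one full 360-degree rotation.

    hfov_deg: horizontal field of view of the lens, in degrees.
    overlap:  fraction of each frame shared with its neighbour (0.10 to 0.40
              is the range suggested in the text above).
    """
    if not 0 < hfov_deg < 360:
        raise ValueError("hfov_deg must be between 0 and 360")
    if not 0 <= overlap < 1:
        raise ValueError("overlap must be a fraction in [0, 1)")
    new_coverage = hfov_deg * (1.0 - overlap)  # degrees added per frame
    return math.ceil(360.0 / new_coverage)


# Hypothetical lens with a 65-degree horizontal field of view.
for overlap in (0.10, 0.25, 0.40):
    count = frames_for_panorama(65.0, overlap)
    print(f"{overlap:.0%} overlap -> {count} frames per rotation")
```

For difficult lighting or moving subjects, going around twice simply doubles the count returned here.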

CYLINDRICAL PANORAMAS

One way to capture a wide-angle view of the world is to use a wide-angle lens. However, very wide-angle lenses are expensive, and no lens is wide enough to capture a full-surround (360-degree) view. But as most photographers know, you can instead capture a sequence of images, rotating the camera between views, then stitch them together using Photoshop or another tool.

MULTI-NODE PANORAMAS

Multi-node panoramas let viewers jump from one viewpoint to another place in the scene, or move from one scene to another. By clicking on successive nodal points, it is possible to view a range of angles and viewpoints.

QUICKTIME VR (QTVR) Authoring Studio

Apple® QuickTime® VR Authoring Studio software lets you create interactive virtual-reality scenes with point-and-click simplicity. It takes full advantage of the intuitive Mac® OS interface to help you easily turn photos and computer renderings into attention-getting 360-degree views. QuickTime VR Authoring Studio is a powerful one-stop solution for producing all kinds of QuickTime VR content.

It is an image file format developed by Apple Inc. for QuickTime, and discontinued along with QuickTime 7. It allows the creation and viewing of VR photography, photographically-captured panoramas, and the exploration of objects through images taken at multiple viewing angles. Supported up to the discontinued QuickTime version 7, QuickTime VR functions as a plugin for the standalone QuickTime Player, as well as working as a plugin for the QuickTime Web browser

VR PRODUCTION

VIRTUAL REALITY The Technology Behind Virtual Reality: Coding and Design

This link is a special inclusion requested by Girl Scout Katie Miller, who contacted me after finding some of the information on this web page helpful for her Junior Digital Game Design Badge. The VR Coding Design web site is one of the resources her Girl Scout troop found and used in their work towards the badge. It covers the most popular programming languages for VR and everything you need to get started developing virtual reality games. Virtual reality has also been incorporated into Katie’s school curriculum. I was a Girl Guide myself in my youth (not admitting my age here), thought this was wonderful, and am very excited to include it as part of these resources. I went looking for information and found this: Girl Scouts at Home: Juniors – Digital Game Design Badge

VIDEO GAMES and Such Things

Working as a Senior Concept Artist in Game Development

Designing for Impact: Illustrating and Branding a Climate Awareness Escape Game

Gears of Time: Creating an Isometric Action Game with Time-Bending Mechanics

Duskveil: Creating A Game-Ready Expansive World in UE5

From Aspiring Artist to Studio Co-Founder: A Journey in Game Development

The Process Behind the Creation of a Video Game: Steelsilk Championship, Part II

Getting Into Games – PlaySide Developers on Working in the Industry

Process for Creating a Game Ready Character

Huge respect to the creator of the EVO 2023 fightstick so unwieldy they had to carry it around on their back

Developing and Designing a 2D Co-op Puzzle Platformer

Microsoft is bringing AI characters to Xbox

League of Legends 2022

What You Need to Know About Working in Game Development with Ryan McMahon from PlaySide Studios

The Generative AI Revolution in Games

5 Ways Artificial Intelligence Will Revolutionize Game Development

‘Cult of the Lamb’ cleans up at Game Developer Awards while SAFC-supported games shine at PAX

So You Wanna Make Games?

The Game Developer’s Resource Guide

Amazon just introduced a bizarre metaverse-like game to train people how to use AWS

GUERRILLA GAMES HEADS INTO HORIZON FORBIDDEN WEST

The Switch is now Nintendo’s best-selling home console ever

Bigger than Netflix: Video game content is the new king

How Xbox outgrew the console: inside Phil Spencer’s multi-billion dollar gamble

The 8 Generations of Video Game Consoles

Video games have come a long way since Pong wowed the world in the early 1970s. With the launch of the Sony PlayStation 5 and Xbox Series X, the ninth generation of games consoles is now upon us. To find out how we got to this point, here is a brief history of consoles as seen on the BBC.

Jon Favreau and Wevr create emotional connections with VR game Gnomes & Goblins

When visionary film director Jon Favreau saw the award-winning VR ocean experience theBlu, he was inspired to create his own VR project, Gnomes & Goblins. To pull it off, he turned to director of TheBlu Jake Rowell to act as Director and Executive Producer, and to interactive software studio Wevr, co-founded by CEO Neville Spiteri.

Cyberpunk 2077 modders are fixing up the game

Cyberpunk 2077 launched full of issues both big and small, but the mod community settled down and went to work. The game’s Nexus Mods page is now full of interesting additions that smooth the path through the base game’s bumpy ride.

Among Us was 2020’s most downloaded mobile game

In 2020, more mobile users downloaded Among Us than any other game worldwide. Data released by Apptopia ranks the year’s breakout hit at 41 million downloads in the US and 264 million downloads worldwide — beating out games such as PUBG Mobile and Roblox, which both remain in the top 10 highest-grossing games of the year.

Dejero Helps Deliver Remote Gameplay Solution

Dejero has provided the NBA 2K League with a variety of remote connectivity equipment, via Defacto Entertainment and facilities partner Dome Productions, to help the professional esports league save its third season threatened by the ongoing COVID-19 pandemic that prevented its 23 teams from gaming together in the league’s new Manhattan studio. The esports league shifted its entire season 3 production from a studio-based model to a large-scale pan-North-America remote gameplay production model in record time, with the help of Dejero.

New Zealand is put on display in ‘the perfect vehicle’ of a video game

Tourism New Zealand is offering explorers holed up at home the opportunity to explore the country through a campaign film that takes the shape of an open world-style video game. The ‘game’ was revealed in a press conference held on live streaming platform Twitch, inviting the media to watch a walkthrough of Play NZ hosted by gaming influencer Loserfruit.

In the Spotlight: Rita | The ANZ Indie Showcase 2024

MEDIEVAL GAME ENVIRONMENT: CREATING ATMOSPHERIC TERROR IN REALTIME

Welcome to the Medieval Game Environment, an immersive, playable experience set in an eerily serene, remote village, available for free download on the Unreal Engine Marketplace. As a player, you can explore this interactive demo and take in the sights and sounds of a fully dynamic, high-fidelity environment rendered in realtime using the Quixel Megascans ecosystem inside of Unreal Engine.

10 Game Design Tips To Accelerate Your Career

In a few short decades, video games have grown from the hobby of a few to a global market worth $152.1 billion. As they’ve become more advanced, game design has evolved into an exciting career path offering unprecedented scope for creative expression through exciting storylines, compelling visuals, and mesmerizing sound.

Learn how to design your own video game for free

“Learn to code.” It’s been the mantra of the past decade, and for many creatives, a daunting or even pointless prospect. Where do you even start? And who has the time? Well, sheltering in place, and a little help from Unity—the world’s most popular video game engine—may provide these answers!

Omni Adventures

This epic project took a year of intensive concept design and hands-on 3D development. It all started with a trip to Mexico to research the Mayan ruins of Chichen Itza (to take hundreds of photographs and hours of video to document the site), and was followed by months of 3D work in Cinema 4D, painstakingly recreating the entire site and other archaeological locations from all over the globe.

ACTIVEWORLDS

Active Worlds is an online virtual world, developed by ActiveWorlds Inc., a company based in Newburyport, Massachusetts, and launched on June 28, 1995. Users assign themselves a name, log into the Active Worlds universe, and explore 3D virtual worlds and environments that others have built. ActiveWorlds allows users to own worlds and universes, and develop custom 3D content. The browser has web browsing capabilities, voice chat, and basic instant messaging.

Beginner’s Guide to becoming a Video Game Designer

This guide is for absolute beginners who are trying to find a career that complements their passion for playing games, technology, design, coding and digital art. You are the type of person who knows that finance and medicine really aren’t your thing. You also know you never want to wear a suit to work. However, you need some help getting started. It’s also a great guide to share with your parents and guardians who are trying to understand what the hell you are rambling on about, and why you are so excited.

DOOM Hell’s armies have invaded Earth. Become the Slayer in an epic single-player campaign to conquer demons across dimensions and stop the final destruction of humanity. The only thing they fear… is you.

INDIEDB

How not to start a mod

CELIA HODENT — GAME UX CONSULTANT

User experience is an under-appreciated but crucial aspect of modern technology and software — particularly in the field of video games, which have to introduce complex ideas without intimidating players. In this week’s podcast, Chris is joined by UX Director, Celia Hodent, who put her PhD in psychology, specialized in cognitive development, to good use in helping create Fortnite.

Creating a Chinese CyberNeon Game Environment In UE4

My name is Junliang Zhang. I come from Shanghai, China. I am a 3D Environment Artist currently working at 3BLACKDOT in Los Angeles, USA. My passion for the game industry comes from my childhood. I still remember how much time I spent sitting in front of the computer playing most of the classic PC games of the ’90s, such as StarCraft, the Command & Conquer series, and Age of Empires II. While playing these games, I always wondered how their art and gameplay were made, and how I could make some cool mods of my own. That is why my true interest is game art.

REALTIMEUK REBUILDS “GAME OF THRONES” CHARACTERS FOR “WINTER IS COMING”

How do you create photorealistic versions of the world’s most well-known TV stars from scratch? We asked RealtimeUK to break down its epic video game cinematic.

Over the course of eight years and 73 episodes, Game of Thrones has gone from cult TV show to cultural phenomenon. The series is based on George RR Martin’s A Song of Ice and Fire, and millions of people around the world are now tuning in to find out about the fate of the Starks, Lannisters and Targaryens as it draws to a close.

From artist to engine: revolutionizing the game art pipeline

How games are delivering increasingly innovative experiences. As an ever-evolving industry driven by creative technologies, games are always looking to the future to deliver increasingly innovative and visually captivating experiences. Today’s 3D game artists aren’t just tasked with pushing polygons beyond the boundaries of previous generations; they’re helping to shape a whole new world of immersion across the AAA and indie landscape.

The sheer volume and variety of games hitting the market in recent years is staggering, thanks in large part to accessible tools like Unity and Unreal Engine lowering the barrier to entry and allowing more developers to create impressive projects in a fraction of the time. With studios of all sizes now armed with even more ways to streamline and shorten the development process, game artists benefit from new technologies and techniques that help them keep pace without sacrificing the quality of their work.

How to Make a Graduation Video-Game Project

For our inspirations, we did not want to find ourselves too quickly copying the artistic direction of a particular game, so we avoided referencing existing games as much as possible.

Our universe was created around multiple references from reality and from artworks made by independent artists. These two points were very important for us: on the one hand, we wanted our real-world references to lend credibility to our creations; on the other, we wanted to tint these creations with references from other artists, all without ever losing the essential features of medieval city and castle architecture or of armour design.

DESIGNING WEAPONS AND PROPS FOR GAMES

Hi, my name is Mauricio Llano, a 3D game artist looking to break into the industry. I’ve done two internships, one with Lee Lanier for animation and VFX and the second at the amazing outsource company CGBot. The latter is where I found my love for doing art for games. At first, I wanted to go for environment art. But then I realized I needed to polish my skills on single assets first, and what better way to do it than doing Weapons and Props with Ethan Hiley? All of you who are thinking of taking any course, feel confident that it is well worth it. I had industry exposure, what you learn is the real deal, and you learn amazing tricks from industry professionals.

GameDev Ep. 46: Dead by Daylight with Behavior’s Damien Devaux

This week, we chat with Behavior’s Damien Devaux, the principal Character Artist on Dead by Daylight. He speaks about his involvement on the project, including the new Leatherface DLC, as well as some of the techniques he uses on a day-to-day basis. Enjoy!

Indie horror games for a good fright

Halloween hasn’t really taken off in Australia as much as it has in the northern hemisphere, but that won’t stop costume-lovers and scare-enthusiasts alike from celebrating! Whether that means dressing up, baking some tasty treats or hosting a movie marathon that’s bound to be a scream, the spooky season is here!

ITCH.IO is a simple way to find and share indie games online for free.

Video games have historically been culturally colourless, but Australians have a unique approach and are now exploring history and storytelling that are uniquely Australian.

CG Society: BEHIND THE XBOX ONE X RELEASE TRAILER, WITH MATTHEW ENCINA

Microsoft created waves at E3 this year with the announcement of their new gaming console, Xbox One X. Kicking off the release was a spectacular trailer created by the crew at Blind. The minute we saw it, we knew we had to find out more from the team about how it was made. Below, Creative Director Matthew Encina offers an exclusive look inside the inspiration, process and tools of the momentous trailer.

EPIC GAMES Unreal Engine

If You Love Something, Set It Free. You can download the engine and use it for everything from game development, education, architecture, and visualization to VR, film and animation. When you ship a game or application, you pay a 5% royalty on gross revenue after the first $3,000 per product, per quarter. It’s a simple arrangement in which we succeed only when you succeed.

5 IndieGoGo projects to keep an eye on

Who says the creativity well has run dry? We’re still seeing a number of innovative entrepreneurs making waves in 2017. You’ll find a lot of them pitching their ideas on crowd-funding websites such as Kickstarter and IndieGoGo, where anyone can pledge some money to support new products and business ideas.

Battlefield 1 Making a Game on The Great War

WORDS OF WISDOM FROM SUCCESSFUL INDIE DEVS Looking to see what it takes to become a successful indie developer? You’ve come to the right place. We spoke to ten game devs and asked for their words of wisdom on making it in the indie world.

10 OF THE SCARIEST EXPRESSIONS IN GAME DEVELOPMENT

Some games: BioShock Infinite, L.A. Noire, Borderlands: The Pre-Sequel, Flight Control, Fruit Ninja, Crossy Road.

OUR TOP GAME PICKS for 2017 iiNet

With a number of great games in the lineup, you might not know where to start when it comes to planning out your pre-orders or tactical secondhand purchases.

SPACEWAR! 1962 Steve Russell

COMPUTER SPACE 1971 Nolan Bushnell, PONG 1972 Atari

PAC-MAN, PuckMan Toru Iwatani ARGO version, PONG

ASTEROIDS, AMPED, ACCLAIM

BLOOD WAKE, BUBBLE BOBBLE

CRAVE, CAPCOM, CENTIPEDE

DOOM, DEAD OR ALIVE 3, DEFENDER, DONKEY KONG, DIG DUG

FROGGER

GALAXIAN

HALO

INTERPLAY

JOUST

LUCAS ARTS

MYST, MISSILE COMMAND, MOON PATROL

NAMCO

ODDWORLD: MUNCH’S ODDYSEE

PROJECT GOTHAM, POOYAN, PENGO

Q*BERT, QIX

SEGA, SIERRA ENTERTAINMENT, SHREK, SUPER MARIO BROS 3, SPACE INVADERS, SCRABBLE

TAKE-TWO, TIME-PILOT, TEMPEST

UBI SOFT

ZAXXON

MAME ENTERTAINMENT: originally stood for Multiple Arcade Machine Emulator. MAME’s purpose is to preserve decades of software history. As electronic technology continues to rush forward, MAME prevents this important “vintage” software from being lost and forgotten. This is achieved by documenting the hardware and how it functions. The source code to MAME serves as this documentation. The fact that the software is usable serves primarily to validate the accuracy of the documentation (how else can you prove that you have recreated the hardware faithfully?). Over time, MAME absorbed the sister-project MESS (Multi Emulator Super System), so MAME now documents a wide variety of (mostly vintage) computers, video game consoles and calculators, in addition to the arcade video games that were its initial focus.

MACMAME: is part of the MAME project, a non-profit organization dedicated to preserving the history of arcade videogames via emulation. MacMAME achieves this by running the original program code found in the arcade games. As such, it is much more than a reproduction, it is essentially that same game running via an emulated layer inside your Macintosh. On this site you’ll find the most current build of MacMAME, information about upcoming versions and instructions for using it. Please look around and enjoy reliving some of the games that made going to video arcades an enjoyable part of our pop culture. You can move to various parts of the site by clicking on the headings to the left.

Video Game Archive for ROM images to download and play with MAME

Original Xbox Retrospective: 2001 – 2002 (PART 1)