ARMORPAINT

ARTIFICIAL INTELLIGENCE (AI)

ARTIFICIAL NEURAL NETWORK

AUDIO

AUTODESK

AWARDS

ARMORPAINT

PBR Texture Painting | ArmorPaint is a stand-alone software designed for physically-based texture painting. Drag & drop your 3D models and start painting. Receive instant visual feedback in the viewport as you paint.

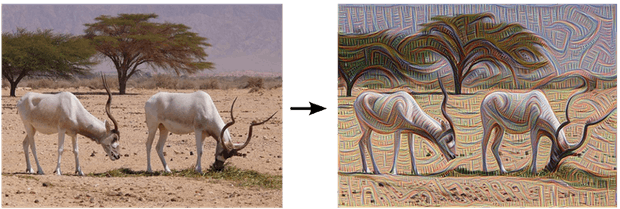

Armor Paint and Texture Synthesis

ARTIFICIAL INTELLIGENCE (AI) and Machine Learning

ChatGPT List of Lists: A collection of 3000+ prompts, examples, use-cases, tools, APIs, extensions, fails and other resources.

AI VOICE

- Is the Anthony Bourdain AI Voice in ‘Roadrunner’ an Ethical Lapse? Maybe So, but Documentaries Have Been Sliding Away From Reality for Years (Column)

- Documentary ‘Roadrunner’ Is Less A Portrait Of Anthony Bourdain And More A Petty Tell-All

- Anthony Bourdain’s AI-Faked Voice in New Documentary Sparks Backlash

- A Haunting New Documentary About Anthony Bourdain

- AI voice actors sound more human than ever—and they’re ready to hire

AI Answer Engines Are Worth Trying

RenderRam – An AI Tool That’s Actually Helpful

How To Manually Identify AI-Generated Text Without Using A ChatGPT Detector

AI VOICE GENERATOR

AI and The Trough of Despair

Nuno Silva – Using AI in Architecture Design For Best Result

Why your next computer may be a lab-grown brain

How to Identify Good Uses for Generative AI Chatbots and Artbots

Creating Video from Text

Text-to-Video

Clones, cheats, and ChatGPT – How the AI revolution is reshaping education

Tyler Perry Puts $800M Studio Expansion on Hold After Seeing OpenAI’s Sora: “Jobs Are Going to Be Lost”

George Carlin Estate Settles Lawsuit Over AI-Generated Comedy Special

How AI rendering is revolutionizing architecture design

Exploring the use cases and applications of AI in the media and entertainment industry

Prompt on prompts: Creative studio talks AI

AI Tools: Runway AI World Jump Videos

Studios’ Now-or-Never Choice: Sue AI Companies or Score a Major IP Deal

MediaCentral – Fostering Creativity Through Artificial Intelligence

fxpodcast #361: A Deep Dive into the New Laws around Governing AI

Generative AI can free up creative possibilities

Microsoft is bringing AI characters to Xbox

‘We need cool heads’: AI hype cycle leads discussion at GENR8

AI Tools Part 3: The Current State of Generative AI Tools

AI-Eye Contact killed the teleprompter? Almost!

Is AI video about to creep out of the uncanny valley?

The Future of AI in Video: a look forward

From muse to machine: How might AI realistically change the creative process

NerdyRodent – DragGAN AI: Interactive Point-based Editing Tool

How AI is transforming Hollywood, and why it’s at the center of contract negotiations

Exploring Cleancut AI’s Auto Editing Plugin for Video Footage

Vision Setting and Problem Solving: AI in Architecture Is Changing Design

Generative AI and The Future of Filmmaking

Holding onto the magic of human storytelling in the AI era

AI and journalism — get up to speed

Adobe’s AI Generator Firefly Looks To Reimagine Video and Audio Workflows

AI Tools Part 2: A Deeper Dive

If there’s one thing AI has done for us, it’s killed Zuck’s metaverse

‘The Music Industry Is Forever changed’ – Artists Use AI To Clone Drake & The Weeknd’s Voices

THE BUSINESS OF FILM EXPLAINED III : HOW THE WRITERS STRIKE AND A.I AFFECTS FILMMAKERS WITH STEPHEN FOLLOWS, GILES ALDERSON, DOM LENOIR AND PHIL HAWKINS

AI Tools: The List You Need Now

Making Intelligent Use of AI

Live Action to Anime with Corridor Digital

Artists Use AI to turn the Simpsons into Real-Live Humans

GENERATIVE AI IN MEDIA

What’s on the horizon for machine learning in 2023?

How will AI impact filmmakers and other creative professionals? – A PVC Roundtable Discussion

Harnessing the Power of AI Art

The Architects Designing Surreal Worlds with AI

Tapping The Gold, Vein Of Content, With AI and ML

This tool uses machine learning to animate 3D models on-the-fly, and it’s getting Unreal Engine support soon

Artificial Intelligence in Video Production

The Generative AI Revolution in Games

Intelligence Briefing: AI in Film with Dr Mike Seymour

AI Generative Fashion Videos

10 Best AI Art Generators & Tools in 2023

5 Ways Artificial Intelligence Will Revolutionize Game Development

Unleashing the Power of the Artist with Machine Learning

Imagen is an AI system that creates photorealistic images from input text

Unfold the Future of VFX with Machine Learning and AI Automation

Watch This: How Intelligent Content Advances Creativity

Top trends to come out of FMX 2022

A journey in machine learning, with Foundry’s Johanna Barbier

Harnessing the power of analysis, augmentation, and automation: Autodesk AI helps artists focus on their art

4pixos Academy – Stable Diffusion with Controlnet

Stable Diffusion is a deep learning text-to-image model released in 2022 for converting text into images based on diffusion techniques. It is primarily used to generate detailed images based on text descriptions, but can also be used for other tasks such as inpainting, outpainting, and the generation of image-to-image translations based on a text prompt. It was developed by researchers from the CompVis Group at Ludwig-Maximilians-Universitaet Muenchen and Runway with the help of a computational donation from Stability AI and training data from non-profit organizations.

A.I. Upscaling software shootout

Upscaling or “uprezzing” is something most editors do every day without thinking much about it. Drop a 720p clip into your HD 1080p timeline, scale it up to fill the screen and move on. The issue is that simply scaling the clip looks soft. It might fail QC on a broadcast show or it might draw the ire of your client (even if they were the one who provided it!). If you have an eye for quality, you will not be happy to include lower resolution clips in your edit.
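To see why plain scaling looks soft, here is a minimal Python sketch (not taken from the article) that stretches one row of pixel values by the 720p-to-1080p factor of 1.5 using linear interpolation. The function name `upscale_row` and the sample values are illustrative assumptions; real editing software works on full 2D frames and typically uses more elaborate filters.

```python
def upscale_row(row, new_len):
    """Linearly interpolate a 1D row of pixel values to new_len samples."""
    old_len = len(row)
    if new_len < 2 or old_len < 2:
        raise ValueError("need at least two samples on both sides")
    out = []
    for i in range(new_len):
        # Map the output index back to a fractional position in the source row.
        pos = i * (old_len - 1) / (new_len - 1)
        left = int(pos)
        right = min(left + 1, old_len - 1)
        frac = pos - left
        # Blend the two nearest source pixels.
        out.append(row[left] * (1 - frac) + row[right] * frac)
    return out


scale = 1080 / 720           # 720p to 1080p is a 1.5x enlargement
edge = [0, 0, 255, 255]      # illustrative hard black-to-white edge
soft = upscale_row(edge, int(len(edge) * scale))
```

The source row jumps from black to white in a single step, while the upscaled row contains intermediate grey values across the edge. That smearing of edges is the softness the excerpt describes, and it is what dedicated upscalers try to avoid.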

Discover How Perfect Biscuits Are Made Using AI Technology

Can artificial intelligence cook and prepare the food we eat every day? Well, you may be surprised to learn that AI is already cooking and preparing food in specialized smart factories. Today we look at a cookie factory that’s using state of the art AI computing to analyse their cookie production in real time, to make sure they produce perfect cookies.

Canny AI: Imagine world leaders singing

Deep Learning is really starting to establish itself as a major new tool in visual effects. Currently the tools are still in their infancy but they are changing the way visual effects can be approached. Instead of a pipeline consisting of modelling, texturing, lighting and rendering, these new approaches are hallucinating or plausibly creating imagery that is based on training data sets.

‘We still need humans to generate those stories’: How AI could influence screenwriting

Robots may have a long history of starring in movies but there is still some way to go before they make their presence felt as scriptwriters, according to researchers.

At next week’s Screen Forever conference, Associate Professor in Applied Mathematics at The University of Adelaide Dr Lewis Mitchell will share his findings that show the potential of AI to be used in the development process of scripts to tell original stories and predict box office success.

Microsoft has patented a chatbot that could imitate a deceased loved one, celebrity, or fictional character.

Microsoft has been issued a sci-fi-sounding patent for a chatbot that could take on another person’s personality and likeness.

TEST DRIVE A VIDEO CHAT

The COVID-19 pandemic has pushed companies to accelerate advances in video conferencing and the use of AI and machine learning. Two companies at the forefront of these advances are Pinscreen and NVIDIA. Both are using advanced Machine Learning and Generative Adversarial Networks (GANs) to advance what is possible while approaching the problem from very different points of view.

100,000 Faces Generated by AI Free to Download

We are democratizing creative photography and video. We aim to make creative works both more accessible and higher quality through generative processes. We are a team of about 20 AI and photography professionals.

There are massive amounts of data in the world that can be mined for unique information, searched, catalogued and analysed. The delivery of advertising is changing as a result of such analysis, and with our search data this type of technology can and will absolutely change the way we do production.

Video Breakdown: Video Indexer builds upon media AI technologies to make it easier to extract insights from videos. Power new forms of content discovery such as searching for spoken words, faces, characters, and emotions. Enrich your apps with embedded video insights to drive user engagement.

Digital Domain Previews New Autonomous Digital Human, ‘Douglas’

LOS ANGELES, Calif. – November 19, 2020 – Today, Digital Domain announces “Douglas,” the most realistic real-time autonomous digital human in the world. Currently in development, Douglas was designed to break down the barriers in human-to-machine interactions, yielding conversations that feel natural and easy. With unmatched facial realism, Douglas is chameleon-like in its ability to switch faces, providing future customers with even more flexibility when it hits the market in 2021.

Disney’s deepfakes are getting closer to a big-screen debut

How close are deepfakes to being used in big-budget films and TV shows? Pretty damn close, if a new demo from Disney is anything to go by. In a video and paper being presented at a computer graphics conference this week, researchers from the House of Mouse show off what they say is the first photo-realistic deepfake at a megapixel resolution.

RetrieveGAN AI tool combines scene fragments to create new images

Researchers at Google, the University of California at Merced, and Yonsei University developed an AI system — RetrieveGAN — that takes scene descriptions and learns to select compatible patches from other images to create entirely new images. They claim it could be beneficial for certain kinds of media and image editing, particularly in domains where artists combine two or more images to capture each’s most appealing elements.

ANDY LOMAS — ARTIST AND LECTURER IN CREATIVE COMPUTING

Andy Lomas has always been fascinated with math and filmmaking. On graduating from Cambridge he founded a video production company, then worked on VFX for movies including The Matrix Reloaded and The Matrix Revolutions. At the same time, he explored his passion for CG art, making use of render farms in their downtime.

In this podcast, Andy goes into detail on his artwork and how he uses technology, including deep learning and NVIDIA’s OptiX API, to create unruly but controllable plantlike and cellular forms. He talks about how he brings together influences from nature, physics and biology, as well as his work on Katana at The Foundry and his current lecturing post at Goldsmiths, University of London.

GLENN DERRY — VIRTUAL PRODUCTION EXPERT

Glenn Derry began his career in practical effects, creating advanced puppets for movies, including A.I. Artificial Intelligence. As CG took over filmmaking, he transferred his skills into visual effects via films such as The Aviator, Real Steel, The Jungle Book and Avatar — for which he coined the phrase “virtual production.”

In this discussion with Chris, Glenn talks about the rise of virtual production in filmmaking and his role in making it happen, as well as how real-time and rear-projection, combined with the COVID-19 pandemic, have the potential to transform the movie-making process. He also talks about Technoprops, the company he set up to create bespoke motion-capture systems, and how he’s introducing a new generation of directors to old-school filmmaking techniques.

Future proof your content creation with machine learning

Machine Learning (ML) is impacting VFX and other industries in a number of different ways. More recently, one of the areas in which it has started to come to fruition is content creation. The recent progress of deep learning techniques allows hours of manual and laborious content creation work to be done in minutes. This isn’t just true for the VFX industry but in applications such as gaming, Virtual Reality (VR), advertising and even retail.

Written And Directed By: Artificial Intelligence

As impossible as it seems, it won’t be long before artificial intelligence is writing and creating films. As a lead up to this eventuality, here is a list of just some of the creative endeavors that AI has already accomplished.

MACHINE LEARNING FOR ARTISTS | The Foundry

Machine learning (ML) is one of the hot topics in the world of visual effects (VFX) at the moment. Over the past few years, we’ve seen the impact it’s started to have on the VFX industry and the technology that has emerged because of it. In fact, it almost seems like there is a new ML tool or program being released every other week, all promising to improve the way artists work, create and collaborate. So, amongst the many, what are the key upcoming tools that you, as artists, should watch out for?

Edward Saatchi on virtual beings: AI is the next great art form

Edward Saatchi, the CEO of Fable Studio and maker of Emmy-winning virtual reality experiences, participated in a thoughtful conversation about “virtual beings” at our recent GamesBeat Summit 2020 event.

I called the session “We are who we pretend to be,” after the moral of the story in one of my favorite novels, Kurt Vonnegut’s Mother Night. The novel is about an American spy in World War II who does too good a job at his cover role of being a Nazi propagandist. The moral is: “We are who we pretend to be, so we must be careful about what we pretend to be.”

Are you ready for the AI business revolution?

Ask people what they think about the rise of artificial intelligence (AI) and there’s every possibility they’ll conjure fearful images of Arnie in his infamous cyborg role.

When it comes to what AI means for Australia’s businesses, however, the reality is far less threatening… and much more of an opportunity.

Outside the realm of Hollywood, AI isn’t the threat of self-aware machines replacing humans. Instead, it’s the rise of a supercharged tool helping real people – real business people – make sense of big data so they can make smarter business decisions. The dramatic aspects come in both macro form – its impact on the global economy – and the more personal: allowing SMEs to take on even the largest competitor.

ARTIFICIAL INTELLIGENCE IN THE ENTERTAINMENT INDUSTRY

The advent of artificial intelligence (AI) in media and entertainment will help uncover innovative ways of creating and sharing content. Artificial intelligence has always been a part of pop culture due to numerous sci-fi movies like Avengers: Age of Ultron, The Terminator, and I, Robot. But what if artificial intelligence can be used in producing a movie? Artificial intelligence has already shown its potential in the music industry by creating beautiful compositions. The world of art has also witnessed the creative mind of AI. Now, the advent of AI in media and entertainment will transform how audiences experience movies, TV shows, videos, games, and sports. Research suggests that media industry professionals believe that AI is the next industrial revolution. Media professionals have realized that AI will improve productivity by automating multiple repetitive tasks and offer a competitive advantage. AI-based automation can help entertainers and content creators spend more time on their craft and deliver engaging content. AI will also help production houses make informed decisions about marketing and advertising by analyzing critical data.

Still in its Infancy, AI Can Do Good or Bad or Both

We just don’t get much satisfaction out of an answer being handed to us. The best things we’ve learned are the ones we had to work out ourselves…think, sweat, swear, squirm, try, stumble and suddenly BAM! solved. Actually, we prefer it when you go through all of that trial and error and we learn from our mistakes. Personal struggle to a defined end is better, and often the result wasn’t even the answer we thought we were aiming for but turned out even better. Even though Amazon is eyebrow deep in developing/refining/using AI, Bezos admitted in his recent shareholder letter that the company would make a number of costly mistakes going forward because that’s what people do to succeed.

Artificial Intelligence: Friend or Foe?

In the Academy Award-winning film “Ex Machina,” audiences are drawn into a trusting relationship between Ava, an artificial intelligence (AI)-powered robot, and its creators, Caleb and Nathan. That is, until Ava murders Nathan and leaves Caleb trapped in the laboratory while she flies away in a waiting helicopter to enjoy a life outside of walled confines.

Style-based GANs – Generating and Tuning Realistic Artificial Faces

By Rani Horev. Generative Adversarial Networks (GANs) are a relatively new concept in Machine Learning, introduced for the first time in 2014. Their goal is to synthesize artificial samples, such as images, that are indistinguishable from authentic images. A common example of a GAN application is to generate artificial face images by learning from a dataset of celebrity faces. While GAN images became more realistic over time, one of their main challenges is controlling their output, i.e. changing specific features such as pose, face shape and hair style in an image of a face.

THIS PERSON DOES NOT EXIST

How data analysis is improving matchmoving

In filmmaking, complex techniques are driven by complex ambitions. This is particularly true of matchmoving, which allows computer generated imagery (CGI) to be inserted into live-action footage while keeping scale and motion correct.

AI FOR MEDIA AND ENTERTAINMENT | NVIDIA

Thomas Middleditch Let an AI Steal His Face to Make a New Movie

Behind the scenes with the actor Thomas Middleditch who teamed up with an artificial intelligence program called Benjamin to create “Zone Out,” an entirely AI-made sci-fi film.

Would you buy shoes designed by a computer?

Dries van Noten is a household name in fashion circles and has been a mainstay on the Paris ready-to-wear circuit for many years. His high-end collections mean you’ll only find pieces in luxury boutiques or department stores like Bergdorf’s, Liberty and Selfridges, but there is a subtle drama which has awed me for the longest time, back to my fashion school days: the unexpected way his designers blend prints, the delicate combination of machine and hand embroidery, reversible bomber jackets with silk linings. Each piece is so meticulously unique from the next that it seems only the collective characters, from the material designer through to the pattern cutter and sewing machinist, could have collaboratively defined the vision.

ARTIFICIAL INTELLIGENCE IN MEDIA

Artificial Intelligence, A.I., is a hot topic in many industries, with Media and Entertainment poised to embrace more intelligent data management and automation in the coming years. Media companies have led the explosive growth of big data technologies because it enabled them to drive the digital revolution, exploiting more fully not only data which was already available but also new sources of data such as content from social media sources and user generated content. Media companies are increasingly looking to generate revenue from delivering content and data through diverse platforms and products. Increased and better integration of solutions along the data/content value chain will be fundamental in order to convince decision-makers to invest in new technologies such as Artificial Intelligence.

Artificial Intelligence In Education: Don’t Ignore It, Harness It!

“Human plus machine isn’t the future, it’s the present,” Garry Kasparov said in a recent TED talk.

And this “present” is transforming the world of education at a rapid pace. With children increasingly using tablets and coding becoming part of national curricula around the world, technology is becoming an integral part of classrooms, just like chalk and blackboards.

And now virtual reality (VR) and artificial intelligence (AI) are gaining traction. A recent report by Pearson deciphers how artificial intelligence will positively transform education in the coming years. Per the report’s authors, “The future offers the potential of even greater tools and supports. Imagine lifelong learning companions powered by AI that can accompany and support individual learners throughout their studies – in and beyond school – or new forms of assessment that measure learning while it is taking place, shaping the learning experience in real time.”

Artificial Intelligence Comes to Hollywood By Debra Kaufman / April 18, 2017

Is Your Job Safe?

Last September, when the 20th Century Fox sci-fi thriller Morgan premiered, artificial intelligence (AI) took center stage for the first time not as a plot point but a tool. The film studio revealed that it had used IBM’s Watson — a supercomputer endowed with AI capabilities — to make the movie’s trailer. IBM research scientists “taught” Watson about horror movie trailers by feeding it 100 such trailers, cut into scenes. Watson then analyzed the data, from the point of view of visuals, audio and emotions, to “learn” what makes a horror trailer scary. Then the scientists fed in the entire 90-minute Morgan. According to Engadget, Watson “instantly zeroed in on 10 scenes totaling six minutes of footage

Survey: Broadcast Pros Set Sights On AI, Hybrid Storage

Cloudian conducted in-person interviews with more than 300 broadcast professionals at the NAB Show. SAN MATEO, CA.—Broadcast professionals responding to a survey at last month’s NAB Show foresee increasing reliance on AI and machine learning as well as hybrid cloud storage and a falloff on their reliance on tape-based storage in their future.

Deep learning: the new frontier in visual effects production

Artificial intelligence (AI) and deep learning technologies are disrupting our world in unprecedented ways. From transport and infrastructure, to marketing and fintech; self-learning machines are increasingly being deployed to challenge the status quo.

ARTIFICIAL NEURAL NETWORK

AI ENGINE ARRANGES & EDITS FILM

MAKROPOL is an XR production studio, focused on cinematic storytelling using immersive media. We are currently in development with 10+ immersive narratives, envisioned by professional film directors, artist and performance groups. Our goal is to be the leading XR studio in the Nordics and lead the way for a sustainable cinematic XR industry.

Reflector uses an autonomous AI engine to arrange and edit film in continuous real time. The artists are using their Reflector installation to question the potential role of machines in film production and the future of film as an art form. It will be interesting to see the results.

The State of Video and AI 2018

The machines aren’t taking over; they’re just helping video publishers achieve their goals more efficiently and effectively. Video AI (artificial intelligence) has the capacity to solve a number of time-consuming, video-related problems with automation. But that doesn’t mean it has magical powers that will exclude human control. To offer a sense of where video AI is in early 2018, what follows are a number of real-life examples in which AI is helping to add structure to the unstructured world of video.

Is there a market for AI art? This $600k painting suggests there is

We know AI can learn to write like Shakespeare and compose pop songs — and now, an original painting generated by AI has sold for more than 38 times its expected price. In a world-first event in New York last week, Portrait of Edmond de Belamy — a blurry picture of a man in a dark frock coat — was auctioned off by Christie’s for $610,000.

Artificial neural networks (ANNs) or connectionist systems are computing systems inspired by the biological neural networks that constitute animal brains. Such systems learn (progressively improve performance) to do tasks by considering examples, generally without task-specific programming. For example, in image recognition, they might learn to identify images that contain cats by analyzing example images that have been manually labeled as “cat” or “no cat” and using the analytic results to identify cats in other images. They have found most use in applications difficult to express in a traditional computer algorithm using rule-based programming.
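As a rough illustration of learning from labeled examples without task-specific rules, the sketch below trains a single artificial neuron (a perceptron) in plain Python. It is a toy under stated assumptions: each "image" is reduced to two made-up feature numbers, the labels are 1 for "cat" and 0 for "no cat", and the names `train_perceptron` and `predict` are illustrative. Real image recognition networks use many layers and far richer inputs.

```python
def train_perceptron(samples, labels, epochs=20, lr=0.1):
    """Fit weights and a bias to labeled examples with the perceptron rule."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in zip(samples, labels):
            activation = sum(w * xi for w, xi in zip(weights, x)) + bias
            predicted = 1 if activation > 0 else 0
            # error is 0 when the guess was right, otherwise +1 or -1.
            error = target - predicted
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias


def predict(weights, bias, x):
    """Return 1 ("cat") or 0 ("no cat") for a feature vector."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else 0


# Hypothetical two-number features per image; 1 = "cat", 0 = "no cat".
samples = [(0.9, 0.8), (0.8, 0.9), (0.1, 0.2), (0.2, 0.1)]
labels = [1, 1, 0, 0]
weights, bias = train_perceptron(samples, labels)
```

Nothing in the code says what a cat looks like. The weights are nudged only when a labeled example is misclassified, and the fitted neuron can then be applied to feature vectors it has not seen, for instance `predict(weights, bias, (0.85, 0.85))`.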

A Basic Introduction To Neural Networks. The simplest definition of a neural network, more properly referred to as an ‘artificial’ neural network (ANN), is provided by the inventor of one of the first neurocomputers, Dr. Robert Hecht-Nielsen. He defines a neural network as:

“…a computing system made up of a number of simple, highly interconnected processing elements, which process information by their dynamic state response to external inputs.”

In “Neural Network Primer: Part I” by Maureen Caudill, AI Expert, Feb. 1989

Artificial Neural Networks have spurred remarkable recent progress in image classification and speech recognition. But even though these are very useful tools based on well-known mathematical methods, we actually understand surprisingly little of why certain models work and others don’t. So let’s take a look at some simple techniques for peeking inside these networks.

Yes, androids do dream of electric sheep. Google sets up feedback loop in its image recognition neural network – which looks for patterns in pictures – creating hallucinatory images of animals, buildings and landscapes which veer from beautiful to terrifying

How Image Recognition Works. Interpreting the visual world is one of those things that’s so easy for humans we’re hardly even conscious we’re doing it. When we see something, whether it’s a car, or a tree, or our grandma, we don’t (usually) have to consciously study it before we can tell what it is. For a computer, however, identifying a human being at all (as opposed to a dog or a chair or a clock, let alone your grandmother) represents an amazingly difficult problem.

Inceptionism: Going deeper into Neural Networks. Google Photos.

Journey through the layers of the mind

AUDIO

AUTODESK

AREA

Online community for the fields of animation, games, film and tv, design visualisation and advertising

Best of AU 2020 Speaker Awards

Great speakers are an essential part of the AU learning experience. They inform and inspire, helping others discover new possibilities on their professional journey. AU wouldn’t be AU without them. We’re excited to present the classes and speakers that took top honors at AU 2020, our first global digital event attended by more than 100,000 professionals.

Media & Entertainment 2020 Sessions

Modern animation and VFX production aren’t just pushing new boundaries for media creators. They’re reshaping how stories are told across design industries. Learn how.

Max Man & Maya Man

How Autodesk and NVIDIA are blazing AI trails together

We’ve been working with NVIDIA for more than 15 years, a long-time collaboration between technology companies. NVIDIA has been busy hosting their GPU Technology Conference (GTC) and we’ve been reflecting on the most exciting aspects of our work together. Here’s a quick look at what’s happening now and some of our future predictions because it’s definitely an exciting time to be in tech.

HOW TO START THE AUTODESK NETWORK LICENSE MANAGER AUTOMATICALLY ON MACOS X

Autodesk Network License Manager is used for multi-user licenses for Autodesk products such as Flame, Maya and Arnold. When installed on macOS X, the license manager does not start automatically on boot by default, which is most likely undesirable. Searching the Autodesk Knowledge Base reveals two methods. Method #1 only works for OS X 10.10 (Yosemite) and earlier. Method #2 adds a .profile that is run whenever Terminal opens. This works, but it is not very elegant, especially if you use Terminal a lot.

Autodesk Acquires Assets of REALVIZ

FROYOK

Autodesk has joined 3D open standards body the Khronos Group.

The firm will join Khronos Group’s 3D Commerce Exploratory Group and its 3D Formats Working Group, where it will “support the … glTF file format”

Autodesk University – Learn On-Line

Autodesk University Las Vegas was just the beginning

Explore hundreds of new sessions from AU Las Vegas 2019, including class recordings, useful downloads and much more.

Create 3d Characters: MAYA: GENERALIST INTERMEDIATE

University: Las Vegas 2015 sessions

Maya Monday – Render Passes in Maya 2016

3ds Max 2016 Quick Tips: Template System – Customize an existing Template

Autodesk 123D is free software integrated with content and fabrication services. For people who want to make things themselves.

AREA NEWSLETTER

Supercharged Animation Performance in Maya 2016

ALIAS, Autodesk

AREA TV

AUTODESK AREA NEWSLETTER

The MCG Network Deployment Guide

VR Magic and Horror in Left-Hand Path

Strange Company used Autodesk 3ds Max, Mudbox, MotionBuilder and the Unity engine to create the VR RPG Left-Hand Path. Left-Hand Path is the VR RPG game you’ve been waiting for, that is, if you’re brave enough… Heavily inspired by the Dark Souls series, Left-Hand Path places you in a fantastic landscape, leaving you to fight terrifying (and difficult!) monsters while learning magic. We caught up with Hugh Hancock to get behind the scenes of this horrifying VR experience.

Selectives in Action

This series covers the new Selectives features, which let you perform image isolations quickly and efficiently. Uses include look development, VFX enhancement, beauty work and motion graphics.

CAN .MAX FILES BE CONVERTED TO OBJ?

There is some confusion around this:

- 3ds Max can convert to any format

- Maya can open a .3ds file; conversion to .obj or .fbx can only be done out of Max

- Scenes can be sent directly between Max and Maya via SendTo, but Maya cannot import or open a .max file

- Maya has an option to import files in non-native formats

- Max can save .fbx files that Maya can read, but all texture connections are lost and need to be reconnected

- Newer versions of Maya will recognise .max files

Discover the World of Maya: A Showcase of Student Projects

Autodesk M&E Webinars Watch webinar recordings

ALIAS

Product design software for sketching, concept modeling, surfacing, visualization

BACKBURNER

Autodesk Backburner is a free software application packaged with 3DS Max, Flame, Maya and a range of other Autodesk products. … In setups with multiple networked machines, Backburner lets you render images more quickly and efficiently by breaking the job into smaller parts.
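The idea of breaking a render job into smaller parts can be sketched in a few lines of Python. This is not Backburner's actual scheduling logic, which the description above does not detail; it is a minimal illustration that assumes a job is an inclusive frame range shared evenly among a number of render machines. The function name `split_frames` is hypothetical.

```python
def split_frames(first, last, workers):
    """Divide an inclusive frame range into near-equal contiguous chunks."""
    if workers < 1 or last < first:
        raise ValueError("need at least one worker and a non-empty range")
    total = last - first + 1
    base, extra = divmod(total, workers)
    chunks = []
    start = first
    for i in range(workers):
        # The first `extra` workers each take one additional frame.
        size = base + (1 if i < extra else 0)
        if size == 0:
            break  # more workers than frames; the rest stay idle
        chunks.append((start, start + size - 1))
        start += size
    return chunks


# Illustrative job: frames 1 to 100 shared across three machines.
chunks = split_frames(1, 100, 3)
```

Each tuple is a start and end frame that one machine could render independently of the others, which is why adding networked machines shortens the overall render time.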

FLAME and FLAME ASSIST

Joel Osis

Flame is high-end 3D visual effects software that offers tools for fast, interactive 3D visual effects, finishing, compositing, advanced graphics, colour grading, conform, editorial, and look development. Featuring the Flame Desktop, a multi-layer timeline, conform, media management, and interactive performance, Flame provides the tools you need in an integrated creative environment.

The Flame Learning Channel

FLAME USER GUIDE

FLAME DOWNLOAD

What’s New in Flame 2023

Camera Analysis Manual Track and Motion Vectors Quality (What’s New in 2022.1)

Meet Flame 2021

I’m excited to announce that Flame 2021 is here, bringing even more speed and flexibility to compositing, color grading, and finishing workflows. Building on the machine learning-powered features first introduced in Flame 2020, this update adds more uses of AI – this time for isolating features on the human face. By popular demand, we’ve also added a new GPU-accelerated Physical Defocus effect as well as a slew of finishing enhancements to make it easier to adjust looks across many shots, share updates with clients, and create beautiful work, fast. Those of you doing finishing work for streaming services will also now enjoy powerful new workflows for certified Dolby Vision HDR authoring and display.

Autodesk Flame Continues AI Integration for Colour, Finishing and VFX

The Autodesk Flame Family 2020.1 update continues to extend the machine learning-powered tools developed for Flame 2020. These include the Z Depth Map Generator and Human Face Normal Map Generator announced at NAB 2019. Released for Flame, Flare and Flame Assist, the 2020.1 update adds more ways to isolate and modify common objects in moving footage for colour grading and compositing, and includes a semantic keyer for sky extraction. For example, users can isolate skies, remove clouds using depth extraction and add treatments to sky backgrounds.

VENICE, CA — Out of House VFX (http://outofhousevfx.com) is a visual effects and finishing studio that works on both commercials and feature films. Long-time Autodesk Flame user/VFX artist David Stern recently took some time to share his insight into the post production business and the shifts he’s witnessed in technology.

Treehouse Edit Gets an Edge on Finishing with Autodesk Flame

Treehouse Edit post production studio runs a small but tight operation in Dallas, Texas with a talented team of ten artists – editors, motion graphics artists and finishing artists. They have attracted clients such as the Richards Group, the largest independent branding agency in the US, and landed commercial jobs for Russell Athletic, Toyota, 7 Up and others. Included on the team are editor Peter Tarter, who launched the company in 2012, and Autodesk Flame specialists Bryan Bayley and John Fegan, among others.

5 reasons why Coffee & TV use Flame as their hub. As small post companies get bigger, and big companies wonder what’s coming next, Derek Moore gives us 5 reasons why Coffee & TV use Autodesk Flame as the central hub of their Soho facility.

FLARE

Expand your digital compositing capabilities with Flare. Exclusively available to Flame and Flame Premium customers, Flare includes tools for 3D compositing, tracking, text, masking, keying, and relighting. Flare is included in Flame Premium.

LUSTRE

A high-performance, real-time color grading solution for creative look development. Lustre gives artists greater creative control over final color and grading for television and film projects. Interoperable with Flame, Lustre is included in Flame Premium.

Lustre is a color grading software developed by Autodesk. It runs on Autodesk Systems certified hardware, as Flame Premium, Flame and Smoke Advanced. It is part of the Flame Premium package. Lustre was originally a 5D product called Colossus, developed by Colorfront. After the demise of 5D in 2002, Autodesk acquired the license to distribute the Lustre software, and later acquired Colorfront entirely.

Lustre originated as a plugin for Autodesk’s Flame product under the name “Colorstar” to emulate film type color grading using printer lights controls. It was then developed as a standalone software. It was introduced under the Colossus name in private demonstrations at IBC show in Amsterdam in 2001. Alpha and beta testing were held at Eclair Laboratoires in Paris. During the trials, Colossus was running on the Windows XP operating system, but the same code base was also used on the IRIX operating system.

The original creators of Lustre are Mark and Aron Jaszberenyi, Gyula Priskin, Tamas Perlaki, Gabor Forgacs, Ferenc Bechtold.

Lustre is now integrated in the Flame Premium package.

MARI

Lubitel Camera 166 – Texturing in Mari

MAYA

Maya uses a node-based architecture that allows full animation control of all aspects of a scene. Menu sets change with the user’s selection of Modelling, Rigging, Animation, FX or Rendering. Modelling with NURBS and polygon tools. Artisan brush interface for sculpting and for painting textures and attributes onto NURBS and polygons. Non-linear editor, inverse kinematics, character skinning, advanced deformation tools and general keyframing tools. Paint Effects for 3D scenes. Fully integrated particle system with rigid and soft body dynamics, film-quality rendering and advanced visual effects. Open interface for customisation and scripting.

The World’s Largest Marketplace of Motion Assets, Now in Maya

Imagine being able to drag and drop an animation file created by the motion capture studio behind Star Wars: The Last Jedi right into your Maya project. Well, that’s now possible… At GDC 2019, we announced that we were partnering with Rokoko, the company behind the world’s largest marketplace of motion assets to develop a plugin exclusively for Maya. And, in under a year, we’ve done just that! Maya 2020.1 adds a native plugin for the Motion Library, making it easier for you to access high-quality motion capture data – right in Maya.

TOOLFARM With modeling, animation and motion graphics workflow enhancements and new features, Maya 2018 is now faster and more efficient than ever.

Autodesk Maya Help 2015: Edge Ring and Edge Loop Selection Tips

Autodesk Maya University videos

Possible to change words of text object in Maya?

A Maya Fluid Dynamics Quicksheet: Even though this was written for Maya 7, it should still apply to current versions of Maya.

MICHAEL COMET I am currently a Character Modeling/Rigging Supervisor at Pixar Animation Studios. I started at Pixar around 2006 and have worked on films such as Up, Brave and The Good Dinosaur. Check out his tutorials, Maya MEL Scripts and Maya Plug-ins

MAYA 3D TUTORIALS FOR BEGINNERS, INTERMEDIATE AND ADVANCED USERS

What is the difference between parenting and grouping in maya?

MAKING OF DAENERYS TARGARYEN BY DANIELE LA MURA

Brutalist Office: Building an Environment in Maya From a Concept Art

Harnessing the power of analysis, augmentation, and automation: Autodesk AI helps artists focus on their art

Tips, tricks and tutorials on Autodesk maya, mental ray and more. With this blog we want to share the big or small discoveries we make during our everyday work.

FROYOK

Maya – Transferring UVs from “repair” object to animated/rigged original

If you use the standard method of selecting the objects and going to Mesh -> Transfer Attributes, the result is a matching set of UVs that are tied to the source object and applied after deformation and skinning, meaning they will slide when the mesh deforms. There is, however, a solution: transfer them to the Original mesh node, which is hidden by default. Once this has been done, you can safely delete the history on the Original node, which severs its dependency on the source object and applies the UVs before any deformation, preventing sliding.

Animal Logic launches USDMAYA Plugin as Open Source

Sydney, Australia, 2 August – Animal Logic today announced the open source release of its USDMaya plugin (known as “AL_USDMaya”). The plugin enables powerful and efficient authoring and editing of 3D graphics data using Pixar’s Universal Scene Description (USD) in Autodesk Maya.

FROYOK: [maya] froRetopo 3.5: a fast and easy retopology tool (June 24th, 2013)

For this project I wanted to learn more about Xgen in Maya. I was curious to try it since Autodesk announced it in the 2014 release and this seemed the right chance to give it a try!

Autodesk Webinar: Entertainment Creation Suite 2014

Rooster Teeth Artists, Gio Coutinho and Shrivas Shyamsundar share some Maya tips and techniques they use for the hit web series, RWBY.

SOME GENERAL INFO THAT I HAVE READ ALONG THE WAY

To modify the pivot position, use the INSERT key on Windows or the HOME key on Mac.

To select multiple attributes, use the CTRL key on Windows or the COMMAND key on Mac.

Procedural architecture: a dependency graph of nodes with attributes that are connected.

Corrupted Scene – Forum

Consider saving an empty scene out of Maya (also as a .ma file) and then open this file – along with your ‘broken’ one in a text editor, and copy/paste your scene data over in small chunks – till you find the point at which the file won’t load anymore.
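The chunk-by-chunk approach above can be sped up with a binary search. The sketch below is a minimal illustration under stated assumptions: it treats every line ending in `;` as the end of a Maya ASCII statement (which may mis-split unusual multi-line string values), and it relies on a caller-supplied check `loads_ok`, a hypothetical function that reports whether a piece of scene text opens in Maya, for example by saving it to a temporary .ma file and trying to open it. Both function names are made up for this example.

```python
def split_statements(ma_text):
    """Split Maya ASCII text into statements.

    Assumes each statement ends with ';' at the end of a line.
    """
    statements, current = [], []
    for line in ma_text.splitlines():
        current.append(line)
        if line.rstrip().endswith(";"):
            statements.append("\n".join(current))
            current = []
    if current:  # trailing text without a closing ';'
        statements.append("\n".join(current))
    return statements


def first_bad_statement(statements, loads_ok):
    """Return the index of the first statement that breaks loading, or None.

    loads_ok(text) -> bool is a hypothetical caller-supplied check.
    Assumes that once a prefix fails to load, every longer prefix fails too.
    """
    if not statements or loads_ok("\n".join(statements)):
        return None
    lo, hi = 0, len(statements)  # prefix of length lo loads, length hi fails
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if loads_ok("\n".join(statements[:mid])):
            lo = mid
        else:
            hi = mid
    return hi - 1
```

With the index in hand, open the file in a text editor, inspect that statement, and remove or repair it.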

MMB

Go to Windows > Settings/Preferences > Preferences > Interface, and under Mouse Scroll Wheel uncheck Enable.

SOME EXPORTING ISSUES that were posted on DLF

error message – Unsupported transform node with multiple shape nodes

Transform nodes are dagNodes that are used to group and transform other dagNodes. All dagNodes that are not transform nodes in Maya must exist as a child of some transform node.

– The scene has a transform with multiple shape nodes under it in the DAG. Put another transform under it with another shape in it, and set that transform to 0 0 0.

– Verify in the Outliner by choosing Show Shapes and checking the hierarchy. Add an identity transform to one of the shapes if you see multiple.

– Make sure Show Shapes is enabled (RMB and first tickbox in the Outliner). Expand all your geometry to find which DAG object has more than one shape node. You can then either delete one of the shapes, if either happens to be unnecessary, or transfer one to an empty transform node, if you need both. To transfer it, first deselect everything and press Ctrl+G to create a null. Then select the shape node you wish to move, followed by the null, and enter parent -s -r; in the script line at the bottom or in the Script Editor. Hit Enter/Run, and the shape node will be re-parented to the new transform node. This technique is also useful for creating multi-curve custom controls. If your deformer on the geometry gets broken, an alternative is to use Duplicate Special, with Duplicate Graph enabled to give separate copies of the deformer(s), or with Input Connections enabled so the copies still use the same deformer(s), and then delete one shape node from the original and the other from the duplicate. And if you have trouble getting the animation out with FBX, just use Alembic.
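For scenes with many offenders, the re-parenting steps can be written out as MEL lines rather than done by hand. The sketch below is a plain-Python illustration that does not call Maya: it takes a dictionary of transform names to shape names (data you would collect yourself, for example from the Outliner) and prints `group -em` and `parent -s -r` lines to paste into the Script Editor. The function names, the `_split` suffix and the example node names are all hypothetical. Note that each new null is created at the origin, as with Ctrl+G on an empty selection.

```python
def transforms_with_multiple_shapes(shapes_by_transform):
    """Return the transforms that own more than one shape node, sorted by name."""
    return sorted(t for t, shapes in shapes_by_transform.items() if len(shapes) > 1)


def reparent_commands(shapes_by_transform, suffix="_split"):
    """Build MEL lines that move every extra shape to its own new empty transform.

    The first shape under each transform stays where it is.
    Node names and the suffix are illustrative assumptions.
    """
    lines = []
    for transform in transforms_with_multiple_shapes(shapes_by_transform):
        extra_shapes = shapes_by_transform[transform][1:]
        for index, shape in enumerate(extra_shapes, start=1):
            new_transform = f"{transform}{suffix}{index}"
            lines.append(f'group -em -n "{new_transform}";')  # empty null
            lines.append(f'parent -s -r "{shape}" "{new_transform}";')
    return lines


if __name__ == "__main__":
    scene = {"ctrl": ["ctrlShape1", "ctrlShape2"], "body": ["bodyShape"]}
    print("\n".join(reparent_commands(scene)))
```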

– After exporting in FBX, Maya shows a dialog saying that the plug-in will export the texture as a Lambert. After opening a new scene and importing the exported file, the animation is there but the texture and lighting are gone. The batch bake didn’t work.

– FBX doesn’t always work out; you often end up with nulls or non-functional versions of the nodes you try to export between different software packages. Textures are assigned to shaders’ attributes and need to be re-assigned in the other software; however, the shading groups will still exist. You can easily re-create the shaders, and there should at least be nulls with the same names where your lights were. When importing into another Maya scene, export/import .mb or .ma files instead.

– After exporting the animation and re-importing it into another program, there are odd spikes coming out of the hands and crotch.

– Consider baking the frames in Maya first, or try exporting as FBX 2012.

– Select the vertex, then check its joint allocation in the Component Editor (Maya can associate it with another joint outside weight-map proximity). Locate and make (0/1) changes. Alternatively, Export Skin Weight Maps and manually paint the affected joint in Photoshop or similar, then import back into Maya using the index file (text) that will have been generated with the initial export.

– Use the weight hammer tool to bash those pesky verts back into place, then try exporting again with FBX.

– It could be that you have a broken rig, meaning a modular rig where limbs are in groups rather than parented to joints. Remember that most applications, such as Unity, don’t like broken rigging and prefer an entire skeletal structure with a root bone.

– Possibly a skin weighting issue. Often the rogue verts show up after export to the engine when they look fine in Maya. First of all, the skin weighting mode should be interactive and not post (this is the default in Maya, but just check). This normalises the values to a maximum of 1 for each vert. Check the influence values on the rogue verts: select them and go to Windows > General Editors > Component Editor. In the Smooth Skins tab you should see a table of all the influences the vert has on corresponding joints. Check that the influence looks as it should, i.e. as this is the hand, the influence should come from the hand joint or a finger joint. You can also change the values in the Component Editor.
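The weight check described in the last item can be modelled in a few lines. This is a plain-Python sketch, not a Maya API call: a vertex's weights are held in a dictionary of joint name to weight (values you would read off the Smooth Skins table), and the function names, tolerance and joint names are assumptions made for the example.

```python
def normalize_weights(weights):
    """Scale a vertex's {joint: weight} map so the weights sum to 1."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("vertex has no positive influence")
    return {joint: w / total for joint, w in weights.items()}


def rogue_influences(weights, expected_joints, tolerance=1e-6):
    """Return joints that influence the vertex but are not in expected_joints."""
    return sorted(
        joint
        for joint, w in weights.items()
        if w > tolerance and joint not in expected_joints
    )


if __name__ == "__main__":
    vertex = {"hand": 0.6, "finger": 0.3, "hip": 0.3}  # hypothetical values
    print(normalize_weights(vertex))
    print(rogue_influences(vertex, {"hand", "finger"}))
```

A hand vertex that reports an influence from an unrelated joint, such as the hip in this made-up data, is the kind of rogue weight that produces spikes after export.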

Maya 2017 – Time Editor Overview

Interactive Morphing Table Experiments by Michael Marcondes

Maya 2015 – XGen Instancing Overview

GOLAEM CROWD PlugIn

Golaem makes it easy and affordable to populate worlds with smart & directable digital characters, from a few to thousands, in a short amount of time and with limited budget. Built with the artist in mind, Golaem automates repetitive and cumbersome tasks to let them focus on their art. The Golaem workflow is built so that although it relies on AI, artists always get their last word to say and can keep total control of the results.

Golaem Crowd 4 Workflow Overview

BIFROST

- Create an Explosion Using The Aero Solver in Maya Bifrost

- AREA Welcome to Valhalla, your source for Bifrost-related tutorials, techniques, tricks, hacks and ideas! by Adrian Graham

Introducing Bifrost for Maya

I’m happy to announce that Bifrost – a new visual programming environment in Maya – is here! Using Bifrost, you can create your own custom effects, publish, and share them with other artists to use across different shots, scenes, and even shows. We’re also rolling out a ton of pre-built graphs to help you get started creating effects like dust storms, fire, snow, and sand super fast. This project has been a labor of love for my team, but it wouldn’t be what it is today without the dedicated support of our band of beta users. I’d like to give a huge shout out and thank you to all of you – Without your help, this would have been a very different release!

CREATING REALISTIC WATER, TIPS & TRICKS WITH ALEKSANDAR NADJ

Hi there, my name is Aleksandar Nadj, I’m a CG generalist from Novi Sad, Serbia. Currently, I work in a gaming company called Eipix Entertainment as lead 3D artist. Software and tools which I use in my workflow are Maya, Zbrush, Realflow, Quixel Suite, Substance painter, Photoshop, After Effects and Nuke, and I try to learn and implement Houdini. In this article, I will show you the workflows and techniques that I used in my latest project Lonely Beach. This is my first Bifrost Project:

lonely beach

Painting Bifrost colorsets

XGEN

A BRIEF GUIDE ON GROOMING HAIR WITH XGEN IN MAYA

We will be using XGen’s Interactive Groomable Splines (IGS) to create our hair. We start with selecting a patch of faces that will make up our scalp to generate the hair description from.

NVIDIA – Mental Ray

MOTION BUILDER

MOTION BUILDER is 3D character animation software. Capture, edit, and play back complex character animation in a highly responsive, interactive environment. Work with a display that’s optimized for both animators and directors.

MUDBOX

Online Resources (Digital Sculpting with Mudbox)

MUDBOX LIVE Wayne Robson

KERMACO Ara Kermanikian

SHOTGUN

Maya and Shotgun inside “Ghost in the Shell”

“We handled around 100 shots in Rupert Sanders’ Ghost in the Shell. Normally on a film project, it’s either lots of subtle, invisible effects or all big explosion effects but with this one, there was a good range of scenes that we worked on that involved a nice variety of work.”

–Kevin Baillie, Atomic Fiction

SMOKE

Post Magazine – Review: Autodesk Smoke 2015

By: Barry Goch

Smoke 2013 for the Mac – Getting Started online tutorials

Smoke Learning Channel

Autodesk Smoke 2013 | Key Insights by Rob O’Neill

Digistor Autodesk Smoke Solutions Webinar

Autodesk Smoke and Flame artist Joel Osis does a breakdown on the effects for a commercial he did for Special K

Smoke Essentials with Alexis Van Hurkman

Amsterdam SuperMeet: Autodesk Smoke Goes to the Movies

Premiumbeat.com Presents Editing in Autodesk Smoke: Special Guest Brian Mulligan, WHTR TV

SOFTIMAGE

We regret to inform you that the upcoming 2015 release will be the last one for Softimage® software. This final version is expected to ship on or around April 14, 2014. Autodesk will continue to offer product support until April 30, 2016. We will also provide Softimage support services (including Hot Fixes and Service Packs) to all Softimage customers with Autodesk Subscription, at no cost, until April 30, 2016.

STINGRAY

Autodesk Launches Stingray Game Engine at GDC Europe 2015

It was becoming clear that Autodesk was entering the game market when they purchased BitSquid back in June of last year. In addition to making the Magicka series of games, they also created the BitSquid game engine. In March of 2015 Autodesk announced that Bitsquid was now the Stingray Game Engine.

Autodesk Levels Up, Will Release Stingray Game Engine

Autodesk announces new Stingray gaming engine with simplified mobile development testing. The program, called Stingray, is based on the Bitsquid engine that it acquired last year, but is entirely revamped with a new, more intuitive interface.

Autodesk launches Stingray game engine

Up against strong competition, First announced at GDC 2015 in March, Stingray is based on the in-house engine of Swedish developer Bitsquid, which Autodesk bought last year. At the time, it seemed an intriguing move. Although used on a number of Windows and PlayStation titles, Bitsquid was still a relatively little-known engine. And with Epic Games having just removed the rental fee for Unreal Engine 4, and Unity Technologies announcing a new, more fully featured free edition of Unity 5, Autodesk faced a daunting prospect. It had to launch a new commercial engine against two better-established competitors, both of them free to use. So what has Stingray got that its rivals haven’t?

Introducing the Autodesk Stingray 3D game engine

3DS MAX

3ds Max and Arnold Bring Interactivity and More Speed to 3D Workflows

Chaos Corona – Dive into the Corona Tile Map For 3ds Max

RenderRam – How to Conform Bending Plant Leaves in 3ds Max 2024.2

D95 Design – Frame Maker script for 3DS Max

Ask animators about the difficulties of simulating cloth in 3D and their tales of woe will fill up a whole book. For a while, 3D cloth simulators raised some hope in providing a fast solution to the problem, but most of them failed to deliver the real goods. Creating simple tasks such as simulation of a cloth falling on a rigid object was simple, but if you were to animate a character’s clothes, you’d hit a real dead-end. I’m happy to report that Digimation’s Stitch Lite, a plug-in and a cloth simulator for 3ds max is one of the few solutions that really delivers.

3ds Max 2021 Adds PBR and Texture Workflows, Python 3 Integration

Autodesk 3ds Max 2021 has a fully scriptable texture baking workflow and is simpler to install. Viewport configuration and rendering are improved, in particular to support PBR rendering for real time applications, and support for Python 3 is integrated.

Texture baking in 3ds Max 2021 has been updated to support physically-based rendering (PBR), map override and OSL (Open Shading Language) workflows. Using a new baking to texture process, artists can represent complex surface effects as simple 2D bitmaps and then assign them to objects.

Up Close & Personal with 3ds Max

How to create a realistic kitchen interior in 3ds Max: Part 1

3ds Max Tips & Tricks with Martin Coven

Getting Started: Touring the UI

3ds Max Projects Raising the Bar for 3D Modeling and Visualisation

AVID

Let’s Edit with Media Composer – Lesson 13 – Bin View Modes

How to answer when someone asks you to move a project from Avid to Premiere Pro (or vice versa)

Avid Media Composer 2020

Avid Media Composer has been at the forefront of nonlinear, digital video editing for three decades. While most editors and audio mixers know Avid for Media Composer and Pro Tools, the company has grown considerably in that time. Whether by acquisition or internal development, Avid Technology encompasses such products as storage, live and post mixing consoles, newsroom software, broadcast graphics, asset management, and much more.

MediaCentral

Whether you’re a team of two, an organization of thousands, or anything in between, MediaCentral accelerates media production. This scalable, open platform offers a powerful suite of creative tools, media asset management, and the ability to tailor and expand its capabilities, enabling teams to collaborate from anywhere, using any device. Streamline your operation. Create better content faster. And deliver to more outlets and platforms to maximize your brand’s value and media.

ASSET SHARING WITH PREMIERE PRO

No editor should be an island—no matter what video editing software they use. Break down content silos, enable collaboration, and deliver content faster with integrated asset management and shared storage for Premiere Pro users.

HOW HARD IS IT TO SETUP AND CONFIGURE AVID | EDIT ON DEMAND?

Short answer is, not very. Apart from choosing a region for your deployment, the deployment process and underlying cloud infrastructure layer is abstracted from the user. This makes it incredibly simple as no knowledge or experience with cloud computing services is required, nor any account with a cloud computing service.

Interview: Jason Cowan and AVID Media Composer with “Edit on Demand”

With Edit on Demand being a remote-based work environment, what are the benefits, if any, over traditional “all in the same office” sort of workspaces? And in Australia, despite Government promises, we still have a disparate internet system, with some on fibre to the home, the majority on fibre to the node, some on satellite internet connectivity and yet others still on ADSL. Will all of these happily co-exist with Edit on Demand?

Professionally correct color and master projects faster with the Media Composer | Symphony Option. This add-on is included with Media Composer | Ultimate subscriptions and available as an option for Media Composer subscriptions and perpetual licenses, expanding your editorial palette with a host of creative tools.

Stylize shots, set a mood, or correct color with advanced and new shape-based color correction tools. And master projects in the highest quality possible, with delivery to multiple formats from a single master source.

POWERING GREATER CREATORS FROM ANYWHERE

Yesterday, remote work was a nice-to-have; today, it’s essential. Avid is providing these resources to help media production teams affected by the coronavirus transition to remote workflows.

NAB-AT-HOME 2020: Avid Media Composer 2020.4

The new Avid Media Composer 2020.4 is now shipping.

As we continue our social distancing coverage of things that would have been introduced at NAB 2020, I sat down with Michael Krulik from Avid Technology, and he walked us through this deep dive of the brand new Avid Media Composer 2020.4. We got a great look at the new update in a recent Let’s Edit With Media Composer – What’s New In Media Composer 2020.4 here on PVC.

AVID PREPARE YOUR EDIT SYSTEM FOR CATALINA

If you’re on Mojave, you’ve no doubt been seeing all of the warnings about 32-bit apps losing their support once “the next macOS” gets released. Well, now you’ve seen the news. This autumn, probably in the first couple of weeks in October, macOS Catalina will be released. I know, I know… Most of you will be smart and not dive head-first into a “dot zero” release of anything and good for you but sure enough, macOS 10.15 will indeed end all support for 32-bit apps. Now, what does that mean in a practical sense? It’s not as bad as you think really. If you, the Mojave users of the world have come this far, then there are only a few things to consider, but you NEED to consider them ASAP because although it won’t affect your NLE apps much, it may affect the ancillary apps you rely on.

AVID MEDIA COMPOSER – AUTO SAVE, THE ATTIC AND YOU By Shane Goddard

There’s nothing worse than working on a sequence and hitting a show stopping error and needing to restore a project to recover a sequence from your last nightly backup (your projects ARE backed up nightly, aren’t they?)

Let’s Edit with Media Composer – Creating Night from Day with BCC and Sapphire 10 By Kevin P. McAuliffe (September 19, 2017)

As pretty much everyone knows, I’m a big user of both BCC and Sapphire. I just worked on a project this morning that utilized effects from both packages, and the same goes for just about every project that I work on, and I thought that we would have some fun in this lesson, and take a look at how to create a shot that I see tons of tutorials about on the internet, and that is how to create a night shot from a day shot. It’s actually a pretty simple technique if you have the right tools, and BCC and Sapphire are definitely the right tools for this job. Many people will watch this tutorial and think “Hey, I can do this without 3rd party effects!”, but stick around until the end to see why you’re going to want to use these awesome tools to create this cool effect! Enjoy!

IT Editor and Mixer Use Avid Creative Tools to Resurrect a Classic Horror Film

The post-production team behind IT needed seamless film editing and sound mixing workflows to deliver the right tone and feel to an updated horror classic on a time-constrained schedule.

Avid Ingest and Delivery Workflows