‘It is so important that we see things that are about us, that talk to us, that make us think about our lives’

Gillian Armstrong, The Age, 18 September 2017

Cloud editing costs demystified

The Biggest Bloopers and Editing Errors That Have Been Caught in Major Films Throughout the Years

When it comes to film production, the technology used on set has evolved so much that you would think it would be impossible to make a mistake. However, despite state-of-the-art cameras and all the other equipment, some movie mistakes still end up on the big screen. We all love watching bloopers and funny videos of actors who forget their lines, but we don’t expect important details to be missed on set. We’re not sure how some of these mistakes went unseen, but they did.

Managing the Post Pipeline Webinar

Want to learn more about the Post Pipeline Solution? Join a live session with Matt Schneider and Ray Thompson on 13 May and ask your questions.

3 ways post-production professionals can build a personal brand

Today I want to talk to you about something that I hazard to guess isn’t spoken of a lot on this site: building your personal brand. I know what you’re probably thinking: “Branding? What the hell does building a personal brand have to do with cutting? I’m an editor, not a Harvard Business Graduate! As long as I’m a good editor, that’s all that should count.” And in a perfect world, I’d agree with you. But, last time I checked, the world is far from perfect (you need only take a brief look at the day’s news to figure that out). Here’s the thing: if the only thing you have going for yourself as a production professional is your NLE and storytelling skills, you could be missing out on opportunities that could get you more business and higher fees. Building a professional brand could be the best move you make in 2020 for your career. I’d like to share with you three ways in which you can do it.

Career Advice: Working as an Editor in the Animation Industry in Australia

5 Fundamental Editing Techniques

A POST PRODUCTION BLUE PRINT: By Joel Goodman

Read this article about Internet video editing and then TikTok

The cloud paradox: is cloud-based post-production a future out of reach?

The potential benefits of a post-production pipeline in the cloud are irrefutable. As we touched on in our last article in this series, cloud technology has the ability to completely remove that tricky planning stage – where studios are forced into ‘finger in the air’ judgements on big decisions like workforce and compute capacity – while enabling global collaboration in real time.

Edit Royale 2020 – In an Edit Suite Far Far Away!

Carly Anne Kenneally, Matt Hodges, Bettina Otterbeck, Angus Roche and Keaton Stewart explored the material and supported each other while tapping into their creative best. Working on systems of their choice across PC, Mac, Adobe Premiere Pro, DaVinci Resolve and Avid Media Composer, it was fascinating to see the different ways the competitors approached their work.

Ideas for editors and post-production people while in isolation or quarantine

It may seem like a no-brainer to learn something new while you’re isolated in downtime. NAB has been canceled. Your local restaurants and businesses may or may not be shuttered. Your downtime may be a self-imposed (or government-imposed 🤨) quarantine, but it might also be because your jobs were canceled or your place of employment has temporarily shut down. What seems like an eternity of free downtime can easily get taken up by endless binge-watching of any number of multi-season television shows, but I’m going to suggest a few things that might be more productive. Some of these are my own ideas, some I’ve overheard and some come to us via Twitter.

Blackbird’s Moment Arises as Video Industry Embraces Cloud-Based Collaborative Editing

Zixi partners with Blackbird for global live video editing

8 April, 2020 – Waltham, US and London — Zixi, the industry leader for enabling dependable, live broadcast-quality video over any-IP, and award winning architect of the Software-Defined Video Platform (SDVP), today announced a partnership and integration with Blackbird, the world’s fastest, most powerful professional cloud video editing and publishing platform that provides rapid access to video content for the easy creation of clips and highlights to multiple devices and platforms.

Moving from AVID to Premiere Pro: How’s 45 minutes sound?

One of the most frustrating things for editors switching software is having to sit through all the newbie, “editing fundamentals” instruction that comes with most online courses. Well, if you happen to be an AVID editor wanting to move over to Premiere Pro, we have a better option. moviola.com has just released a new, free transition guide to get you up and editing in Premiere Pro in around 45 minutes of instruction, taught by provideocoalition.com’s Scott Simmons. Just the stuff that matters to you; no patronizing lectures on what a lower third is.

Talking remote editing with the expert Michael Kammes

As we watch the spread of the coronavirus continue, so does the talk about remote editing and editing at home, so I wanted to talk remote editing with an expert on the subject, Michael Kammes. I’ve had calls about this, I’ve been messaged about it and I’ve seen tons of discussion online about it. I know a bit about some ways to edit remotely but not enough to answer all of the questions I had been receiving. I thought to myself: who knows more about this topic than anyone else on the planet? That was an easy answer in my friend Michael, of 5 Things fame.

When it comes to editing video, no-one wants a bandwidth hog

Since #WorkingFromHome has become the norm, the internet’s capacity to accommodate a sudden and sustained shift in demand is being stress tested. To be precise, it is residential fixed-lines which are under pressure as never before as we stream more daytime videos of live fitness training and children’s activities to the TV.

AUSTRALIAN SCREEN EDITORS | COVID-19 ADVICE TO MEMBERS

We will keep updating this page and documents

PERCEPTION ON THE 18 MONTHS THEY SPENT WORKING ON BLACK PANTHER’S FUTURE TECH

But Black Panther presented new challenges because the African nation of Wakanda—Black Panther’s homeworld—is far more technologically advanced than any society in the Marvel Cinematic Universe. In this case, Perception collaborated with Marvel Studios for 20 months and used Cinema 4D, X-Particles, Houdini and Redshift to design, develop, animate and render the visionary technology seen in the film and end title sequence.

POWERING GREATER CREATORS FROM ANYWHERE AVID

Yesterday, remote work was a nice-to-have; today, it’s essential. Avid is providing these resources to help media production teams affected by the coronavirus transition to remote workflows.

Turning raw footage into engaging content in post-production is no easy feat. Learn basic editing philosophy and practical tips for both the big and small screen.

How Adobe and Isilon OneFS Deliver Rock Solid Collaboration for Post-Production

Good collaboration leads to better work! Giving artists the tools to collaborate is one of the ways of making the post-production workflow more manageable and predictable. This has been the Isilon story from the start and is highlighted in Dell’s recent work with Adobe and the new Productions workflow in Adobe® Premiere® Pro.

Adobe Creative Cloud deployment on Virtual Desktop Infrastructures (VDI)

Adobe has collaborated with Microsoft Azure, Amazon Web Services, Google Cloud, Teradici, and NVIDIA to provide best practices for Adobe Creative Cloud for enterprise customers who deploy on Virtual Desktop Infrastructure (“VDI”) on remote cloud environments.

Adobe Photoshop, Adobe Premiere Pro, and Adobe After Effects, including Adobe Media Encoder and After Effects Render Engine are constantly being tested by Adobe in cooperation with each cloud vendor, enabling technology partners, third-party plug-in developers, and hardware peripheral providers. These Best Practices Guides will be regularly updated as VDI technology advances.

THE POWER OF INSTANT COLLABORATION

Remote collaboration made easy. Built for post-production teams that need to find footage fast, collaborate easily, and create stories quickly, MediaCentral | Editorial Management delivers powerful yet simple workflow and media management. Completely integrated with Avid NEXIS storage and Media Composer, Editorial Management enables teams to search for media phonetically, log clips, edit video, pull selects, create stringouts and review projects from anywhere, using just a web browser.

Studio in The Cloud, Media Consumption Rises, Cloud Editing Rises

What is Vimond IO?

Vimond IO is a cloud-based collaborative storytelling tool, specially made to meet the new demands of post video production. The tool is made for broadcasters, sports & news organizations and media outlets of all stripes that are challenged to meet the demands of an increasingly competitive and evolving media world. With Vimond IO you can remotely produce high quality, frame-accurate video stories at any time, from any location, together.

EDITING

Lies Producers and Editors Tell each other

- I’ll be there at _______AM (or PM)

- I’ll be there no later than…….

- Trimming that shot by one frame really made the difference

- It really looks great, but what if we ………

- I’m positive I got this shot on THIS tape

Ripple Edit vs. Insert Edit FAQ

Editing deliverables is a time-consuming process, but the right tools can reduce the time required. The new Ripple Edit tool from CineXtools lets you insert new content that’s longer or shorter than your original piece, add/remove black anywhere in your file, and just like our original insert edit feature, does not require re-encoding your original file. That means the QC you’ve previously completed is still good.

Mobile Apps for: Video Compression App – video editing app, PowerDirector – video editing app, FilmoraGo – video editing app, iMovie, Adobe Premiere Clip

Vary your video composition: 10 types of camera shots. A range of camera shots keeps the audience engaged throughout the storyline.

ART OF THE CUT

- Avengers – Infinity War editor, Jeffrey Ford, ACE

- editors of “The Handmaid’s Tale”

- with Oscar nominated editor, Lee Smith, ACE on DUNKIRK

- assistant editors and VFX editors of Kingsman: The Golden Circle

- WITH JABEZ OLSSEN ON EDITING “ROGUE ONE”

- WITH “KONG” EDITOR, RICK PEARSON, ACE

- with “IT” editor, Jason Ballantine, ASE: The unique rhythms and challenges of editing horror and suspense

- on MURDER ON THE ORIENT EXPRESS

- ART OF THE CUT with Ant-Man and the Wasp’s Craig Wood, ACE

- ART of THE CUT with KATE SANFORD, ACE EDITOR of “BOARDWALK EMPIRE” & “VINYL”

- with RED SPARROW’s Alan Edward Bell, ACE

- ART OF THE CUT with the editor of “War for the Planet of the Apes”

- Post Production

- with Oscar nominee, Jay Cassidy, ACE on editing “A Star is Born”

William Hoy, ACE got into the editor’s seat on feature films back in the mid-‘80s. One of his first major feature films was Dances With Wolves. Since then he’s edited a string of box office and critically acclaimed hit films: Star Trek VI: The Undiscovered Country, Patriot Games, Se7en, The Man in the Iron Mask, The Bone Collector, Dawn of the Dead, Fantastic Four, 300, Watchmen, and Dawn of the Planet of the Apes. Art of the Cut caught up with William recently to discuss his work on War for the Planet of the Apes.

ASE EDIT ROYALE 2022 REPLAY

ASE ELLIES 2022 NOMINEES

THE AUSTRALIAN SCREEN EDITORS GUILD IS PLEASED TO ANNOUNCE

THE FOLLOWING NOMINEES AND WINNERS FOR THE 2022 ELLIE AWARDS

ASE EDIT ROYALE 2019 REPLAY

Five emerging editors competed to show off their editing artistry and speed. Each edited a complete music video from unseen media in only two hours. The editor whose edit was voted the best edit of the night was Emily Clulow using Avid Media Composer on Windows. Congratulations Emily.

Editing iPhone HDR Video in Final Cut Pro

Non-editors are editing in HDR, and you could too

ASE EDIT ROYALE 2023 REPLAY

Daily Life of an Editor — Interview with Alex Macdonald

Creativity and technical skills go hand-in-hand for computer graphics (CG) professionals, and the industry itself offers a range of specialisations. A talented studio team can consist of riggers, animators, compositors, generalists, and of course, editors. An editor’s role is to manipulate videos, arranging footage shot-by-shot in a way that best entertains or informs an audience. They collaborate with producers and directors to create that final, stunning masterpiece. Editors are often the backbone of production.

Jill Bilcock: The Art of Film Editing

A unique look at the film artistry of renowned Australian film editor Jill Bilcock. Jill Bilcock is one of the world’s leading film editors, yet to most people she remains relatively unknown.

Jill Bilcock: Dancing The Invisible

The art and life of one of the world’s most acclaimed film editors. Jill Bilcock: Dancing the Invisible is an unprecedented feature documentary about one of the world’s leading film artists – Australian film editor Jill Bilcock.

48 Professional Editors Explain How to Edit Videos Faster

Ever get that feeling that your video edits take FOREVER. That you must be the slowest editor alive…that every deadline you’re set feels like doomsday? While there’s no getting away from the fact that video editing is generally quite a brutal, time intensive process (just ask anyone that does it for a living – we salute you!), there ARE things that you can do to become more efficient and win back valuable time on each project. How do I know? Well it’s not because I’m some sort of super, duper video editor (I can’t edit for s***) or because I know of some magic video editing software. It’s because we recently spoke to almost 50 professional editors and asked them to share their secrets on “how to edit video faster?” This article is the result of those insightful conversations.

For a TV Editor, 500 New Shows a Year Require a Fresh Script

What tech tools are most important for your work as a television editor? Most important is the one in my title: a television. Though the pure tonnage of content dictates that I almost always have a new show going — on my laptop or a second monitor at work, occasionally my iPad or iPhone — I still vastly prefer watching TV on TVs. Both of the ones I have at home are attached to Apple TV consoles. With its fourth generation, the system and, more important, the touch pad remote finally advanced to the point where I was willing to commit.

A day in the life of an online editor on a live show is a day of balancing.

Do I take the time to do this “the right way,” or is “good enough” going to have to suffice in order to make air? It’s a day of calculating; a typical two- to four-minute package takes a certain amount of time to color, polish and prep. It’s a day of assessing: What’s the likelihood of things happening within the timeframes needed, and what do I do when those timeframes are compromised? I must have backup plans in place.

Behind the Scenes of Netflix’s Trollhunters Written by Editor Graham Fisher

I come from a background in documentary editing, which, on the surface, is the polar opposite of animation editing. In documentary you will be given two hundred hours of footage and told to go make something. There’s no script and the story is found in the edit. Discovering the power of editorial in that environment, I came to animation with the sense that nothing is set in stone and that perhaps the director and I are Dr. Frankenstein, and all the various components of the episode from storyboards, to dialog, to sound and music are the various organs. It’s our job to knit them together in a way where our monster can come to life.

Award-Winning Sherlock Editor Unlocks the Secrets of Editing

For Miles, it all began with a love of cinema and television. He declares legendary director David Lean’s inspiring movies as a big influence. “I can’t tell you how many times I’ve watched The Bridge on the River Kwai.”

What makes a hot-shot editor or great edit?

The first Sydney Edit Royale event, hosted by Digistor, is available on-demand here.

Five emerging editors competed to show off their editing artistry and speed. Each edited a complete music video from unseen media in only two hours. The editor whose edit was voted the best edit of the night was Marlena Ianni using Avid Media Composer on PC. Congratulations Marlena!

Fitness & Editing Hollywood Blockbusters with Alan Bell, ACE

How Editor Paul Machliss Cut Baby Driver in Real Time on Location

My brother and I were fortunate enough to stumble upon Machliss’s presentation at NAB 2017 at the Avid booth. He discussed Baby Driver being the most difficult edit he’s ever had to manage. He also talked about his previous experiences working with director Edgar Wright on Scott Pilgrim vs. the World, The World’s End, and Spaced. You may also recognize his comedic editing style from other television series, like The IT Crowd.

Making the Cut: Chris McCaleb—Cracking the Code

POST MAGAZINE: Editing ARRIVAL

Joe Walker has established himself as the go-to editor for directors Denis Villeneuve and Steve McQueen. His relationship with McQueen includes cutting 12 Years A Slave (2013) and his short film Ashes (2014), while Villeneuve’s films include Sicario (2015) and more recently, Arrival. He’s currently working with Villeneuve on next year’s release, Blade Runner 2049, and recently took a short break to speak with Post about his career, his work with the director and how he achieved the final cut for Arrival, which has taken in more than $80 million since it debuted in mid November.

The ASE is a national organisation formed in 1996. It has members across Australia as well as overseas. The Guild is an association of professional screen editors and assistants working in a wide range of disciplines including: Feature Films, Documentary, Television, Commercials, Corporate Video, Short Films, Music Video, Multimedia, Online Content, Education and Training

Nashville Filmmakers Guild NLE Debate, 3 local filmmakers taking the stage and each representing their NLE of choice: Adobe Premiere Pro CC, Apple Final Cut Pro X and Avid Media Composer.

Long Form and Episodic Workflow Guide

How to Organize a Feature Film Edit Like a Pro

Transitions: Voices on the Craft of Digital Editing

Useful Tools for Editors: NAB 2022

Useful Tools for Editors By Scott Simmons 11.21.15

A Student Interview with Alan Edward Bell A.C.E. link

Ryan Axe: The Role of the Assistant Editor

Behind the Scenes with Gravity link

Digistor Australia

Editing – the chemistry behind the cut of Breaking Bad

Film and Video Post-Production technology explained – link to videos

Full workflow for editing the #1 Feature Film, “War Room”

ROLAND GALOIS’ Top 3 Tips for Emerging Editors

SUBTITLES:

HOW TO BURN SUBTITLES INTO VIDEO: If you get your captions as a standard captions file (.srt, .ass, etc.), you can do it with ffmpeg or one of the GUI front ends for ffmpeg. You can combine the compression to whatever output format you’re creating with the subtitle-burning stage. For example, to create a good-quality H.264 MP4: ffmpeg -i input.mov -c:v libx264 -preset slow -profile:v main -crf 20 -pix_fmt yuv420p -vf "subtitles=yoursubtitles.srt" output.mp4

SUBRIP: Leave it as a separate SRT file; most TVs will now play ‘movie’ with a ‘movie.srt’ file in the same folder. Or, as with the vast number of MKV files, use mkvtoolnix to merge the SRT file into the MKV file. You still retain separate video, audio and subtitle tracks, and nothing is burnt in.
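As a rough illustration of the two routes above, the following Python sketch only assembles the command lines; it does not run anything. The function names and file names are placeholders invented for this example. The ffmpeg options are the ones quoted above, and the mkvmerge call assumes the usual "output first, then inputs" form from MKVToolNix. Subtitle file names containing characters such as ':' or ',' need extra escaping inside the filter string, which this sketch does not attempt.

```python
import shlex


def burn_in_command(video_in, subtitle_file, video_out, crf=20):
    """Build the ffmpeg argument list that burns subtitles into the picture."""
    return [
        "ffmpeg",
        "-i", video_in,
        "-c:v", "libx264",
        "-preset", "slow",
        "-profile:v", "main",
        "-crf", str(crf),
        "-pix_fmt", "yuv420p",
        "-vf", f"subtitles={subtitle_file}",
        video_out,
    ]


def soft_subtitle_command(video_in, subtitle_file, mkv_out):
    """Build an mkvmerge argument list that adds the SRT as a separate track."""
    return ["mkvmerge", "-o", mkv_out, video_in, subtitle_file]


burn_cmd = burn_in_command("input.mov", "yoursubtitles.srt", "output.mp4")
mux_cmd = soft_subtitle_command("movie.mkv", "movie.srt", "movie_with_subs.mkv")

# Print shell-safe versions of both commands for copy and paste.
print(shlex.join(burn_cmd))
print(shlex.join(mux_cmd))

# To actually run one of them (requires the tool on the PATH):
#   import subprocess
#   subprocess.run(burn_cmd, check=True)
```

Building the command as a list rather than one long string avoids shell-quoting problems when file names contain spaces.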

SUBTITLE EDIT: A free, open-source editor for video subtitles. It has OCR for corrections and can edit or resync any subtitles. Were the captions exported in STL or SCC format? If so, read the file in and you can edit it like a text editor, then save it out in a number of other formats.

CHOCOLATEY Software Management Automation: A package manager for Windows, like apt-get on Linux or Homebrew on Mac. A script will install it for you, and from then on you can add tools like ffmpeg by just typing choco install ffmpeg. Unfortunately ffmpeg doesn’t seem to like .stl or .scc subtitles, but it’s pretty easy to translate STL to SRT using a word processor and find-and-replace (or sed / awk on the command line).
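The find-and-replace idea can also be scripted. The sketch below is only a starting point and rests on several assumptions: that the .stl file is the plain-text Spruce / DVD Studio Pro style, with one cue per line in the form "HH:MM:SS:FF , HH:MM:SS:FF , text" and "|" marking a line break; that the frame rate is 25 fps; and that other lines (comments, "$" directives) can be ignored. Binary EBU STL files are a different format and would not work this way. The function names are made up for the example.

```python
import re

# One cue per line: start timecode, end timecode, text, separated by commas.
STL_LINE = re.compile(
    r"^\s*(\d{2}):(\d{2}):(\d{2}):(\d{2})\s*,\s*"
    r"(\d{2}):(\d{2}):(\d{2}):(\d{2})\s*,\s*(.*)$"
)


def _srt_time(hours, minutes, seconds, frames, fps):
    """Turn HH, MM, SS, FF strings into an SRT time (HH:MM:SS,mmm)."""
    millis = round(int(frames) * 1000 / fps)
    return f"{hours}:{minutes}:{seconds},{millis:03d}"


def stl_to_srt(stl_text, fps=25):
    """Convert text-style STL cues to SRT; lines that are not cues are skipped."""
    cues = []
    for line in stl_text.splitlines():
        match = STL_LINE.match(line)
        if not match:
            continue  # blank lines, comments and $ directives
        *timecodes, text = match.groups()
        start = _srt_time(*timecodes[:4], fps)
        end = _srt_time(*timecodes[4:], fps)
        body = "\n".join(part.strip() for part in text.split("|"))
        cues.append(f"{len(cues) + 1}\n{start} --> {end}\n{body}\n")
    return "\n".join(cues)


sample = """$FontName = Arial
00:00:01:00 , 00:00:03:12 , Hello there
00:00:04:00 , 00:00:06:00 , Two lines | of text
"""
srt = stl_to_srt(sample)
print(srt)
```

The only real work is converting the frame count at the end of each timecode into milliseconds, which is why the frame rate has to be known or assumed.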

CODEC:

You need to find out what the actual Codec is. You can do this by finding an application that can query the MXF.

For example: http://www.opencubetech.com/page20/XFReader

Or, if you know the camera the files came from, you can determine the format that way. If the files are from a camera that has a plugin for Premiere, you should be able to download the plugin from the camera vendor’s website.
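One other application that can query an MXF file is ffprobe, which is installed alongside ffmpeg. This is a swapped-in suggestion rather than the tool named above. The sketch below builds an ffprobe command that reports the codec of the first video stream and only runs it if ffprobe is found on the PATH; the function names and the file name clip.mxf are placeholders.

```python
import shutil
import subprocess


def ffprobe_codec_command(path):
    """Build an ffprobe call that prints the codec of the first video stream."""
    return [
        "ffprobe",
        "-v", "error",               # suppress the banner and warnings
        "-select_streams", "v:0",    # first video stream only
        "-show_entries", "stream=codec_name,codec_long_name",
        "-of", "default=noprint_wrappers=1",
        path,
    ]


def video_codec(path):
    """Return ffprobe's report for the file, or None if ffprobe is not installed."""
    if shutil.which("ffprobe") is None:
        return None
    result = subprocess.run(
        ffprobe_codec_command(path),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()


# Example use, assuming a file called clip.mxf exists:
#   print(video_codec("clip.mxf"))
print(" ".join(ffprobe_codec_command("clip.mxf")))
```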

A satirical look at the process of video editing that delves into areas not usually covered in your typical instructional video – the cycles of despair, procrastination and accomplishment that many editors experience

It aims to promote, improve and protect the role of editor as an essential and significant contributor to all screen productions.

INSTANCING

When a shot or clip is instanced, the instance uses the same source clip, including the same animation curve. When the source clip, including its animation, is modified, the instance clip is modified too. Instance clips are generally used to reduce calculation time: instanced clips take less time to calculate than copied clips.
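The difference between an instance and a copy can be shown with a small, software-agnostic Python sketch. The class names SourceClip and TimelineClip are hypothetical and do not correspond to any particular animation package; the point is only that an instance keeps a reference to the shared source, while a copy gets its own data.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class SourceClip:
    name: str
    animation_curve: list = field(default_factory=list)


@dataclass
class TimelineClip:
    source: SourceClip   # reference to the clip data
    start_frame: int


walk = SourceClip("walk_cycle", [0.0, 0.5, 1.0])

original = TimelineClip(walk, start_frame=0)
instance = TimelineClip(walk, start_frame=48)                  # shares the source
duplicate = TimelineClip(copy.deepcopy(walk), start_frame=96)  # independent copy

# Edit the source animation once.
walk.animation_curve[1] = 0.8

print(instance.source.animation_curve)   # follows the edit to the source
print(duplicate.source.animation_curve)  # keeps the values it was copied with
```

Because the instance stores no animation data of its own, there is nothing extra to evaluate or keep in sync, which is where the saving in calculation time comes from.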

Animation Instancing – Instancing for SkinnedMeshRenderer

Adobe Animate: Adding and editing nested Symbol instances

HOW TO EDIT A VIDEO SEQUENCE

WHAT EXACTLY DO YOU PUT IN A SEQUENCE

Five shot sequence: Tutorial and example

Clip 2: Five-Shot Method

How To Edit A Video In SEVEN EASY Steps

Jill Bilcock: The Art of Film Editing. Focuses on the life of Jill Bilcock, one of the world’s leading film artists and an Academy Award-nominated film editor. Features commentary from Cate Blanchett, Baz Luhrmann and Rachel Griffiths.

“Editing is the essence of cinema.” Francis Ford Coppola

“Relating a person to the whole world: that is the meaning of cinema.” Andrei Tarkovsky

“The film editor is the gatekeeper to what the audience gets to see.” Peter Greenaway

- Have to establish style, the most important thing. Way above sensational. Wild, innovative, unusual and visually extraordinary making something emotional.

- Having hundreds of options, what emotionally touches you, edit for structure and rhythm, guiding the audience’s perspective through where they look, how are they going to look and how they will feel about that moment.

- How much backstory and character detail do you need? A bit more detail about the character to invest in the character.

- What to do in chronological narrative and how that will relate to audience.

- How to make something travel from A to B, is about rhythm, delivering a story, the best way to deliver that story depending on style and what the content is that has been supplied.

- Great sense of observation, extraordinary amount of patience, tune into how a director sees their vision and put something together that even surprises the director.

- A director that has something to tell that no one else has told or can tell something in a way that has not been told in that style or context before.

- Create a bit of magic in the workplace in order to create magic. Live the vision.

- Teams only get boring when one member stops growing, pushing yourself into uncertainty. Nourish creative life, bring together the strengths creating something new.

- Language that is all about story telling.

- Using style to keep the rhythm, creating devices to create the rhythm. Creating patterns out of chaos and uncertainty. Conducting the orchestra and also playing all the instruments.

- Sometimes overt and sometimes invisible, not only about the large explosions also about the internal explosions that people might have. Understand the character with the same ownership as the characters do.

- Parallel stories and making you believe them.

- Know when to get out, what you want to achieve by the end of this, and know when you have got it.

- Creating the tension between the visual and the rhythm is what makes exciting cinema. Rhythm, classical or chaotic. Cutting style that does not interfere. People want to communicate, a story that connects and emotionally touches the audience. It is the audience in the end and the editor is responsible for that.

Mie Moth-Poulsen, ‘Can you Cut It? An Exploration of the Effects of Editing in Cinematic Virtual Reality’

The vast development of Virtual Reality (VR) displays and 360 degree video cameras has sparked an interest in bringing cinematic experiences from the screen and into VR. However, Cinematic Virtual Reality is a new and relatively unexplored area within academic research. Historically, editing has provided filmmakers with a powerful tool for shaping stories and guiding the attention of audiences. However, will an immersed viewer, experiencing the story from inside a 360 degree fictional world, find cuts disorienting? This question motivated two iterative studies investigating the application of editing in Cinematic Virtual Reality and whether it causes disorientation for the viewer.

This paper details two studies exploring how cut frequency influences viewers’ sense of disorientation and their ability to follow the story, during exposure to fictional 360° films experienced using a head-mounted display. The results revealed no effects of increased cut frequency which leads us to conclude that editing need not pose a problem in relation to cinematic VR, as long as the participants’ attention is appropriately guided at the point of the cut.

TALK

Editing 360 deg VR to guide the viewer’s attention can be too disorienting for the viewer, even causing motion sickness, so some kind of adjustment is needed.

Does editing work in 360 deg films, will it work in VR?

Remember the Lumière Brothers’ Arrival of a Train, where people thought the train would come out of the screen and hit them, so they ran out of the cinema in fear.

Arrival of a Train at La Ciotat (The Lumière Brothers, 1895)

What is the average cut length for the right pacing and how can this be applied to cinematic VR? How to join shots together to produce the narrative? With new mediums it takes some time and effort for user experiences.

Traditional editing – the cuts need to be true to emotion, to the story, the rhythm, eye trace, the 2D plane of the screen and 3D space. Still need to create smoother transitions and avoid disorientation.

With cinematic virtual reality things start to shift around, the effects of scale change and need to consider staging, setting, lighting and editing in the VR world.

Shot two films. For 360, two 180 deg films are stitched together. Need to consider the camera placement, thinking about the camera as a person, and to be as natural as possible for testing so as not to make the participants more disoriented. Later introduced a third camera.

Lighting is another challenge because when the two images are stitched together the exposure is different affecting the user experience.

Editing is no longer done frame to frame; it is done roll to roll, which creates production challenges. Need to consider the shot length: does cutting on the action still work, and what about cutting from one world to another?

Users want to look around the world, so do not guide the attention too much. Keep a concept of presence, of being in another place, and have the cuts match the viewer’s attention.

Premiere Pro offers support for viewing VR video in the Monitor panels. It also detects if the clip or sequence has VR properties and automatically configures the VR viewer accordingly. You can publish VR video directly to the web from Premiere Pro to sites such as YouTube or Facebook.

How Star Wars was saved in the edit

3 Mistakes All Beginner Editors Make

State of the NLE: Which Editing Software is Best?

Final Cut Pro vs Adobe Premiere: Best Video Editor?

The Rule of Six

IN THE TRENCHES clip CUTTING ON MOTION

Testing the new Grease Pencil tools for upcoming Blender 2.73

Red Giant – go to 1 December 2014

Autodesk 2015 Releases

Nuke 8 Recording of Live Digital Event

LTO Archiving for Digital Media

The Great Gatsby – VFX before and after

EDIT SHARE

EDITSHARE 2020 WEBINARS ON-DEMAND

Since Digistor’s webinar “Flow Asset Management – Features & Case Studies”, EditShare has completed a number of other webinars which may interest you. Watch on-demand.

EditShare enables media professionals to collaborate on projects thanks to our ever-expanding range of innovative products and features, all designed to improve production and unleash creativity. Integration and collaboration are central to all products in our comprehensive range of media management solutions – from ingest to archive.

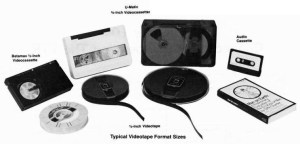

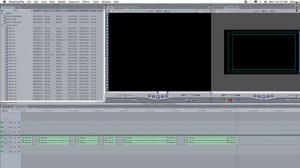

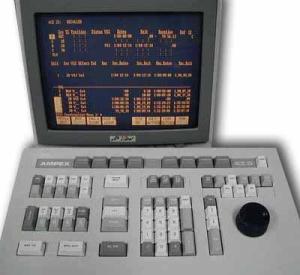

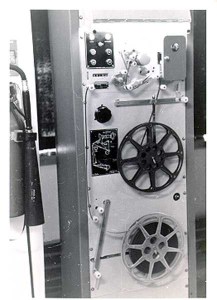

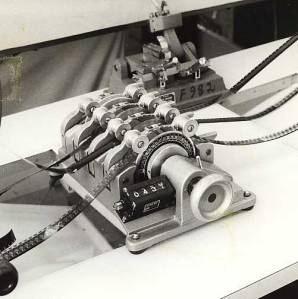

SOME EDITING SYSTEMS OVER THE YEARS

Media 100 Suite Now Available FREE, New macOS Sierra Support

STEENBECK > VIDEO TAPE > SHOTLISTER > HEAVYWORKS, LIGHTWORKS > FINAL CUT PRO > QUANTEL

LIGHTWORKS

IN THE SPOTLIGHT: Jill Bilcock

Shotlister – Cinema Papers No 70 November 1988 Page 6

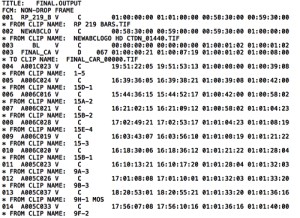

Template-based edit decision list management systems. These days, linear video editing systems have been superseded by non-linear editing (NLE) systems, which can output EDLs electronically to allow autoconform on an online editing system.

An edit decision list (EDL) is used in the post-production process of film editing and video editing. The list contains an ordered list of reel and timecode data representing where each video clip can be obtained in order to conform the final cut. EDLs are created by offline editing systems, or can be paper documents constructed by hand, such as shot logs.
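To make the "ordered list of reel and timecode data" concrete, here is a small Python sketch that reads simple cut events from a CMX3600-style text EDL. It assumes each event line has eight whitespace-separated fields (event number, reel, track, transition, source in, source out, record in, record out), non-drop-frame timecode and a frame rate of 25 fps. The sample EDL, the reel names and the class and function names are invented for the example. Dissolves and wipes carry extra fields and are skipped here.

```python
from dataclasses import dataclass


def timecode_to_frames(timecode, fps=25):
    """Convert non-drop-frame HH:MM:SS:FF timecode to a frame count."""
    hours, minutes, seconds, frames = (int(part) for part in timecode.split(":"))
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


@dataclass
class EdlEvent:
    number: int
    reel: str
    track: str
    transition: str
    source_in: str
    source_out: str
    record_in: str
    record_out: str


def parse_event(line):
    """Return an EdlEvent for a simple cut line, or None for any other line."""
    fields = line.split()
    if len(fields) != 8 or not fields[0].isdigit():
        return None  # title lines, comments, dissolves and so on
    return EdlEvent(int(fields[0]), *fields[1:])


sample = """TITLE: EXAMPLE
001  REEL01  V  C  01:00:10:00 01:00:15:00 00:00:00:00 00:00:05:00
002  REEL02  V  C  02:10:00:12 02:10:03:00 00:00:05:00 00:00:07:13
"""

events = [event for event in map(parse_event, sample.splitlines()) if event]
for event in events:
    length = timecode_to_frames(event.source_out) - timecode_to_frames(event.source_in)
    print(event.number, event.reel, event.source_in, "->", event.record_in,
          f"({length} frames)")
```

Each event says which reel to pull, which part of it to use (source in and out) and where that part lands in the finished programme (record in and out), which is all an online system needs to conform the cut.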

SMPTE TIME CODE – VIRTUALLY UNCHANGED AFTER ALMOST 50 YEARS

Double-system recording is a form of sound recording used in motion picture production whereby the sound for a scene is recorded on a machine that is separate from the camera or picture-recording apparatus.

A flatbed editor is a type of machine used to edit film for a motion picture. Picture and sound rolls load onto separate motorized disks, called “plates.”

Audio editing with double system audio

The dramatic ways that technology has changed editing

- Nagra

- Steenbeck